The pitch for a fully autonomous SOC is compelling on paper. AI handles detection, investigation, and response end to end. Analysts step back. Alert queues empty themselves. The staffing crisis becomes irrelevant.

In practice, full autonomy introduces risks that most vendors understate: compounding errors without human correction, skills erosion across the team, and blind spots when AI encounters threats outside its training data. The organizations seeing the best results are not removing humans from the loop. They are repositioning them above it, directing AI agents that execute at machine scale while analysts retain the oversight and strategic judgment that keep security programs effective.

What does a fully autonomous SOC actually look like?

The "autonomous SOC" is an industry concept, not a single product. It describes a spectrum of automation in which AI handles progressively more of the detection and response workflow with progressively less human involvement, from basic SOAR playbooks to end-to-end automation with no human touchpoints.

The appeal is real. 62% of SOC professionals say their organization is not doing enough to retain top talent (SANS 2025). 48% of cybersecurity professionals report exhaustion staying current with threats and emerging technologies, and 47% feel overwhelmed by workload (ISC2 2025). When teams are understaffed and overwhelmed, the promise of automation that handles everything is hard to resist.

94% of cybersecurity leaders say AI is the most significant driver of change in cybersecurity, and 77% of organizations have already adopted AI-enabled tools for cybersecurity objectives (WEF 2026). The category is mainstream. The question is no longer whether to adopt AI, but how much autonomy to grant it.

The autonomy spectrum in security operations

Not every SOC sits at the same point on this spectrum. The progression typically moves through five levels:

- Manual triage — analyst handles every alert

- SOAR playbooks — predefined decision trees automate known workflows

- AI-assisted investigation — AI gathers evidence, analyst decides

- Agentic AI with human oversight — AI investigates and concludes, analyst reviews and directs

- Fully autonomous operation — no human review at any stage

Most organizations are somewhere in the middle. Only 30% have AI/ML as a formally defined part of SOC operations (SANS 2025). The gap between "we use some AI tools" and "AI runs our SOC autonomously" is large, and the risks of jumping to the far end of the spectrum are significant.

Where does full autonomy break down?

Full autonomy breaks down in several places: errors compound without human correction, the talent pipeline erodes, teams cannot verify decisions made by black-box automation, and novel threats fall outside the AI's training data.

Error compounding without human checkpoints

When an AI agent operates at full autonomy, a single misclassification can cascade. A true-positive alert closed as benign does not just create one missed detection. It creates a gap in the investigation chain that downstream processes inherit. Without human checkpoints, there is no correction mechanism until the damage surfaces, often weeks or months later.

Industry analysts expect this risk to materialize soon. Forrester predicts that an agentic AI deployment will cause a publicly disclosed data breach in 2026, noting that autonomous systems "sacrifice accuracy for speed of delivery" (Forrester 2026 Predictions). Speed without accuracy is not an efficiency gain. It is a liability.

Skills erosion and the talent pipeline problem

When Tier-1 and Tier-2 investigation work is fully automated, junior analysts never build the investigative instincts they need to become senior analysts. The pipeline dries up. The organization becomes dependent on a shrinking pool of senior staff who themselves have fewer opportunities to mentor and coach.

88% of organizations experienced at least one significant cybersecurity event due to skills deficiencies (ISC2 2025). Full autonomy does not solve the skills gap. It masks it in the short term while making it worse in the long term.

The trust problem with black-box automation

SOC teams will not trust what they cannot verify. Fully autonomous systems that lack transparency in their reasoning create adoption resistance and audit concerns. When an AI closes an alert, the analyst needs to understand why. When a regulator asks how a security decision was made, "the AI decided" is not a sufficient answer.

41% of organizations cite the need for human validation of AI-generated security responses before implementation as an adoption hurdle (WEF 2026). This is not resistance to AI. It is a reasonable demand for accountability. As Gartner notes, "to realize the full potential of AI in security operations, cybersecurity leaders must prioritize people as much as technology" (Gartner Top Cybersecurity Trends 2026).

Why does the agentic model work better?

The agentic model pairs AI autonomy for routine, well-defined tasks with human oversight for high-stakes decisions. AI agents investigate alerts, gather evidence across tools, and reach conclusions at machine speed. Analysts review findings, direct strategic hunting, and make the decisions that require organizational context and judgment.

This is not a compromise. It is how you get the speed of automation and the judgment of experienced analysts working together. Only 1 in 5 companies has a mature model for governance of autonomous AI agents (Deloitte Tech Trends 2026), which means the organizations that build this governance framework now will have a significant advantage.

Human-in-the-loop vs. human-on-the-loop

Within the agentic model, oversight itself operates on a spectrum. The distinction matters for how teams adopt AI and how quickly they realize its benefits.

Human-in-the-loop (HITL) means a human is in the critical path. The AI investigates and reaches a conclusion, but the process stops until an analyst takes action: approving a closure, confirming a finding, or authorizing a response. Nothing moves forward without human approval.

Human-on-the-loop (HOTL) means a human has full visibility into everything the AI does but is not required to advance the process. The AI proceeds autonomously while analysts supervise, reviewing conclusions and intervening when needed rather than gating every action.

Most organizations start with HITL. It builds trust. Analysts see the AI's reasoning, verify its conclusions, and develop confidence in its accuracy. Over time, as that confidence grows, teams graduate to HOTL for well-understood alert types, letting the AI auto-close benign investigations and initiate response actions while analysts shift their attention to the exceptions and strategic work that require human judgment.

This progression is not about removing humans. It is about repositioning them from gatekeepers to supervisors, freeing capacity without sacrificing oversight.
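
The difference between the two modes can be sketched in a few lines of Python. This is an illustrative sketch only, not any vendor's API: the names `OversightMode`, `Investigation`, and `next_step`, along with the verdict labels and returned action strings, are hypothetical and chosen for this example.

```python
from dataclasses import dataclass
from enum import Enum


class OversightMode(Enum):
    HITL = "human-in-the-loop"  # analyst approval gates every action
    HOTL = "human-on-the-loop"  # AI proceeds while the analyst supervises


@dataclass
class Investigation:
    alert_type: str
    ai_verdict: str        # hypothetical labels: "benign" or "malicious"
    reasoning: list[str]   # step-by-step reasoning shown to the analyst


def next_step(inv: Investigation, mode: OversightMode) -> str:
    """Return what happens after the AI reaches a conclusion."""
    if mode is OversightMode.HITL:
        # The human is in the critical path: nothing moves until they act.
        return "await_analyst_approval"
    # HOTL: the AI proceeds on its own and the action is logged for review.
    if inv.ai_verdict == "benign":
        return "auto_close_and_log"
    return "initiate_response_and_notify"
```

In both modes the analyst sees the same reasoning. The only thing that changes is whether the workflow waits for them.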

Where AI excels and where humans must lead

AI handles the work that benefits from speed, scale, and consistency:

- Routine alert triage and investigation

- Evidence gathering across multiple tools

- 24/7 coverage without fatigue

- Investigation of 100% of alerts, not just high-priority ones

Humans handle the work that requires judgment, context, and creativity:

- Novel threat assessment

- Policy decisions about response actions

- Sensitive escalations

- Strategic threat hunting direction

- Coaching the AI on what matters to the organization

The data is consistent with this division. Organizations with extensive AI use in security average $3.62 million per breach, compared to $5.52 million for those without AI (IBM 2025), a difference of $1.9 million, or roughly 34%. The benefit comes from AI handling volume at scale, not from removing humans from oversight.

How do you find the right balance for your team?

The right balance depends on your team's maturity, your alert volume, and how much trust you have built with your AI tooling. The HITL-to-HOTL progression provides a practical adoption path: start with human-in-the-loop controls, then graduate to human-on-the-loop as confidence builds.

Start with human-in-the-loop where the pain is greatest. Routine Tier-1 alert triage consumes the most analyst hours and benefits most from automation. 85% of SOCs cite endpoint security alerts as their primary response trigger (SANS 2025), making them a natural starting point. Begin with HITL: let the AI investigate, but require analyst approval before closing alerts or triggering response actions.

Graduate to human-on-the-loop as trust builds. Once your team has verified the AI's reasoning across hundreds of investigations, shift well-understood alert types to HOTL. Let the AI auto-close benign investigations and initiate routine response actions while analysts monitor outcomes and intervene on exceptions. This is where the real capacity gains emerge.
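
One way to make "as trust builds" concrete is to set an explicit promotion rule per alert type. The sketch below is a hypothetical example, not a recommended standard: `ReviewStats`, `ready_for_hotl`, and both default thresholds (300 reviewed investigations, 98% analyst agreement) are assumptions that each team would need to set for itself.

```python
from dataclasses import dataclass


@dataclass
class ReviewStats:
    reviewed: int  # investigations an analyst has checked under HITL
    agreed: int    # of those, how often the analyst agreed with the AI


def ready_for_hotl(stats: ReviewStats,
                   min_reviewed: int = 300,       # placeholder threshold
                   min_agreement: float = 0.98    # placeholder threshold
                   ) -> bool:
    """Hypothetical rule for moving one alert type from HITL to HOTL."""
    if stats.reviewed < min_reviewed:
        # Not enough verified investigations to judge this alert type yet.
        return False
    return stats.agreed / stats.reviewed >= min_agreement
```

Evaluating the rule per alert type keeps the graduation incremental: a well-understood category can move to HOTL while newer or noisier categories stay gated.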

Build trust through transparency. Choose AI that documents its reasoning step by step. If your team cannot review how the AI reached a conclusion, you do not have oversight. You have hope. Transparency is what makes the HITL-to-HOTL transition possible.

Preserve the talent pipeline. Ensure junior analysts still get exposure to investigative work through HITL workflows. Use AI to coach and upskill analysts, not to bypass them.

Measure what matters. Track Mean Time to Conclusion (MTTC) across both HITL and HOTL workflows to validate that your progression is producing better outcomes, not just faster closures.
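
As a rough illustration, MTTC can be computed per workflow from investigation timestamps. This sketch assumes MTTC means the average time from alert creation to a recorded conclusion. The function name `mttc_by_mode` and the record layout are hypothetical, and the sample timestamps are made up for the example.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from statistics import mean


def mttc_by_mode(records):
    """records: iterable of (mode, alert_created, concluded) tuples.

    Returns the mean time to conclusion per mode as a timedelta.
    """
    durations = defaultdict(list)
    for mode, created, concluded in records:
        durations[mode].append((concluded - created).total_seconds())
    return {mode: timedelta(seconds=mean(secs))
            for mode, secs in durations.items()}


# Made-up sample data: two HITL investigations and one HOTL investigation.
t0 = datetime(2026, 1, 1, 9, 0)
sample = [
    ("HITL", t0, t0 + timedelta(minutes=30)),
    ("HITL", t0, t0 + timedelta(minutes=10)),
    ("HOTL", t0, t0 + timedelta(minutes=4)),
]
for mode, value in mttc_by_mode(sample).items():
    print(mode, value)  # HITL 0:20:00, then HOTL 0:04:00
```

A lower MTTC only shows speed. To confirm that outcomes are also better, pair it with a quality signal such as how often conclusions are later overturned on review.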

A practical framework for SOC automation decisions

The Agentic SOC model operationalizes this framework. AI agents handle the triage and evidence gathering at machine scale. Analysts start with full approval authority and graduate to a supervisory role as trust builds. Neither side works as well alone.

Key takeaways

- Full SOC autonomy introduces real risks: compounding errors, skills erosion, and blind spots with novel threats. Speed without accuracy is a liability, not an efficiency gain.

- The agentic model pairs AI execution speed with human judgment and oversight, delivering better security outcomes than either approach alone.

- Human-in-the-loop (analyst approves) and human-on-the-loop (analyst supervises) are distinct operating modes. Start with HITL to build trust, then graduate to HOTL for well-understood alert types as confidence grows.

- Start automation where the pain is greatest (Tier-1 alert triage), build trust through transparent AI reasoning, and preserve the talent pipeline by keeping analysts engaged in investigative and strategic work.

- Measure success by outcomes (MTTC, breach cost reduction), not just automation coverage.

See the agentic model in action

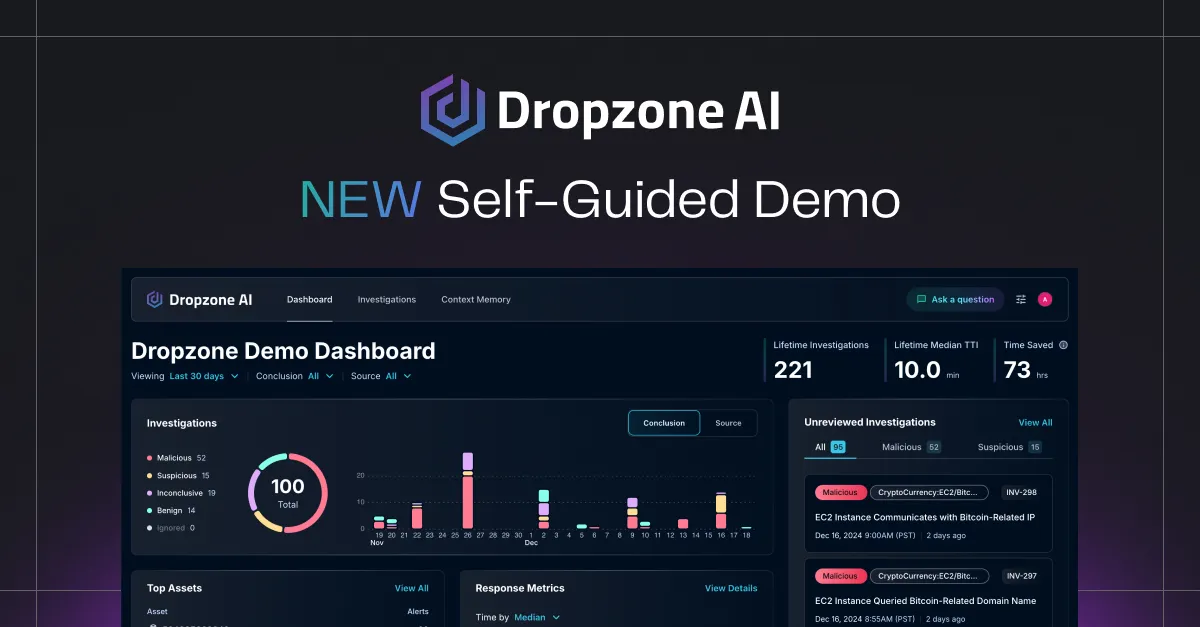

Dropzone AI's Agentic SOC platform operationalizes the balance this article describes. AI agents investigate alerts at machine speed with full transparency into their reasoning. Start with human-in-the-loop controls where analysts approve closures and response actions. Then graduate to human-on-the-loop as confidence builds, letting Dropzone auto-close benign investigations and initiate routine responses while your team supervises and focuses on strategic work.
