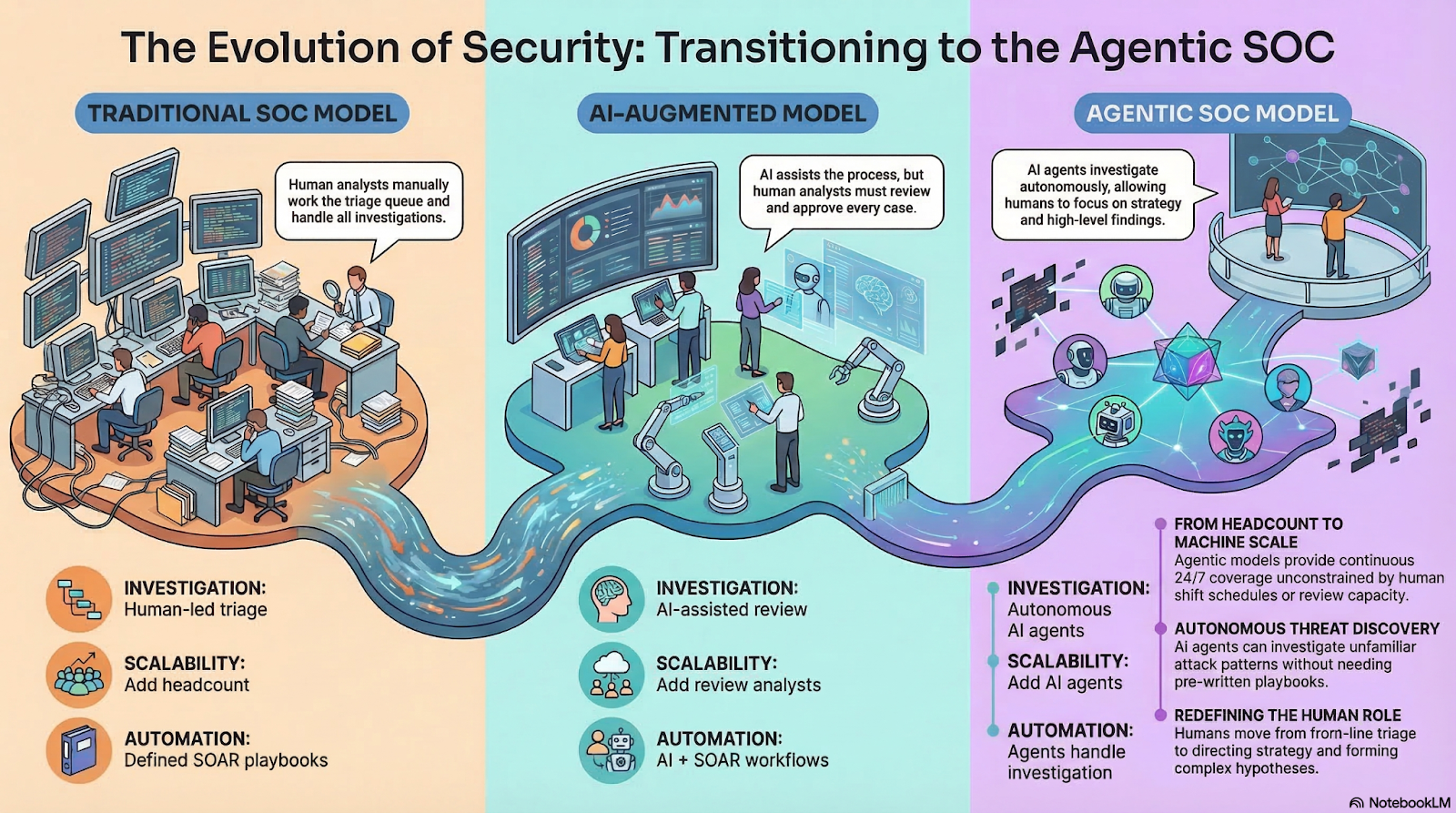

Most security teams are fighting a math problem, not a skills problem. The volume of alerts modern environments generate has outpaced what human analysts can investigate, while attackers compress the time between initial access and impact. Hiring more analysts scales linearly. The threat surface scales exponentially.

The agentic SOC model is built for that asymmetry. Rather than routing every alert through a human triage queue, it deploys AI agents that investigate autonomously, around the clock, using the same tools your analysts use today. Analysts remain central, focused on strategy and acting on findings rather than working the queue.

This shift is happening now for a specific reason. Attackers are using AI to scale their operations faster than defenders can respond with human capacity. Alert volumes have grown beyond what any team can investigate manually. Earlier automation tools addressed parts of this problem, but not all of it. The agentic model is built for what those tools left unresolved.

This article covers what the agentic SOC model is, how it differs from earlier operational approaches, and what it means for security teams in practice.

Why Are Traditional Security Operations Approaches Struggling to Keep Up?

The core challenge in modern security operations is a coverage gap. Security teams have more data and telemetry than ever, but not the capacity to act on it.

The numbers make the gap concrete:

- 40% of security alerts go uninvestigated (SACR 2025 AI SOC Market Landscape) — not because analysts aren't trying, but because volume has outpaced capacity

- 73% of security teams cite excessive false positives as their top detection challenge (SANS 2025 Detection and Response Survey) — analysts spend more time ruling things out than acting on real threats

- Enterprise Security Information and Event Management (SIEM) tools cover only 21% of MITRE ATT&CK techniques despite having telemetry for over 90% of the environment (CardinalOps 2025 research via Help Net Security) — the detection gap is structural, not a configuration problem

Security teams have the data. They do not have the capacity to use it.

Security Orchestration, Automation, and Response (SOAR) platforms were designed to help by automating specific response workflows. They work well for defined, repeatable scenarios. The problem is that SOAR requires humans to anticipate every attack scenario and write a playbook in advance. When a novel threat appears, no playbook matches and the work falls back to manual investigation. SOAR addresses throughput for known scenarios. It does not address the coverage gap for unknown ones.
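The playbook limitation is easy to see in a minimal Python sketch. The alert types, the `PLAYBOOKS` table, and the `route_alert` function are hypothetical names chosen for illustration; they do not reflect any particular SOAR product's API.

```python
from typing import Callable, Dict

# Hypothetical playbooks: each one is written in advance for a known scenario.
def handle_phishing(alert: dict) -> str:
    return f"quarantined email for {alert['user']}"

def handle_malware(alert: dict) -> str:
    return f"isolated endpoint {alert['host']}"

PLAYBOOKS: Dict[str, Callable[[dict], str]] = {
    "phishing": handle_phishing,
    "malware": handle_malware,
}

def route_alert(alert: dict) -> str:
    """Run the matching playbook, or fall back to the manual queue."""
    playbook = PLAYBOOKS.get(alert["type"])
    if playbook is None:
        # Nobody anticipated this scenario, so the work returns to a human.
        return "manual investigation required"
    return playbook(alert)

if __name__ == "__main__":
    print(route_alert({"type": "phishing", "user": "alice"}))
    print(route_alert({"type": "oauth_token_abuse", "user": "bob"}))
```

The second alert has no entry in the table, so it lands in the manual queue. That is the coverage gap in miniature: throughput improves only for the scenarios someone wrote down ahead of time.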

The agentic SOC model addresses the gap differently. AI agents can investigate threats that no playbook anticipated, work every alert regardless of shift schedule, and deliver findings with full context for human analysts to review and act on.

What Does "Agentic" Actually Mean?

"Agentic" refers to AI systems that can perceive their environment, reason about what they observe, plan multi-step actions, and execute those actions autonomously, without requiring human direction at each decision point.

This is distinct from earlier forms of AI and automation, such as playbook-driven SOAR, which depend on a human anticipating each scenario in advance.

In a security context, this means an AI agent can receive an alert, query multiple data sources, evaluate the evidence, reach a conclusion, and deliver a full investigation report without a human touching it. The defining characteristic is autonomous action. This is what separates the agentic model from human-in-the-loop models where every conclusion routes through a human reviewer before anything happens.

The practical implication: under the agentic model, AI agents can investigate every alert, not just the ones that rise to the top of a human analyst's queue. Coverage is no longer constrained by headcount.

How the Agentic SOC Model Compares to Other Approaches

The agentic SOC is a model for how security operations work is structured and executed. The most meaningful comparisons are between operational models, not between a model and a tool. Here is how the three primary models differ:

How does the agentic model differ from managed detection and response (MDR)?

Managed Detection and Response (MDR) outsources detection and response to a managed service staffed by human analysts at a third party. The agentic SOC model keeps detection and response in-house, with AI agents handling front-line investigation and human analysts retaining full visibility and control over strategy. These are not competing choices for every organization; some teams use both. The distinction is where the work happens and who controls it.

How does the agentic model differ from AI-augmented, human-in-the-loop models?

Some AI security products route every case through a human reviewer before a conclusion is delivered. This approach improves analyst throughput but creates a new bottleneck: coverage is still constrained by how fast humans can review AI outputs. The agentic model removes that bottleneck. AI agents deliver conclusions directly, with every reasoning step logged and available for analyst review after the fact. Analysts are in the loop strategically, not operationally for every case.

What is SOAR's role in an agentic SOC model?

SOAR is a tool, not a competing model. SOAR handles defined, repeatable response workflows well, and those workflows do not disappear when an organization adopts the agentic model. Most implementations run SOAR and AI agents together: SOAR manages specific automated response actions (isolating an endpoint, closing a ticket, notifying a team), while AI agents handle the investigation and reasoning that SOAR cannot do. The agentic model changes what AI agents are responsible for, not whether SOAR has a role.
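One way to picture this division of labor is the sketch below, in which an agent produces a verdict and predefined response workflows act on it. Everything here is an assumption for illustration: the `Finding` type, the toy verdict rule, and the action names stand in for whatever a real agent and SOAR platform would expose.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class Finding:
    alert_id: str
    verdict: str  # "malicious" or "benign" in this sketch
    host: str
    actions_taken: List[str] = field(default_factory=list)

def investigate(alert: dict) -> Finding:
    """Stand-in for the AI agent's investigation and reasoning."""
    # Assumption: a real agent would gather and weigh evidence here.
    verdict = "malicious" if alert.get("suspicious_process") else "benign"
    return Finding(alert_id=alert["id"], verdict=verdict, host=alert["host"])

def run_soar_actions(finding: Finding) -> Finding:
    """Stand-in for predefined, repeatable SOAR response workflows."""
    if finding.verdict == "malicious":
        finding.actions_taken += ["isolate_endpoint", "notify_team"]
    else:
        finding.actions_taken.append("close_ticket")
    return finding

if __name__ == "__main__":
    alert = {"id": "A-1", "host": "ws-01", "suspicious_process": True}
    print(run_soar_actions(investigate(alert)))
```

The reasoning lives in `investigate`; the response steps stay in `run_soar_actions`, where they remain fixed and auditable regardless of how the verdict was reached.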

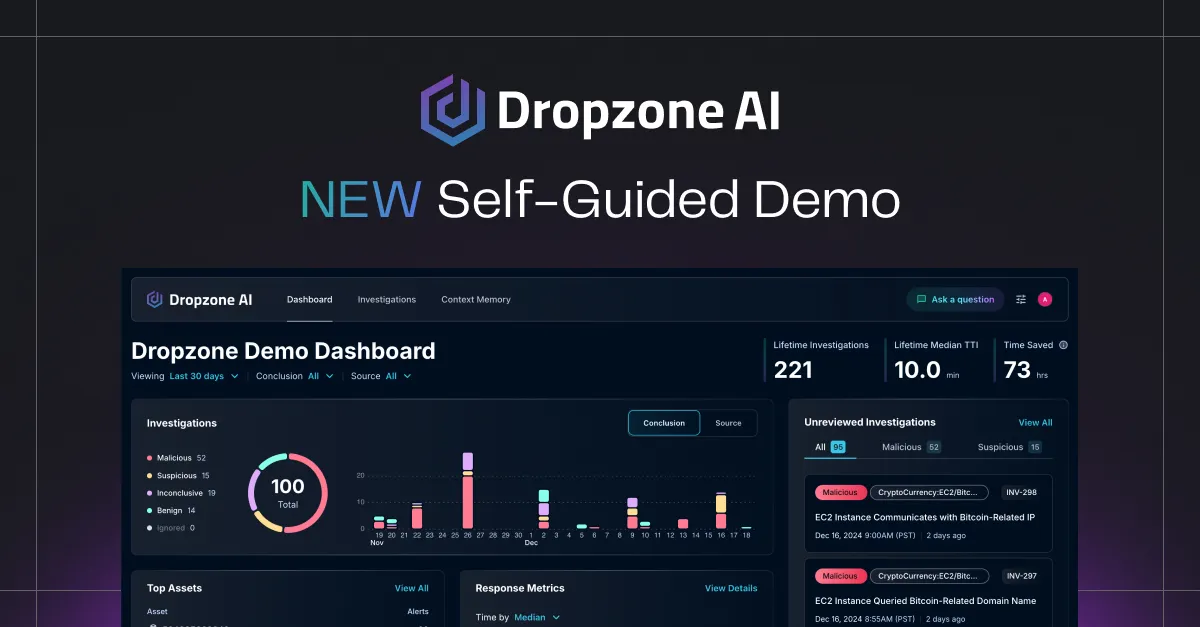

How Dropzone AI Implements the Agentic SOC Model

The agentic SOC model can be implemented with a team of specialized AI agents, each focused on a specific function within the detection and response cycle. At Dropzone AI, the current and planned agent roster includes:

- AI SOC Analyst: Investigates every alert across the full tool stack, 24/7. This is the core agent, available now. It replicates the techniques of an experienced analyst: querying SIEM, EDR, identity, cloud, and email sources; correlating evidence; and delivering a complete investigation with an escalation recommendation.

- AI Threat Hunter (Q2 2026): Runs hypothesis-driven threat hunts across your full environment. Compresses 10 to 20 hours of manual hunting work into approximately one hour.

- AI Threat Intel Analyst (Q2 2026): Processes threat intelligence 24/7, creating pre-built investigation templates for emerging threats and vulnerabilities as they surface.

- Coming: AI Detection Engineer, AI Security Data Engineer, and AI Forensic Analyst are on the roadmap with availability to be announced.

To make this concrete: when an alert fires at 2 AM, here is what happens under Dropzone's implementation of the agentic model:

- The AI SOC Analyst receives the alert immediately

- It queries your SIEM for historical activity around the same user or IP

- It checks your EDR for endpoint behavior and pulls threat intelligence on related indicators

- It cross-references identity and cloud logs to build a complete evidence set

- Within minutes, it delivers a full investigation (what happened, what evidence was gathered, what the conclusion is, and whether escalation is warranted), with every step logged and visible for analyst review

No analyst had to wake up. No alert sat in a queue until morning.
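The sequence above can be sketched in a few lines of Python. This is an illustrative outline under stated assumptions, not Dropzone's implementation: the four `query_*` functions return canned data in place of real SIEM, EDR, threat intelligence, and identity or cloud lookups, and the decision rule is a deliberately simple placeholder.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

@dataclass
class Investigation:
    alert_id: str
    steps: List[str] = field(default_factory=list)  # trail for analyst review
    evidence: Dict[str, dict] = field(default_factory=dict)
    conclusion: str = ""
    escalate: bool = False

# Hypothetical lookups returning canned data for illustration.
def query_siem(alert: dict) -> dict:
    return {"prior_logins_from_ip": 0}

def query_edr(alert: dict) -> dict:
    return {"suspicious_process": True}

def query_threat_intel(alert: dict) -> dict:
    return {"ip_reputation": "malicious"}

def query_identity_and_cloud(alert: dict) -> dict:
    return {"mfa_bypassed": False}

SOURCES: Dict[str, Callable[[dict], dict]] = {
    "siem": query_siem,
    "edr": query_edr,
    "threat_intel": query_threat_intel,
    "identity_cloud": query_identity_and_cloud,
}

def investigate(alert: dict) -> Investigation:
    inv = Investigation(alert_id=alert["id"])
    for name, query in SOURCES.items():
        inv.evidence[name] = query(alert)
        inv.steps.append(f"queried {name}")
    # Toy decision rule, an assumption for this sketch only.
    malicious = (
        inv.evidence["edr"]["suspicious_process"]
        and inv.evidence["threat_intel"]["ip_reputation"] == "malicious"
    )
    inv.conclusion = "likely malicious" if malicious else "likely benign"
    inv.escalate = malicious
    inv.steps.append(f"concluded: {inv.conclusion}")
    return inv

if __name__ == "__main__":
    result = investigate({"id": "A-2", "user": "alice", "ip": "203.0.113.7"})
    print(result.conclusion, result.steps)
```

The `steps` list is the part that matters for oversight: every query and the final conclusion are recorded, so an analyst can review the reasoning after the fact.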

What distinguishes Dropzone's implementation from a collection of standalone AI tools is the collaboration architecture. All agents operate on a shared foundation:

- Common reasoning model — agents apply consistent security domain knowledge across every investigation

- Shared tool integrations — every agent can query the same security stack without duplication or gaps

- Company context — agents build and share knowledge of the organization's environment over time

- External threat intelligence — current threat data informs every investigation across the full agent team

Because agents share this foundation, they can task each other. One agent that surfaces a suspicious lateral movement pattern can direct another to pursue that hypothesis across the full environment. Without agents collaborating in this way, that handoff would require manual coordination between human analysts. Capability expands as the agent team grows.
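A rough sketch of that handoff, assuming a shared context object with a simple task queue, might look like the following. The class names, the `tasks` queue, and the message fields are all hypothetical; the article does not describe how the agents actually exchange work.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, Dict, List

@dataclass
class SharedContext:
    """Hypothetical shared foundation: organizational knowledge plus a task queue."""
    knowledge: Dict[str, str] = field(default_factory=dict)
    tasks: Deque[dict] = field(default_factory=deque)

class SocAnalystAgent:
    def __init__(self, ctx: SharedContext) -> None:
        self.ctx = ctx

    def investigate(self, alert: dict) -> None:
        if alert.get("pattern") == "lateral_movement":
            # Record what was learned and hand a hypothesis to the hunter.
            self.ctx.knowledge[alert["host"]] = "possible lateral movement source"
            self.ctx.tasks.append({
                "for": "threat_hunter",
                "hypothesis": f"lateral movement from {alert['host']}",
            })

class ThreatHunterAgent:
    def __init__(self, ctx: SharedContext) -> None:
        self.ctx = ctx

    def run_pending_hunts(self) -> List[str]:
        hunts: List[str] = []
        remaining: Deque[dict] = deque()
        while self.ctx.tasks:
            task = self.ctx.tasks.popleft()
            if task["for"] == "threat_hunter":
                hunts.append(f"hunting: {task['hypothesis']}")
            else:
                remaining.append(task)  # leave other agents' tasks queued
        self.ctx.tasks.extend(remaining)
        return hunts

if __name__ == "__main__":
    ctx = SharedContext()
    SocAnalystAgent(ctx).investigate({"host": "ws-07", "pattern": "lateral_movement"})
    print(ThreatHunterAgent(ctx).run_pending_hunts())
```

Because both agents read and write the same context, no human has to relay the hypothesis from one to the other.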

Dropzone supports 90+ integrations. AI agents use all tools in the existing stack, just as human analysts do, with no data normalization, log migration, or playbook configuration required to get started.

Why Is the Agentic SOC Model Viable Right Now?

The concept of AI agents doing security work is not new. What changed is that three converging factors brought the model from theoretical to operational.

LLMs matured for security reasoning. Large language models can now reason across security context, interpret threat intelligence, correlate evidence across disparate data sources, and plan multi-step investigations. Earlier AI models could not do this reliably enough to operate in a production SOC environment.

Integration depth exists. Modern security stacks are API-accessible. SIEM, EDR, cloud platforms, and identity providers all expose data that AI agents can query at scale. This was not true five years ago.

Adversarial AI pressure is acute. AI-assisted attacks are scaling faster than human defenders can respond. The asymmetry is visible in alert volumes, dwell times, and the speed at which new exploits propagate. Machine-speed offense requires machine-speed defense.

The deployment data reflects that the model has crossed the viability threshold. Dropzone AI has reached 300+ deployments, including ECS, a top-5 Managed Security Service Provider (MSSP) in North America, which sends 30,000 alerts per month through Dropzone. Gartner named Dropzone a Cool Vendor for the Modern SOC and listed it as a sample vendor in the 2025 Hype Cycle for Security Operations.

Outcome data from production deployments is specific:

- 85% reduction in manual alert investigation (Zapier case study)

- 5x faster Mean Time to Respond (MTTR) (Indiana Farm Bureau and Pipe case studies)

- 90% faster escalated investigations (Pipe case study)

These are measured results from live deployments, not projections.

For organizations tracking these outcomes in their own environment, see Threat Hunting Metrics for a framework on what to measure.

Key Takeaways

- The agentic SOC is a security operations model in which AI agents handle front-line investigation work and human analysts direct strategy. It is a model for how detection and response work is structured, not a product.

- The model differs from the traditional SOC approach (human-heavy triage), MDR (outsourced human analysts), and AI-augmented models (AI assists, human reviews each case). The agentic model removes the human bottleneck from individual investigations.

- SOAR is a tool, not a competing model. Most organizations implementing the agentic SOC model continue using SOAR for defined response workflows alongside AI agents that handle investigation.

- Dropzone AI's implementation of the agentic model includes a team of specialized AI agents (AI SOC Analyst, available now; AI Threat Hunter and AI Threat Intel Analyst, Q2 2026) that collaborate, share context, and expand coverage as the team grows.

- Human analysts are essential to the model. Their role shifts from working the alert queue to directing agents, forming hypotheses, and advancing the security program.

- The model is viable now because LLMs matured for security reasoning, modern stacks expose the integration depth agents need, and adversarial AI pressure has made machine-speed defense a necessity.