Introduction

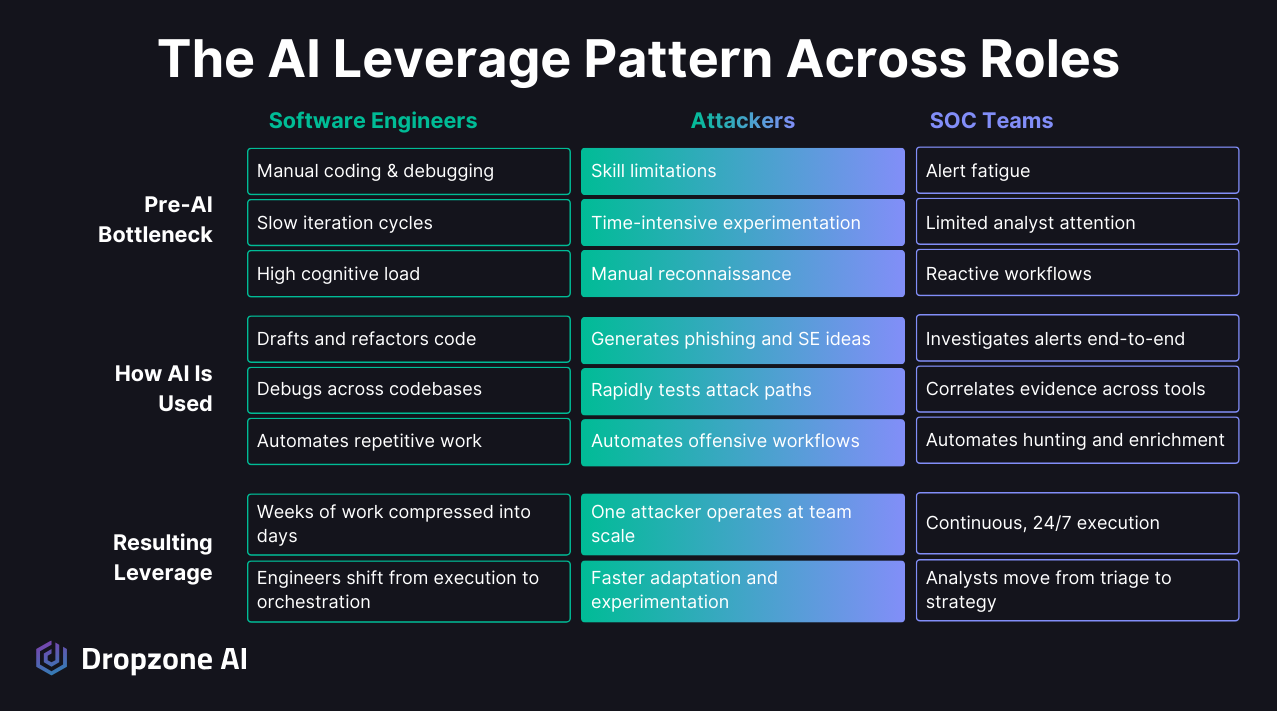

AI has already delivered step-change gains for software engineers and attackers. Engineers use AI to draft code, refactor large projects, debug complex logic, and reason across entire codebases, compressing weeks of work into days. Attackers apply the same leverage to scale reconnaissance, refine phishing campaigns, and experiment rapidly without deep expertise. Both groups have pushed past a long-standing constraint: human cognitive limits. SOC teams face the same bottleneck today: limited analyst attention, alert fatigue, and manual investigations. In this article, we will examine how engineers and attackers achieved these gains and how SOC teams can unlock similar machine-scale execution without replacing human analysts.

How Are Software Engineers Using AI to Move Faster?

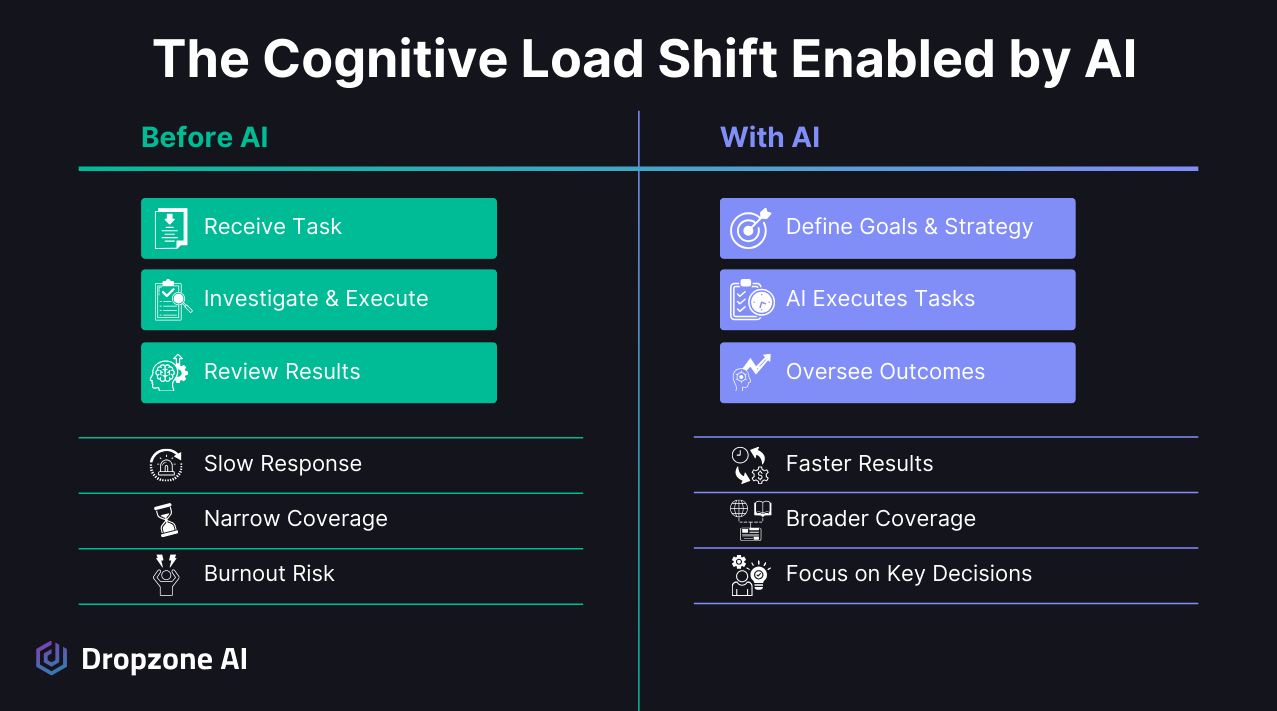

Engineers are using AI to shift from typing every instruction to directing and reviewing machine-generated output, which collapses weeks of work into days and concentrates human effort on intent, correctness, and design. The productivity gain comes from removing routine manual coding tasks.

How Did Engineers Shift from Doing the Work to Guiding It?

Modern engineers are no longer writing every line of code manually. Tools like Claude Code and Cursor are used to draft new features, refactor legacy modules, debug complex logic, generate tests, and reason across large codebases.

Instead of manually searching through files and stitching together context, engineers can prompt the system to analyze dependencies, identify inconsistencies, or suggest architectural improvements. Human engineers still provide the high-level direction and review the output, but they are freed from writing every line of code themselves.

The shift is subtle but powerful; software engineers move from typing every instruction to directing and reviewing AI output. Productivity gains come from:

- Faster iteration cycles across features, refactors, and tests.

- Less context switching between files and tools.

- Offloaded cognitive work such as boilerplate generation and pattern matching.

Human effort concentrates on defining intent, validating correctness, and shaping design decisions, while the AI handles execution-heavy tasks at machine speed.

What Should SOC Teams Notice in the Software Engineering Shift?

The engineering pattern translates directly to security: humans set direction and judgment, AI handles repetitive execution, and accountability stays with the analyst. That same division of labor is what unlocks machine-scale investigation in the SOC.

Engineers did not disappear when AI entered the workflow. Their role evolved; instead of functioning primarily as manual builders, they became orchestrators who define goals, constraints, and quality standards. The work shifted from producing every artifact directly to supervising and refining machine-generated output.

In this model, humans provide direction and judgment. They decide what problem to solve, what tradeoffs are acceptable, and whether the output aligns with broader system architecture.

The AI executes repetitive steps, explores variations, and processes large volumes of information without fatigue. This division of labor increases output without removing human accountability.

The same pattern applies cleanly to security operations. Analysts already define investigation strategy, risk tolerance, and escalation criteria. If AI can execute investigations at machine scale while analysts retain oversight and decision authority, the SOC can move beyond manual alert handling toward a higher-leverage operating model built around direction, validation, and strategic control.

How Are Attackers Using AI to Scale?

Attackers are using AI agents as offensive copilots to generate phishing variants, refine malware payloads, analyze systems for vulnerabilities, and cluster targets, which lowers skill barriers and lets a single operator run campaigns with the leverage of a small team. With the announcement of Mythos from Anthropic, it’s now accepted that attackers with AI are limited not by human cognitive ability but by the number of tokens they can afford. The result is a measurable asymmetry against defenders who still largely work manually.

What Is "Vibe Hacking" and Agent-Enabled Offense?

Instead of drafting phishing emails manually, attackers prompt a model to generate phishing variants tailored and refined across multiple dimensions:

- Role, industry, or current-event targeting for each variant.

- Tone adjustments to match the target audience's register.

- Localization for language and regional context.

- Psychological framing techniques designed to increase click-through rates.

What used to take hours of work can now be produced and optimized in minutes.

AI also accelerates technical experimentation when it comes to exploiting vulnerabilities. An attacker can ask an agent to:

- Analyze an error message returned from a failed exploit attempt.

- Suggest alternate payload structures when the first approach fails.

- Modify code to evade common detection signatures.

Even if the output requires editing, it provides a structured starting point that reduces research time, and model-driven feedback loops let attackers iterate rapidly without deep background knowledge in exploit development or scripting.

The result is disproportionate leverage; a single operator can coordinate:

- Infrastructure setup for command-and-control and delivery.

- Phishing content generation tailored by role and industry.

- Malware payload refinement against common detection signatures.

- Reconnaissance queries across targets and attack surface data.

Agents can help parse leaked credential dumps, summarize reconnaissance findings, or cluster targets based on likely susceptibility. Skill thresholds drop while experimentation velocity increases, which expands both the frequency and diversity of attack attempts.

It’s important to remember that cybercriminals operate like any other business: if AI increases the productivity of their human operators, adopting it is a pragmatic business decision.

Why Are Defenders Falling Behind?

Defenders fall behind because attackers operate at machine speed while SOCs absorb that pressure at human pace. When reconnaissance, payload design, and campaign iteration all accelerate, manual alert triage cannot keep up without an autonomous investigation layer.

This shift creates a measurable asymmetry: attackers gain speed in reconnaissance, creativity in payload design, and scale in campaign execution without expanding their teams.

They can generate more variations per campaign, adapt messaging based on response patterns, and test evasive techniques against common controls in compressed timeframes. The feedback cycle tightens, and iteration becomes continuous.

Traditional SOCs, however, often remain constrained by analyst attention and manual pivoting across tools. Alerts are triaged one by one, context is gathered through sequential queries, and investigations stall when queues grow.

When adversaries operate with machine-assisted experimentation and defenders rely primarily on human-paced analysis, the imbalance compounds. Without an autonomous, AI-driven investigation layer, defenders are forced to absorb machine-scale pressure with human-scale resources.

What Pattern Shows Up Across Every Role?

The same pattern recurs across engineers, attackers, and SOC teams: manual execution gives way to machine-scale leverage, and humans become the directors rather than the throughput constraint.

When you line these groups up side by side, a clear pattern emerges: software engineers, attackers, and SOC teams all benefit from AI in fundamentally the same way, by shifting from manual execution to machine-scale leverage.

How Can SOC Teams Catch Up?

SOC teams can catch up by adopting the same human-directed automation pattern: AI agents run investigations end-to-end across SIEM, EDR, cloud, and identity systems while analysts move from alert triage to strategic oversight. The cognitive ceiling lifts once execution runs at machine scale.

How Do Analysts Move from Chasing Alerts to Setting Strategy?

SOC teams are dealing with the same cognitive ceiling that engineers faced before AI-assisted development became mainstream. On any given shift, analysts:

- Juggle dozens of alerts at once.

- Pivot across multiple tools to chase each one.

- Manually gather context from SIEM, EDR, cloud, and identity systems.

- Synthesize findings under time pressure before the queue grows.

The constraint is not effort or expertise; it is the finite capacity of human attention. As alert volume grows and environments become more interconnected, that bottleneck becomes more visible.

AI SOC Analysts change the shape of the workload. A properly designed system can investigate alerts end-to-end, pivot across SIEM, EDR, cloud, and identity systems, correlate evidence, and assemble structured conclusions without waiting for manual queries at each step.
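To make the end-to-end flow concrete, here is a minimal Python sketch of an investigation that pivots across several data sources and assembles a structured conclusion. Everything in it is an illustrative assumption rather than any product's actual implementation: the `Alert`, `Finding`, and `Conclusion` types, the `query_siem`, `query_edr`, and `query_identity` functions (stubs returning canned data in place of real system queries), and the simple rule of counting suspicious findings to reach a verdict.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Alert:
    alert_id: str
    user: str
    host: str


@dataclass
class Finding:
    source: str
    detail: str
    suspicious: bool


@dataclass
class Conclusion:
    alert_id: str
    verdict: str
    findings: List[Finding] = field(default_factory=list)


# Hypothetical stand-ins for real SIEM, EDR, and identity queries.
# A real deployment would call each system's API here.
def query_siem(alert: Alert) -> Finding:
    return Finding("siem", f"5 failed logins for {alert.user}", True)


def query_edr(alert: Alert) -> Finding:
    return Finding("edr", f"no unusual processes on {alert.host}", False)


def query_identity(alert: Alert) -> Finding:
    return Finding("identity", f"new MFA device enrolled for {alert.user}", True)


def investigate(alert: Alert, sources: List[Callable[[Alert], Finding]]) -> Conclusion:
    """Query every source, then correlate the evidence into one verdict."""
    findings = [source(alert) for source in sources]
    suspicious_count = sum(1 for f in findings if f.suspicious)
    # Illustrative correlation rule: two or more suspicious signals escalate.
    if suspicious_count >= 2:
        verdict = "escalate"
    elif suspicious_count == 1:
        verdict = "review"
    else:
        verdict = "benign"
    return Conclusion(alert.alert_id, verdict, findings)


conclusion = investigate(
    Alert("A-1", "jdoe", "laptop-42"),
    [query_siem, query_edr, query_identity],
)
print(conclusion.verdict)
for finding in conclusion.findings:
    print(f"- [{finding.source}] {finding.detail}")
```

The point of the sketch is the shape of the work: the queries run without an analyst issuing each one, and what reaches the analyst is a verdict with its supporting evidence attached.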

Instead of spending hours collecting and organizing telemetry, analysts review decision-ready outputs and focus on validation, risk assessment, and escalation. The shift moves the SOC from reactive triage toward higher-value oversight and strategic control.

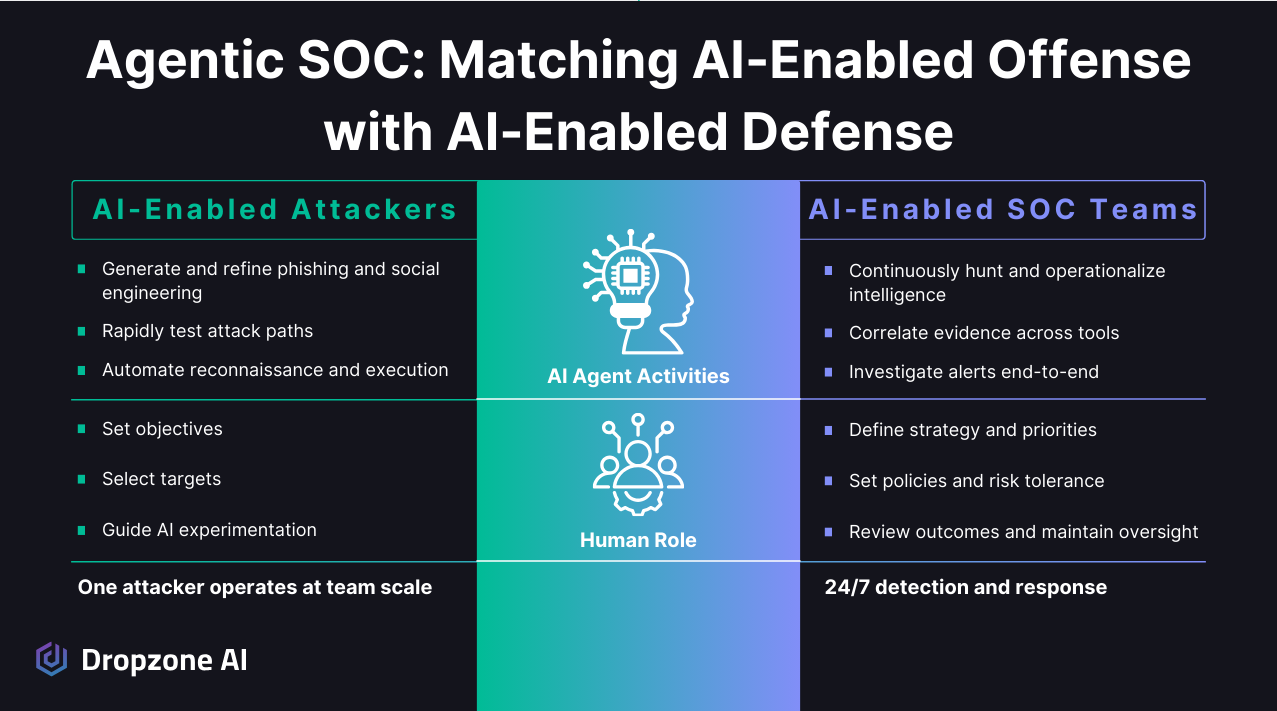

Why Is the Agentic SOC Model the Defensive Parallel?

The agentic SOC model is the defensive parallel because it applies the same orchestration patterns that engineers and attackers already use: AI agents operate continuously with defined roles, humans retain authority over policy and escalation, and investigations run at machine scale without removing human judgment.

Under the agentic SOC model, a team of AI agents operates continuously with defined roles. One may focus on alert investigation, another on proactive threat hunting, and another on operationalizing new intelligence across detections and response workflows. Read about Dropzone’s AI Threat Hunter and AI Threat Intelligence Analyst.

These agents collaborate in real time, sharing findings and updating context as new signals emerge. Because the agents can query systems programmatically and reason over large volumes of data, investigations are no longer linear or limited to a single console.

An alert can trigger a chain of hypothesis-driven exploration across identity logs, endpoint telemetry, cloud activity, and historical patterns. The AI can revisit assumptions as new evidence appears, reducing blind spots that often occur in manual workflows.

Humans remain in control of priorities, policies, and acceptable risk thresholds. They define:

- What constitutes escalation and when a case must reach an analyst.

- What actions can be automated versus held for human review.

- How findings should be handled operationally across downstream workflows.

The AI executes continuously at machine scale, handling repetitive investigative work and maintaining momentum across the environment. This division of labor allows SOC teams to match the speed and scale already being leveraged by engineers and attackers, without removing human judgment from the loop.
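The three human-defined decisions listed above (escalation criteria, which actions may be automated, and how findings are handled downstream) can be expressed as an explicit policy that the agents consult before acting. The following Python sketch is one hypothetical way to encode that; the `Policy` fields, the risk-score threshold, the action names, and the queue names are all assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, FrozenSet


class Disposition(Enum):
    AUTOMATE = "automate"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class Policy:
    # Risk score at or above which a case must reach an analyst.
    escalation_threshold: float
    # Actions the AI may take without human approval.
    auto_allowed_actions: FrozenSet[str]
    # Where each disposition is sent in downstream workflows.
    routes: Dict[Disposition, str]


def decide(policy: Policy, risk_score: float, proposed_action: str) -> Disposition:
    """Apply the human-defined policy to one proposed action."""
    if risk_score >= policy.escalation_threshold:
        return Disposition.HUMAN_REVIEW
    if proposed_action in policy.auto_allowed_actions:
        return Disposition.AUTOMATE
    # Anything not explicitly allowed is held for review by default.
    return Disposition.HUMAN_REVIEW


def route(policy: Policy, disposition: Disposition) -> str:
    """Look up the downstream destination for a disposition."""
    return policy.routes[disposition]


# Example policy values are hypothetical.
policy = Policy(
    escalation_threshold=0.7,
    auto_allowed_actions=frozenset({"close_benign", "enrich_ticket"}),
    routes={
        Disposition.AUTOMATE: "audit-log",
        Disposition.HUMAN_REVIEW: "analyst-queue",
    },
)

for score, action in [(0.2, "close_benign"), (0.2, "isolate_host"), (0.9, "close_benign")]:
    disposition = decide(policy, score, action)
    print(score, action, disposition.value, route(policy, disposition))
```

Defaulting to human review for any action not explicitly allowed keeps decision authority with the analyst even when the policy is incomplete.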

Conclusion

Software engineers have already demonstrated that AI expands human capability by removing cognitive bottlenecks and accelerating execution. Attackers have shown that the same technology can create an asymmetric advantage through speed, scale, and experimentation. SOC teams now have the opportunity to reclaim that balance by adopting the agentic SOC model, with AI agents that execute investigations at machine scale. The future of security operations is not about replacing analysts, but about equipping them with machine-scale investigation and execution while they retain judgment and control. Defenders do not need a different class of AI; they need to apply it with the same leverage and operational intent. Experience it firsthand in our self-guided demo and explore how AI delivers machine-scale investigation without replacing human control.
