You ran the proof of concept. The AI SOC agents investigated alerts, the results looked solid, and leadership approved a production rollout. Now they're live, processing real alerts alongside your team.

Most content about AI in the SOC stops here. It'll tell you about faster mean time to resolve (MTTR) and fewer false positives, and that's true. But that's only the beginning of what actually changes.

The 11 outcomes below come from organizations running Dropzone AI in production, including managed security service providers (MSSPs) processing tens of thousands of alerts per month.

The SANS 2025 Detection and Response Survey found that 73% of security teams cite excessive false positives as their top detection challenge. AI agents address that problem directly. But the real operational shifts begin once false positive overload is under control.

Here's what organizations report changing across Tier 1 operations, SOC KPIs, team capacity, downstream escalation quality, and deployment.

How Does AI Investigation Change Tier 1 Operations?

This is the first thing teams notice. The constant background noise drops.

AI agents investigate and close routine, low-risk alerts autonomously. Human analysts are left with the outliers: the alerts that actually require judgment and critical thinking. Tier 1 analysts stop acting as a clearinghouse and start focusing on the exceptions that matter.

What happens to routine alert volume?

Before AI agents, every alert sat in a queue until an analyst got to it. Routine phishing alerts, known false positives, low-risk configuration changes. They all waited their turn. After deployment, AI agents pick up these alerts immediately, run a full investigation, and close the ones that don't require human review.

The scale matters here. ECS, a top-5 MSSP in North America, routes 30,000 alerts per month through Dropzone AI. At that volume, having AI agents handle the routine alerts isn't optional. Without them, the queue buries the team.

Does investigation quality stay consistent across shifts?

Yes, and this is one of the less obvious but more important shifts. Every alert gets the same evidence collection steps, the same decision logic, and the same closure criteria. It doesn't matter whether it's 2 AM on a Saturday or 10 AM on a Tuesday. There's no variability from analyst experience levels, fatigue, or shift handoffs.

Teams report that even during peak periods and alert spikes, performance matches normal operations. Investigation quality stabilizes because it's no longer dependent on who's on shift.

What Happens to SOC KPIs When AI Agents Investigate Alerts?

The improvements show up in the metrics your leadership already tracks. You don't need new dashboards or abstract measures to demonstrate value. The ROI appears in operational and executive reporting that already exists.

Which metrics move first?

Four things tend to shift in the first reporting cycle:

- Backlog size drops. First-pass investigation clears the queue faster than human-only triage.

- SLA adherence improves. Alerts don't sit waiting for an available analyst during high-volume windows.

- Investigation quality stabilizes. Consistent closure criteria across every alert, every shift.

- Analyst utilization shifts. Time moves from routine triage toward higher-value analysis and escalation decisions.

These aren't new metrics. They're the ones your CISO already reports to the board.
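Two of those metrics, backlog size and SLA adherence, fall straight out of alert open and close timestamps. The sketch below is a minimal illustration under stated assumptions: the `Alert` record, its field names, and the helpers `backlog_size` and `sla_adherence` are hypothetical, not a Dropzone AI or SIEM schema, and the sample data is made up.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Alert:
    # Hypothetical alert record; field names are illustrative, not a product schema.
    alert_id: str
    created_at: datetime
    closed_at: Optional[datetime]  # None while the alert is still open
    sla: timedelta  # target time from creation to closure


def backlog_size(alerts: list[Alert], as_of: datetime) -> int:
    """Count alerts that existed at `as_of` and were still open at that moment."""
    return sum(
        1
        for a in alerts
        if a.created_at <= as_of and (a.closed_at is None or a.closed_at > as_of)
    )


def sla_adherence(alerts: list[Alert]) -> float:
    """Fraction of closed alerts that were closed within their SLA window."""
    closed = [a for a in alerts if a.closed_at is not None]
    if not closed:
        return 0.0  # assumption: report 0.0 when nothing has closed yet
    met = sum(1 for a in closed if a.closed_at - a.created_at <= a.sla)
    return met / len(closed)


# Illustrative data only: three alerts raised at the same moment with a 30-minute SLA.
t0 = datetime(2025, 1, 4, 2, 0)
alerts = [
    Alert("A1", t0, t0 + timedelta(minutes=10), timedelta(minutes=30)),
    Alert("A2", t0, t0 + timedelta(hours=2), timedelta(minutes=30)),
    Alert("A3", t0, None, timedelta(minutes=30)),
]
print(backlog_size(alerts, t0 + timedelta(hours=1)))  # 2 (A2 and A3 still open)
print(sla_adherence(alerts))  # 0.5 (A1 met its SLA, A2 did not)
```

Because both numbers come from timestamps most case management tools already record, they can be compared before and after deployment without building a new dashboard.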

How do mean times change across the alert lifecycle?

With AI agents handling the initial investigation, delays caused by queue depth, shift changes, and manual validation compress across the board. Mean time to acknowledge (MTTA) drops because alerts no longer wait in a queue. Mean time to investigate (MTTI) follows, since AI agents complete the investigative work immediately. And mean time to resolve (MTTR) compresses because the entire timeline from alert to closure shortens.

Indiana Farm Bureau saw 5x faster MTTR after deploying AI investigation. That's not a benchmark test. That's production alerts under real SLA pressure.
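A before/after figure like that is typically a ratio of mean times computed from per-alert lifecycle timestamps. Here is a minimal sketch, assuming each alert records when it was created, acknowledged, fully investigated, and resolved. `AlertTimeline` and `lifecycle_means` are hypothetical names, and the sketch measures all three means from alert creation; some teams measure MTTI from acknowledgment instead. The numbers are invented for illustration and are not anyone's measured results.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean


@dataclass
class AlertTimeline:
    # Hypothetical lifecycle timestamps for one alert (illustrative field names).
    created: datetime
    acknowledged: datetime
    investigated: datetime  # investigation complete
    resolved: datetime


def mean_minutes(deltas: list[timedelta]) -> float:
    """Average a list of durations, expressed in minutes."""
    return mean(d.total_seconds() / 60 for d in deltas)


def lifecycle_means(timelines: list[AlertTimeline]) -> dict[str, float]:
    """Compute MTTA, MTTI, and MTTR in minutes, each measured from alert creation."""
    return {
        "MTTA": mean_minutes([t.acknowledged - t.created for t in timelines]),
        "MTTI": mean_minutes([t.investigated - t.created for t in timelines]),
        "MTTR": mean_minutes([t.resolved - t.created for t in timelines]),
    }


base = datetime(2025, 1, 1)


def at(minutes: int) -> datetime:
    """Timestamp `minutes` after an arbitrary start, for illustrative data."""
    return base + timedelta(minutes=minutes)


# Invented numbers only, not measured results.
before = [
    AlertTimeline(at(0), at(30), at(90), at(120)),
    AlertTimeline(at(0), at(50), at(110), at(180)),
]
after = [
    AlertTimeline(at(0), at(1), at(5), at(20)),
    AlertTimeline(at(0), at(1), at(7), at(55)),
]
b, a = lifecycle_means(before), lifecycle_means(after)
print(b)  # {'MTTA': 40.0, 'MTTI': 100.0, 'MTTR': 150.0}
print(a)  # {'MTTA': 1.0, 'MTTI': 6.0, 'MTTR': 37.5}
print(b["MTTR"] / a["MTTR"])  # 4.0, i.e. "4x faster MTTR"
```

Note that a speedup ratio says nothing about variance; a few long-running incidents can dominate a mean, so some teams also track medians alongside these figures.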

The data holds up beyond individual case studies too. A controlled Cloud Security Alliance study of 148 participants found that AI-augmented analysts completed investigations 61% faster than those working without AI assistance.

How Do Teams Handle More Alerts Without More Headcount?

This is the section that matters most to budget conversations, and it requires honest framing.

AI agents don't replace analysts. They absorb the volume growth that would otherwise require proportional hiring. When routine investigation is handled autonomously, the team covers more ground without adding headcount, and the people on the team spend their time on work that actually requires their expertise.

What happens during alert spikes, weekends, and holidays?

Before AI agents, alert spikes meant backlog. Weekends meant queues that accumulated until Monday morning. Holidays meant reduced coverage and a pile of uninvestigated alerts waiting for the team to return.

After deployment, AI agents keep investigating around the clock. Queues stay manageable during surges because the agents don't take breaks, don't slow down during off-hours, and don't carry over backlogs that compound into the next business day.

Where does analyst time actually go?

This is the shift that changes how the role feels day-to-day. Analysts spend less time validating obvious false positives and more time on true threat analysis, escalation decisions, and follow-on investigation.

Zapier's security team cut 85% of manual alert investigation after deploying Dropzone AI. That's capacity returned to the team for work that requires human judgment, like deciding whether an alert is part of a broader campaign, tracing lateral movement, or escalating a confirmed threat.

That shift in how analysts spend their time is one of the outcomes teams talk about most.

What Changes Downstream When Every Alert Arrives Pre-Investigated?

The compounding value shows up after Tier 1. When escalated alerts arrive with context, evidence, and preliminary conclusions already assembled, the analyst or responder receiving them starts further ahead.

How does escalation quality change?

Before AI agents, an escalated alert often arrived as a raw signal. The receiving analyst had to re-collect evidence, validate whether the alert mattered, and build context before they could make a decision. That's duplicated work.

After deployment, escalated alerts arrive pre-investigated. The evidence is collected, the context is assembled, and a preliminary conclusion is attached. The analyst's job shifts from "figure out what happened" to "decide what to do about it."

Pipe's security team reported 90% faster escalated investigations after deploying AI agents. The speed didn't come from analysts working faster. It came from cases arriving already worked up, so responders could decide and act instead of revalidating from scratch.
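One way to picture the difference between a raw signal and a pre-investigated escalation is as a record that either carries evidence, context, and a preliminary conclusion or leaves the receiving analyst to rebuild them. The sketch below is hypothetical throughout: `Evidence`, `EscalationPackage`, and `missing_work` are illustrative names, not a Dropzone AI output format.

```python
from dataclasses import dataclass, field


@dataclass
class Evidence:
    source: str  # which tool produced it, e.g. "EDR" (illustrative)
    finding: str


@dataclass
class EscalationPackage:
    # Hypothetical shape of a pre-investigated escalation, not a product format.
    alert_id: str
    evidence: list[Evidence] = field(default_factory=list)
    context: dict[str, str] = field(default_factory=dict)
    preliminary_conclusion: str = ""


def missing_work(pkg: EscalationPackage) -> list[str]:
    """List what the receiving analyst would still have to rebuild before deciding."""
    gaps = []
    if not pkg.evidence:
        gaps.append("collect evidence")
    if not pkg.context:
        gaps.append("assemble context")
    if not pkg.preliminary_conclusion:
        gaps.append("form a conclusion")
    return gaps


raw = EscalationPackage("ALERT-1")  # a raw signal: nothing worked up yet
worked = EscalationPackage(
    "ALERT-2",
    evidence=[Evidence("EDR", "unsigned binary launched from an Office macro")],
    context={"user": "finance team member", "asset": "managed laptop"},
    preliminary_conclusion="likely malicious; recommend isolating the host",
)
print(missing_work(raw))  # ['collect evidence', 'assemble context', 'form a conclusion']
print(missing_work(worked))  # []
```

An empty `missing_work` list is the "decide what to do about it" starting point; every item in a non-empty list is duplicated work the responder absorbs.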

Why Does Deployment Work Without Disrupting Existing Workflows?

Deployment risk is the number-one blocker for most security teams evaluating AI investigation. The concern is reasonable. Nobody wants to rearchitect their SOC to adopt a new tool.

In practice, AI agents integrate into your existing alert ingestion, case management, and escalation paths. They query your SIEM, EDR, identity, and cloud tools via API, the same way a human analyst would. Escalation paths stay intact, and results show up in the dashboards you already use.

No log normalization. No data migration. No playbook creation. The organizations reporting these 11 outcomes didn't redesign their SOC to get them. They plugged AI agents into the workflow they already had.
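The integration pattern described above, querying each existing tool through its API at investigation time instead of migrating or normalizing logs first, can be sketched as a set of per-tool connectors. Everything here is an assumption for illustration: `ToolConnector`, `StubConnector`, and `collect_evidence` are hypothetical names, not Dropzone AI's connector API, and the stub returns canned answers where a real connector would call a SIEM, EDR, identity, or cloud API.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass
class Alert:
    # Minimal illustrative alert; real alerts carry far more fields.
    alert_id: str
    user: str
    host: str


class ToolConnector(Protocol):
    # Hypothetical interface: one connector per existing tool (SIEM, EDR,
    # identity, cloud). A production connector would call that tool's API.
    name: str

    def query(self, alert: Alert) -> dict[str, str]: ...


@dataclass
class StubConnector:
    # Offline stand-in for a real API client so the sketch runs anywhere.
    name: str
    responses: dict[str, str]

    def query(self, alert: Alert) -> dict[str, str]:
        # Fill the canned answers with this alert's user and host.
        return {k: v.format(user=alert.user, host=alert.host) for k, v in self.responses.items()}


def collect_evidence(alert: Alert, connectors: list[ToolConnector]) -> dict[str, dict[str, str]]:
    """Query every connected tool in place; no logs are copied or normalized first."""
    return {c.name: c.query(alert) for c in connectors}


alert = Alert("A-7", user="jdoe", host="laptop-42")
connectors = [
    StubConnector("edr", {"process": "powershell.exe spawned on {host}"}),
    StubConnector("identity", {"sign_in": "{user} signed in from a new country"}),
]
evidence = collect_evidence(alert, connectors)
print(evidence["edr"]["process"])  # powershell.exe spawned on laptop-42
```

Keeping each tool behind its own connector means adding or swapping a tool touches one connector, while the alert ingestion and escalation paths around it stay unchanged.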

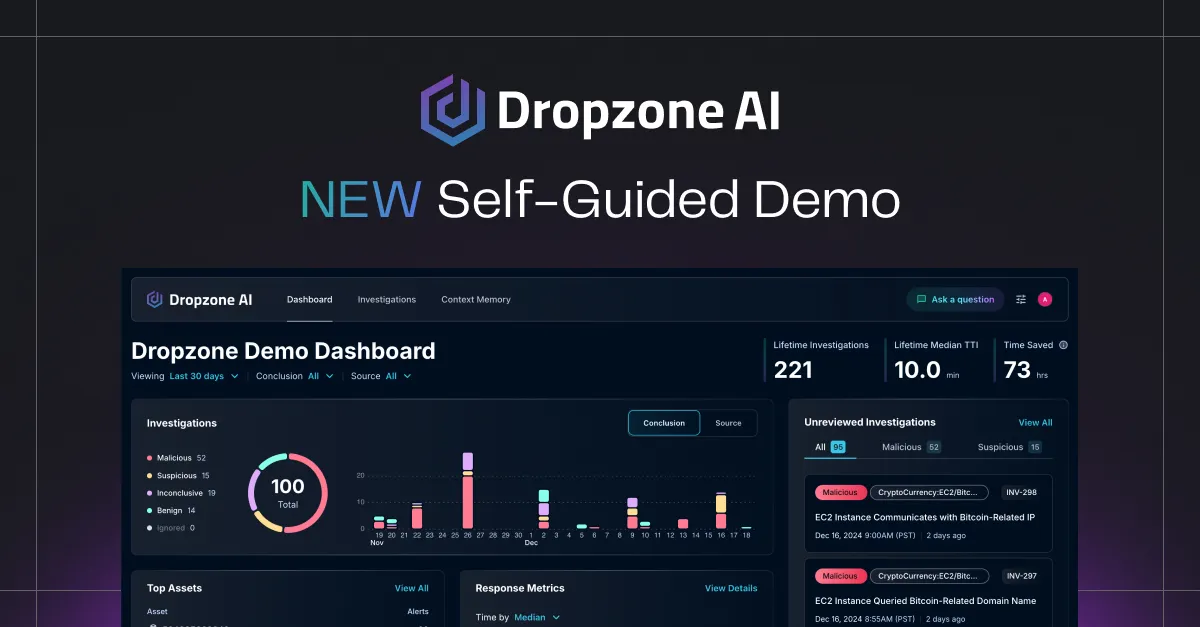

For teams evaluating how AI agents fit into existing SOC workflows, Dropzone AI offers a self-guided demo to see investigations in action.
