You just spent four days walking the RSAC floor. Every third booth said "Agentic SOC." With 50+ agentic SOC vendors competing for your attention, the demos started blurring together by Wednesday.

You're not alone. Constellation Research observed that while security leaders want faster triage, stronger investigations, and less swivel-chair work, "vendor interpretations differ significantly." Some mean AI-assisted workflows. Others mean fully autonomous agents. A few mean SOAR playbooks with a fresh coat of paint.

The gap between interest and adoption tells the story. Most enterprise security teams are experimenting with AI agents, but very few have moved them into live production. What's holding them back isn't ambition or budget. It's trust.

Before RSAC, former Gartner analyst Gorka Sadowski posted a challenge to buyers: "Brace for the AI-washing onslaught. Come armed with questions. No more 'trust us, bro.'"

We took that seriously. These 15 questions come from what buyers asked at our booth, what security leaders posted on LinkedIn, and what prospects consistently raise in discovery calls. We're answering every one of them here, including what we can't do, because we think that's what the market needs right now.

What Does "Agentic SOC" Actually Mean?

An agentic SOC is a security operations model where specialized AI agents handle detection and response tasks autonomously. It isn't a product you buy from one vendor. It's a way of structuring how work gets done in the SOC.

The confusion at RSAC was predictable. Google, CrowdStrike, Arctic Wolf, Splunk, and dozens of startups all used the term. Each meant something different.

Here's a simple way to sort the noise. Vendor offerings fall somewhere on this spectrum:

- AI-assisted: A human drives the investigation. AI suggests next steps or surfaces relevant data.

- Semi-autonomous: AI investigates independently, but a human reviews and approves before action is taken.

- Fully autonomous: AI agents investigate, act, and collaborate with other agents without a human initiating each step.
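One way to make the spectrum concrete is to reduce it to two yes/no observations you can make during a demo. The sketch below is illustrative only: `AutonomyLevel` and `classify` are hypothetical names made up for this post, not part of any vendor's product or a formal taxonomy.

```python
from enum import Enum


class AutonomyLevel(Enum):
    AI_ASSISTED = "ai-assisted"
    SEMI_AUTONOMOUS = "semi-autonomous"
    FULLY_AUTONOMOUS = "fully-autonomous"


def classify(ai_investigates_alone: bool, acts_without_approval: bool) -> AutonomyLevel:
    """Place an offering on the spectrum from two demo observations (hypothetical helper)."""
    if not ai_investigates_alone:
        # A human drives the investigation; the AI only suggests or surfaces data.
        return AutonomyLevel.AI_ASSISTED
    if not acts_without_approval:
        # The AI investigates on its own, but a human approves before action.
        return AutonomyLevel.SEMI_AUTONOMOUS
    # The AI investigates and acts without a human initiating each step.
    return AutonomyLevel.FULLY_AUTONOMOUS
```

If a vendor can't give you a clear yes or no on both questions, that tells you something too.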

When you're evaluating vendors, listen for whether they describe a tool you configure or agents that do the work. That distinction changes everything about deployment, maintenance, and long-term value.

How Should You Evaluate Agentic SOC Vendors?

The 15 questions below come from three places: what buyers asked at RSAC, what security practitioners like Gorka Sadowski (security analyst and advisor) and Valeriu-Christian Miron (security engineer) published as evaluation frameworks, and what prospects consistently ask us in discovery calls.

For each question, we'll share what to ask, what good answers sound like, and how Dropzone AI answers it.

Trust and Transparency

1. How can I verify what the AI is doing?

Constellation Research found "little appetite for blind trust" among security leaders at RSAC. They're right. If you can't inspect every step an AI agent takes, you can't audit it, you can't coach it, and you can't explain its decisions to your board.

What good looks like: A full audit trail of every query executed, every reasoning step, and every decision made. Explicit attribution when human coaching influences the output.

Red flag: "Trust us" without inspection capabilities. Any vendor that can't show you exactly what happened during an investigation is asking you to accept a black box.

Dropzone's answer: Glass box transparency. Every tool queried, every finding generated, every reasoning chain is visible and auditable. When a coaching directive influences an investigation, the system attributes it explicitly: "My manager told me to do this, and here's exactly what they said." You don't have to trust us. You can verify us.
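To picture what an inspectable audit trail might contain, here is a minimal sketch of a record that stores the tool, the query, the reasoning, and any coaching directive behind each step. The class and field names (`AuditStep`, `Investigation`, `coaching_directive`) are assumptions for illustration, not Dropzone's actual schema, and the sample queries are invented.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AuditStep:
    tool: str       # which tool was queried, e.g. "siem"
    query: str      # the exact query that was executed
    reasoning: str  # why the agent took this step
    coaching_directive: Optional[str] = None  # set when human coaching shaped the step


@dataclass
class Investigation:
    alert_id: str
    steps: List[AuditStep] = field(default_factory=list)

    def record(self, step: AuditStep) -> None:
        self.steps.append(step)

    def coached_steps(self) -> List[AuditStep]:
        """Steps that were explicitly influenced by a human coaching directive."""
        return [s for s in self.steps if s.coaching_directive is not None]

    def unexplained_steps(self) -> List[AuditStep]:
        """Steps an auditor could not review because the query or reasoning is empty."""
        return [s for s in self.steps if not s.query.strip() or not s.reasoning.strip()]


# Invented example data, for illustration only.
inv = Investigation("ALERT-1")
inv.record(AuditStep("siem", "search user=alice action=login",
                     "Check recent logins for the flagged user"))
inv.record(AuditStep("edr", "list processes host=wks-7",
                     "Review processes on the affected host",
                     coaching_directive="Always review processes on finance hosts"))
```

When you ask a vendor for an audit trail, the useful test is whether anything like `unexplained_steps` would come back empty for a real investigation.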

2. Does it hallucinate?

This question matters more in security than almost any other domain. A hallucinated finding can trigger unnecessary incident response. A missed finding can leave an attacker in your environment for weeks.

What good looks like: Agents that work through structured analysis against your actual data, not generation from training data. Every finding should be backed by evidence you can inspect.

Red flag: Vague claims about "guardrails" or "responsible AI" without explaining the mechanism. Ask to see the evidence chain behind a specific finding.

Dropzone's answer: Our agents don't hallucinate because they don't generate answers from imagination. They query your tools via API, work through structured analysis (frequency analysis, grouping, filtering), and reason over real log evidence. Every finding includes the evidence rows, the query that produced them, and the reasoning for each filter step. If the system removes 3,800 rows during an investigation, it tells you exactly why.
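The idea of "every filter step comes with a reason" can be sketched in a few lines. This is a simplified illustration under our own assumptions, not Dropzone's implementation: `apply_filters`, the row fields, and the sample log rows are all hypothetical.

```python
from collections import Counter
from typing import Callable, Dict, List, Tuple

Row = Dict[str, str]


def apply_filters(
    rows: List[Row],
    filters: List[Tuple[str, Callable[[Row], bool]]],
) -> Tuple[List[Row], List[Dict[str, object]]]:
    """Apply each (reason, keep) filter in order and log how many rows it removed."""
    log: List[Dict[str, object]] = []
    for reason, keep in filters:
        kept = [r for r in rows if keep(r)]
        log.append({"reason": reason, "removed": len(rows) - len(kept), "remaining": len(kept)})
        rows = kept
    return rows, log


# Invented sample log rows, for illustration only.
rows = [
    {"user": "alice", "src_ip": "10.0.0.5", "result": "success"},
    {"user": "alice", "src_ip": "10.0.0.5", "result": "success"},
    {"user": "alice", "src_ip": "203.0.113.9", "result": "failure"},
    {"user": "bob", "src_ip": "10.0.0.8", "result": "success"},
]

# Frequency analysis: how often each source IP appears in the full data set.
ip_counts = Counter(r["src_ip"] for r in rows)

evidence, filter_log = apply_filters(rows, [
    ("Only the flagged user is in scope", lambda r: r["user"] == "alice"),
    ("Source IPs seen more than once are treated as routine", lambda r: ip_counts[r["src_ip"]] == 1),
])
```

Here `evidence` holds the rows that survived, and `filter_log` records the reason and removed-row count for each step, which is the kind of trail you should ask to see behind any specific finding.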

3. How do you handle prompt injection and data security?

What good looks like: Agents that operate through structured tool calls and defined interfaces, not open-ended prompts processing untrusted input. A clear data residency story where your data stays in your environment.

Dropzone's answer: Our agents operate through structured API calls with pre-trained investigation logic. We've undergone AI-specific penetration testing, including prompt injection testing. Your data isn't migrated, normalized, or stored outside your existing environment. Customer data is never used to train models, and LLM providers are contractually restricted from retaining it. Full details are on our Security & Privacy page.
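To show why structured tool calls narrow the prompt injection surface, here is a rough sketch of an allowlist check that sits between an agent and the tools it can reach. It is an assumption-laden illustration, not a description of Dropzone's internals: the tool names, the parameter schema, and `validate_tool_call` are all hypothetical.

```python
from typing import Any, Dict

# Hypothetical allowlist: tool name -> required parameters and their types.
ALLOWED_TOOLS: Dict[str, Dict[str, type]] = {
    "siem_search": {"query": str, "hours_back": int},
    "edr_get_host": {"hostname": str},
}


def validate_tool_call(call: Dict[str, Any]) -> Dict[str, Any]:
    """Reject any call that is not an allowlisted tool with exactly the expected parameters."""
    name = call.get("tool")
    if not isinstance(name, str) or name not in ALLOWED_TOOLS:
        raise ValueError(f"tool not allowed: {name!r}")
    schema = ALLOWED_TOOLS[name]
    params = call.get("params", {})
    if not isinstance(params, dict) or set(params) != set(schema):
        raise ValueError(f"unexpected or missing parameters for {name}")
    for key, expected in schema.items():
        if not isinstance(params[key], expected):
            raise ValueError(f"{key} must be {expected.__name__}")
    return call
```

With a gate like this, text hidden in a log line can still end up inside a `query` string, but it can't add a new tool or smuggle in extra parameters. A check like this is one layer, not a complete defense, so still ask vendors for their penetration test results.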

Real Autonomy vs. Marketing

4. Are there hidden humans behind the AI?

There's a quiet debate in the industry about whether AI SOC products actually need human "safety drivers" reviewing cases behind the scenes. Some vendors staff analysts to handle a percentage of investigations, then market the product as AI-powered.

What good looks like: Ask directly: "Is every investigation fully software-executed, around the clock?" The answer should be unambiguous.

Red flag: Evasive language about "human oversight" or "human-in-the-loop quality assurance." Those phrases can mask a service model hiding inside a software label.

Dropzone's answer: 100% software. No hidden analysts. No outsourced labor. Every investigation runs at the same depth at 3 AM as it does at 3 PM. Zero variance across every shift. If you're buying AI, you should get AI.

5. Is this actually an agent, or a SOAR playbook with better marketing?

SOAR automates predefined playbooks. An agent reasons dynamically: it builds an investigation plan, queries your tools, branches based on what it finds, and adapts as the investigation unfolds. That's a fundamentally different architecture.

What good looks like: Can you change investigation behavior by talking to the system in natural language, or do you need to rewrite a playbook? When your environment changes, does the system adapt through a conversation or require engineering work?

Dropzone's answer: Recursive reasoning, no playbooks, no brittle decision trees. Coachable in natural language with custom investigation strategies and context memory unique to your organization. When your environment changes, you coach the agent in plain English. And it fits directly into your existing SOAR implementation, so you don't rip anything out.

6. How do you measure if an AI SOC solution is mature or just well-marketed?

Valeriu-Christian Miron's ARMM framework gives buyers a vendor-neutral scoring tool. It evaluates 82+ response actions across three axes: Trust, Complexity, and Impact. The key insight is that the same action lands in a different maturity tier depending on your team, your environment, and your risk tolerance. A mature SOC with seasoned engineers can safely automate device isolation. A junior team with no established trust in the AI? Different story entirely.

What good looks like: Verified customer metrics from named organizations, not benchmarks on synthetic data.

Dropzone's answer: 5x faster MTTR (Pipe). 85% reduction in manual alert investigation (Zapier). 300+ production deployments. 160 years of manual analyst work automated.

Integration and Deployment

7. Are you a SIEM? Where do you fit in my stack?

This was one of the most common questions at our booth, and it makes sense. With 50+ vendors using "Agentic SOC," the category boundaries are blurry.

Dropzone's answer: We're not a SIEM. We don't produce alerts. We're not a detection tool. Our AI agents query your existing tools (SIEM, EDR, cloud, identity, email) via API, the same way your human analysts do. There are 90+ native integrations, and the agents fit into your existing SOAR. Most customers rarely open the Dropzone dashboard, because investigation outputs go directly into Jira, ServiceNow, or Splunk. It's another member of the team, not another dashboard.

8. How long does deployment actually take?

What good looks like: Hours, not weeks. No data migration. No log normalization. No professional services engagement required to get started.

Dropzone's answer: Most customers are investigating within hours of starting setup. You connect via API, the agents are pre-trained on your SIEM schema out of the box, and you coach them to your environment in natural language from there. No playbooks to build. No custom development.

9. What happens to my current team?

Every vendor gets this question. Many answer it poorly by positioning AI as a headcount reducer. That's the wrong framing.

What good looks like: Does the vendor talk about elevating your team or replacing them? The answer reveals whether they've actually worked with SOC teams or are selling to finance.

Dropzone's answer: Your analysts don't lose their jobs. They lose the work they hate: Tier 1 triage, manual log correlation, and repetitive investigation. Your L1s operate at L2 and L3 levels. Your hunters focus on hypothesis development and strategic response. Your SOC manager directs the AI: what to investigate, what to hunt, what to contain. Your team provides the strategy and oversight. AI agents execute at machine scale.

How It Actually Works

10. How are you actually doing this?

What good looks like: Can the vendor explain the architecture without hand-waving? Are they using pre-trained agents specialized for security, or asking you to build your own on top of a general framework?

Dropzone's answer: AI agents combine LLM reasoning with structured analysis tools (frequency analysis, grouping, filtering) and a Cyber Reasoning Core that encodes deep security domain knowledge plus your company context. Agents are pre-trained on 90+ security tools out of the box. This isn't a "build your own agent" framework like LangChain or CrewAI. You're deploying agents that already know the job.

11. What metrics prove SOC efficiency improvements?

This was Gorka Sadowski's #1 question for AI SOC vendors at RSAC, and for good reason. Claims without verified data aren't claims. They're marketing.

What good looks like: Customer-sourced, verified metrics with named references. Not benchmarks on synthetic data or self-reported improvements.

Dropzone's answer:

- 5x faster MTTR (Indiana Farm Bureau / Pipe case study)

- 85% reduction in manual alert investigation (Zapier / Assala Energy)

- Investigations completed in ~10 minutes per alert

- 160 years of manual work automated across 300+ production deployments

- 10-20 hours of threat hunting compressed to ~1 hour (AI Threat Hunter, shipping Summer 2026)

Analyst recognition: Gartner Cool Vendor for the Modern SOC, and a named sample vendor in the 2025 Hype Cycle for Security Operations.

12. How does answer quality hold up as models change?

What good looks like: Structured investigation workflows that are less sensitive to underlying model changes. Continuous environment learning. Clear attribution so you can trace why a decision was made.

Dropzone's answer: Two things. First, our agents continuously learn your environment through investigations, feedback, and context memory that builds over time. When your environment changes, you coach the agent in plain English. Second, our Cyber Reasoning Core encodes deep security domain knowledge separately from the underlying models. Agents follow structured analysis workflows against real data, which makes output quality less sensitive to model changes than pure generation systems. And everything is attributed: if a coaching directive influenced a decision, you'll see exactly which one and why.

What Sets You Apart?

13. How do you stand out from the other 50+ vendors?

Here's an easy way to test any vendor. We used these five questions at RSAC, and they cut through the noise fast:

- Do your agents actually work together, or is it one agent doing one thing?

- Can you see every step of every investigation?

- Can you coach it in plain English?

- Does it hunt for threats proactively, or does it only respond to alerts?

- Does it work inside your existing tools, or does it add another dashboard?

Dropzone's answer: Yes to all five, with one caveat on timing. AI SOC Analyst, AI Threat Hunter, and AI Threat Intel Analyst collaborate autonomously: intelligence triggers hunting, hunting triggers investigation, no human connecting the steps. Every investigation is a glass box. Agents are coachable in natural language. AI Threat Hunter runs proactive, hypothesis-driven hunts. Outputs go to Jira, ServiceNow, Splunk, or wherever your team already works. The caveat: AI SOC Analyst is live today, while AI Threat Hunter and AI Threat Intel Analyst ship Summer 2026.

14. What can't you do?

CISOs are used to vendors overselling. Honesty stands out.

Dropzone's honest list:

- We're not a TIP replacement. We work alongside your existing threat intelligence platform and feeds. The goal is to turn intelligence into action faster, not replace the tools you rely on.

- We're not an IOC scanner. We do hypothesis-driven investigation. If your primary need is indicator lookups, that's not our strength.

- AI Threat Hunter and AI Threat Intel Analyst ship Summer 2026. They're not GA today. AI SOC Analyst is live now at 300+ organizations, and early access for the new agents is open.

- We're not MDR. We're 100% software. If you want a managed service where someone else owns the process, that's a different model.

Each of those "nots" actually tells you something about what we are. That's deliberate.

15. Why should I start now instead of waiting?

Every AI agent deployment compounds over time. Context memory builds. Investigation strategies refine. Coaching makes the system more precise the longer it's deployed. And as new agents ship, they inherit the context that existing agents have already built.

Organizations building their AI agent team now aren't just solving today's problems. They're creating a compounding advantage that grows with every investigation, every hunt, and every agent that joins the team.

How to Use This Guide

Take these 15 questions to every vendor conversation. Compare answers side-by-side. Look for specificity over generality. If a vendor can't name customers, show you an audit trail, or tell you what they can't do, that's your signal.

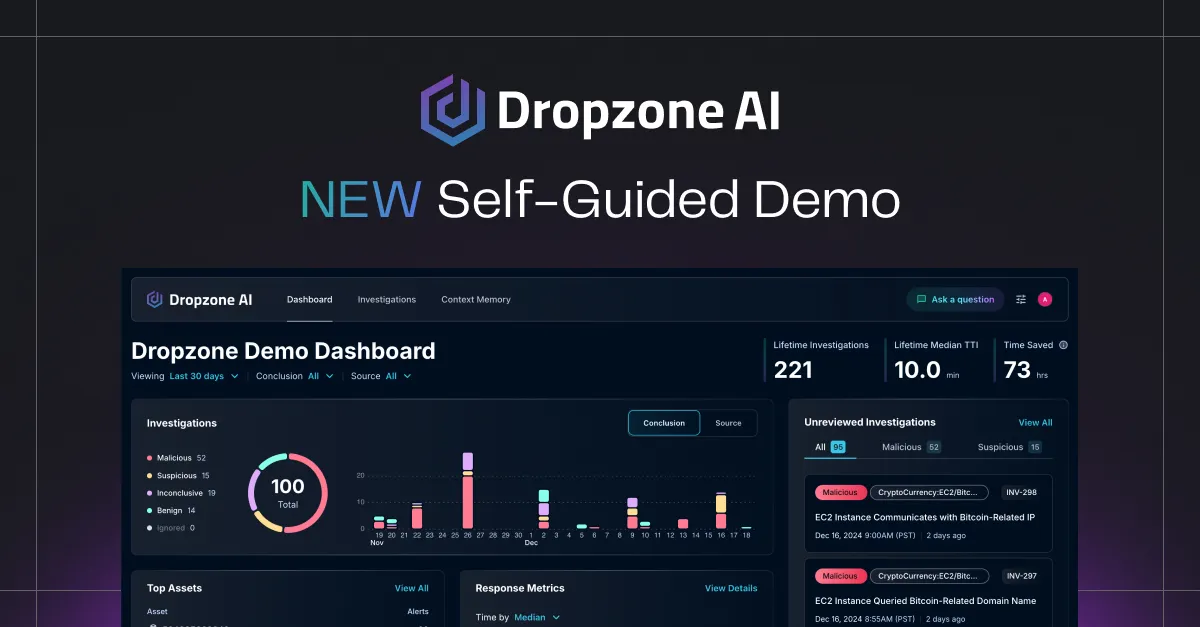

We answered every one of these publicly because we believe transparency is the best differentiator in a market this noisy. If you want to go deeper, we've written a detailed evaluation framework for AI SOC analysts and offer a self-guided demo so you can see the glass box for yourself.
