The Rise of Agentic AI in Cybersecurity: What SOC Teams Need to Know in 2026

Threat actors are already using AI to generate convincing phishing at scale, adapt malware to evade detection, and compress the time between initial access and impact. That side of the AI arms race is well underway.

The defenders' side? Most security operations center (SOC) teams are still running Security Information and Event Management (SIEM) correlation rules, Security Orchestration, Automation, and Response (SOAR) playbooks, and human analysts who can only get through so many alerts per shift. The tooling wasn't built for what's coming at them now.

Agentic AI in cybersecurity changes that equation. Instead of tools that detect and then wait for a human to investigate, agentic AI systems pick up alerts on their own, work through the evidence across your entire stack, and act on what they find without a human directing each step.

This isn't a future scenario. Organizations are running agentic AI in production SOCs today. Here's what's driving the shift, what it looks like on the ground, and what early deployments are showing.

Why Is Agentic AI on the Rise in Cybersecurity?

The short answer: SOC teams are caught between faster attacks, more alerts, and automation that can't keep up. All three pressures hit at once.

Threat actors adopted AI before defenders did.

They didn't wait. AI-generated phishing campaigns that used to take weeks to build now launch in hours. Malware mutates faster than signature-based tools can track. The gap between initial access and full breach is shrinking with every generation of attack tooling. SOC teams running human-speed investigation workflows are falling further behind.

Alert volume has outpaced human investigation capacity.

The numbers tell the story:

- 40% of security alerts are never investigated (SACR 2025 AI SOC Market Landscape), not from lack of effort, but because volume has outpaced capacity

- 73% of security teams cite excessive false positives as their top detection challenge (SANS 2025 Detection and Response Survey), meaning analysts spend more time ruling things out than acting on real threats

- Enterprise SIEMs have detection rules for only 21% of MITRE ATT&CK techniques despite ingesting the telemetry to cover 90% or more (CardinalOps 2025 research via Help Net Security), a gap that's structural, not a configuration problem

You can't hire your way out of a problem that scales faster than your team.

Earlier automation reached its ceiling.

SOAR was supposed to solve this. It helped, but not enough. SOAR needs a playbook for every scenario, and threat actors don't follow playbooks. When an attacker uses a novel technique that no existing workflow anticipated, SOAR can't adapt. Maintaining thousands of playbooks has become a full-time job in itself, and the rule-based model can't reason through situations it hasn't seen before.

Agentic AI addresses all three:

- Investigates alerts the moment they fire

- Reasons through unfamiliar situations without pre-written playbooks

- Operates continuously at machine speed

How Is Agentic AI Different from Traditional AI and Automation?

"Agentic AI" is everywhere right now. If you're trying to figure out what actually changes for your SOC, here's where the lines fall.

Three distinctions matter most:

Agentic vs. generative AI. Generative AI gives you a summary, a draft response, maybe a detection rule suggestion. Agentic AI doesn't stop at text. It queries your SIEM, pulls threat intel, correlates what it finds across tools, and hands you a finished investigation. The difference is output vs. action.

Agentic vs. automation. SOAR runs what you told it to run. Agentic AI figures out what to do next on its own. It applies security domain knowledge, queries the relevant tools, and builds an evidence chain even when no playbook exists for what it's seeing.

Agentic vs. human-in-the-loop AI. Some AI security products still route every case through a human reviewer before closing it out. That means your coverage scales with analyst availability, not machine capacity. If your team is in a single time zone or short-staffed on weekends, you have gaps. Agentic AI removes that constraint.
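To make the playbook-versus-agent contrast concrete, here is a toy Python sketch. It does not reflect how any particular SOAR or AI SOC product is built: the playbook table, tool names, and the `decide` callable are hypothetical, and in a real agentic system the decision step would be model-driven reasoning rather than the hand-written `toy_decide` rule used here. The point is the control flow. A playbook engine can only dispatch alert types it already has a script for, while an agent loop chooses each next query from the evidence gathered so far.

```python
from typing import Callable, Optional

# Hypothetical playbook table: a SOAR-style engine only knows these alert types.
PLAYBOOKS: dict[str, list[str]] = {
    "phishing": ["query_email_gateway", "detonate_url", "check_user_clicks"],
    "malware": ["query_edr", "lookup_hash"],
}


def run_playbook(alert_type: str) -> Optional[list[str]]:
    """Fixed dispatch: returns the scripted steps, or None if no playbook exists."""
    return PLAYBOOKS.get(alert_type)


def run_agent_loop(
    decide: Callable[[dict[str, str]], Optional[str]],
    tools: dict[str, Callable[[], str]],
    max_steps: int = 10,
) -> dict[str, str]:
    """Evidence-driven loop: `decide` picks the next tool from what is known so far.

    Stops when `decide` returns None, names an unknown tool, or the step budget
    runs out. In a real agentic system `decide` would be model-driven reasoning.
    """
    evidence: dict[str, str] = {}
    for _ in range(max_steps):
        step = decide(evidence)
        if step is None or step not in tools:
            break
        evidence[step] = tools[step]()
    return evidence


# Hypothetical stub tools returning canned findings (no real integrations).
tools = {
    "query_identity": lambda: "login from new country",
    "query_edr": lambda: "no process anomalies",
}


def toy_decide(evidence: dict[str, str]) -> Optional[str]:
    """Stand-in for agent reasoning: the next step depends on the last finding."""
    if "query_identity" not in evidence:
        return "query_identity"
    if "new country" in evidence["query_identity"] and "query_edr" not in evidence:
        return "query_edr"
    return None


print(run_playbook("impossible_travel"))  # prints None: no playbook for this type
print(run_agent_loop(toy_decide, tools))
```

The novel `impossible_travel` alert gets no playbook at all, while the loop still gathers evidence because each next step depends on what the previous step returned.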

How Do AI Agents Work Inside a SOC?

Here's what it looks like day to day. An alert fires. An AI agent picks it up and queries the tools already in your stack: SIEM, endpoint detection and response (EDR), cloud, identity, and threat intel. It builds an evidence set, checks its findings against known threat actor techniques, and tells you what it found and why. You can see every tool it queried, every data point it examined, and every step in its reasoning. Nothing is hidden.

How do you know if an AI agent's findings are trustworthy?

That transparency matters. If you can't see how an AI agent reached its conclusion, you can't trust it. If you can't trust it, you'll re-investigate everything it touches, and you're back to square one. The whole point is that when an agent says "this is a false positive," you can verify that in thirty seconds instead of spending twenty minutes reaching the same conclusion yourself.
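The transparency described above comes down to the agent producing an auditable record rather than a bare label. Below is a minimal Python sketch of such a record. The field names, the `svc-backup` account, and the tool findings are hypothetical stubs; no real SIEM, EDR, or identity API is called. `T1021` is the MITRE ATT&CK ID for Remote Services and is used only as an example mapping.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    tool: str     # which integration was queried (e.g. "SIEM", "EDR")
    query: str    # what was asked of it
    finding: str  # what came back, in plain language


@dataclass
class Investigation:
    alert_id: str
    steps: list[Step] = field(default_factory=list)
    techniques: list[str] = field(default_factory=list)  # MITRE ATT&CK IDs
    verdict: str = "undetermined"
    rationale: str = ""

    def record(self, tool: str, query: str, finding: str) -> None:
        """Append one investigative step to the evidence trail."""
        self.steps.append(Step(tool, query, finding))

    def report(self) -> str:
        """Render every tool queried and every finding so a reviewer can verify the verdict."""
        lines = [f"Alert {self.alert_id}: {self.verdict}"]
        lines += [f"  [{s.tool}] {s.query} -> {s.finding}" for s in self.steps]
        if self.techniques:
            lines.append("  ATT&CK: " + ", ".join(self.techniques))
        lines.append(f"  Why: {self.rationale}")
        return "\n".join(lines)


# Hypothetical data: stubbed findings, not output from real tools.
inv = Investigation(alert_id="A-1001")
inv.record("SIEM", "logons for svc-backup in last 24h", "RDP logons to 3 new hosts")
inv.record("Identity", "is svc-backup allowed interactive logon?", "no")
inv.record("EDR", "process tree on first new host", "no malicious binaries")
inv.techniques.append("T1021")  # Remote Services (lateral movement)
inv.verdict = "true positive: escalate"
inv.rationale = "service account used interactively across new hosts"
print(inv.report())
```

A reviewer reading the rendered report can check each step against the source tool instead of repeating the investigation.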

What happens when multiple AI agents collaborate?

Where it gets interesting is when agents work together. A single agent investigating alerts is useful. A team of agents that share context and task each other is a different thing entirely. An alert investigation agent surfaces a suspicious lateral movement pattern. It hands that finding to a threat hunting agent, which pursues the hypothesis across the full environment. Without agent collaboration, that kind of hand-off requires an analyst to notice the pattern, context-switch, and start a separate investigation manually.
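The hand-off described above can be modeled as agents posting tasks to a shared queue, with each task carrying the context gathered so far. This Python sketch is a simplification under that assumption: the agent functions, task kinds, and message strings are hypothetical, and real agents would run investigations rather than return canned strings.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Task:
    kind: str      # which agent should pick this up
    context: dict  # shared context carried across the hand-off


def alert_agent(task: Task, queue: deque) -> str:
    """Hypothetical alert investigation agent: may spawn a hunting task."""
    if task.context.get("pattern") == "lateral_movement":
        # Hand the finding to the hunting agent along with the context already gathered.
        queue.append(Task("hunt", {**task.context, "hypothesis": "attacker moving east-west"}))
        return f"alert {task.context['alert_id']}: escalated to hunt"
    return f"alert {task.context['alert_id']}: closed"


def hunt_agent(task: Task, queue: deque) -> str:
    """Hypothetical threat hunting agent: pursues the hypothesis environment-wide."""
    return f"hunt: testing '{task.context['hypothesis']}' across all hosts (from {task.context['alert_id']})"


AGENTS = {"alert": alert_agent, "hunt": hunt_agent}


def run(queue: deque) -> list[str]:
    """Process tasks until the queue is empty; agents may enqueue follow-up tasks."""
    log = []
    while queue:
        task = queue.popleft()
        log.append(AGENTS[task.kind](task, queue))
    return log


for line in run(deque([Task("alert", {"alert_id": "A-1001", "pattern": "lateral_movement"})])):
    print(line)
```

Because the hunting task inherits the alert's context (`alert_id`, `pattern`), the hunting agent starts from the evidence rather than from scratch, which is the step an analyst would otherwise perform by hand.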

For analysts, that changes the day-to-day work. The next two sections cover what it looks like in practice.

How Does Agentic AI Reduce Alert Fatigue?

Every SOC analyst knows the feeling. You come in Monday morning to a queue that's grown by thousands over the weekend. You start triaging, but for every alert you close, three more come in. Low-priority alerts pile up untouched. Eventually, you stop looking at some categories entirely because there's no time.

That's alert fatigue, and it's not a discipline problem. There are simply more alerts than any team can investigate manually.

AI agents break that cycle. They pick up every alert the moment it fires, run a full investigation, pull context from across the stack, and deliver a verdict. False positives get closed with a full evidence trail, not just a severity label. Real threats show up in front of analysts already investigated and ready for a decision.

The difference for analysts isn't just a shorter queue. It's knowing that nothing slipped through while you were working something else. Every alert that reaches you has already been through a complete investigation.

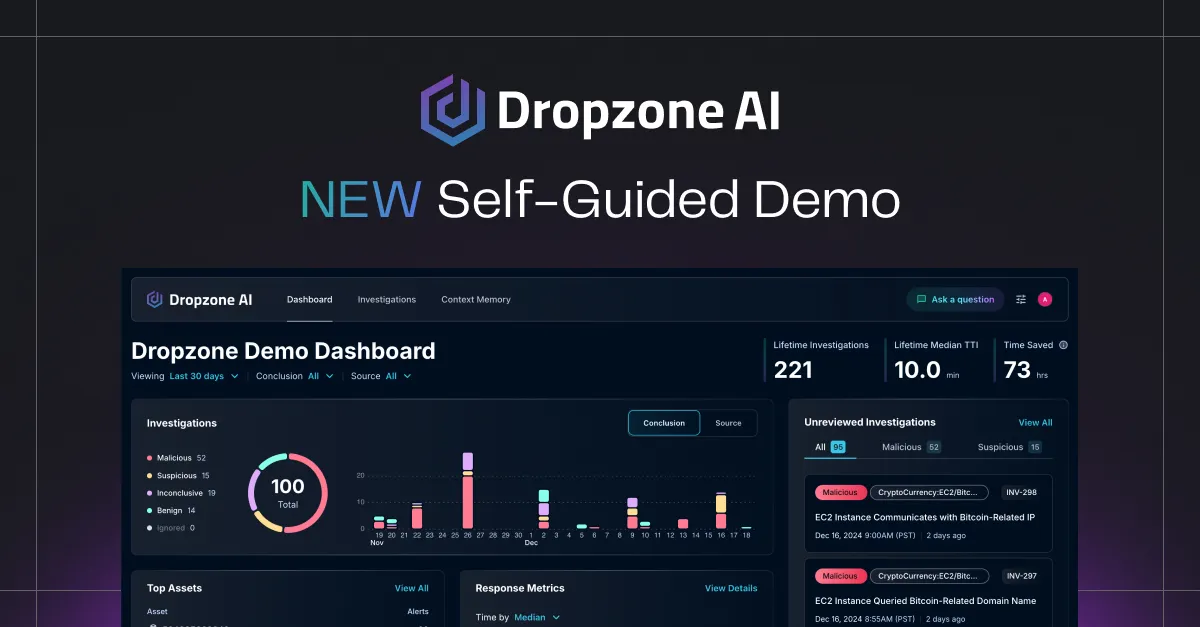

Zapier's security team saw an 85% reduction in manual alert investigation after deploying Dropzone AI. That's not a pilot metric. That's production, with real alerts, at real volume.

Will Agentic AI Replace SOC Analysts?

No. Agentic AI augments analysts. It doesn't replace them.

What changes is the nature of the work. Alert triage, evidence collection, and routine threat hunting are largely automated. What remains is the strategic layer: forming threat hypotheses, directing AI agents, interpreting complex threat actor behavior, and making decisions that need organizational context and judgment.

What expands is analyst impact. The constraint shifts from "how many alerts can I get through this shift" to "what am I doing with the findings my AI agents surface." Analysts who learn to direct AI agents and apply their findings to broader strategy become more effective, not less relevant.

What Are Organizations Seeing from Agentic AI Deployments?

Numbers from production, not projections:

- 5x faster Mean Time to Respond (MTTR), from detection to containment

- 85% reduction in manual alert investigation, with analysts redirected to strategic work

- 90% faster escalated investigations, because cases arrive at analysts already worked up

Think about what 30,000 alerts per month looks like. That's the volume ECS, a top-5 managed security service provider (MSSP) in North America, routes through Dropzone AI. No team is triaging that manually. At enterprise scale, agentic AI isn't supplementing human investigation. It's the only way to get full coverage.
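To put 30,000 alerts a month in staffing terms, here is a back-of-the-envelope calculation. It assumes 20 minutes of manual work per alert (the figure used earlier in this article for ruling out a false positive by hand) and roughly 160 working hours per analyst per month. Both are illustrative assumptions, not ECS data.

```python
ALERTS_PER_MONTH = 30_000      # volume cited for ECS above
MINUTES_PER_ALERT = 20         # assumption: manual triage time per alert
ANALYST_HOURS_PER_MONTH = 160  # assumption: ~40 h/week, ignoring PTO and meetings

manual_hours = ALERTS_PER_MONTH * MINUTES_PER_ALERT / 60
analysts_needed = manual_hours / ANALYST_HOURS_PER_MONTH
print(f"{manual_hours:,.0f} analyst-hours/month, about {analysts_needed:.1f} full-time analysts")
```

Under these assumptions that is 10,000 analyst-hours a month, or about 62.5 full-time analysts doing nothing but triage. Even at half the per-alert time, it would still take more than 31.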

The analyst community is paying attention too. Gartner named Dropzone AI a Cool Vendor for the Modern SOC and included it as a sample vendor in the 2025 Hype Cycle for Security Operations. When Gartner starts tracking a category, it's usually past the "is this real?" phase.

Key Takeaways

- Agentic AI is rising in cybersecurity because threat actors adopted AI first, alert volumes exceeded human investigation capacity, and earlier automation proved too brittle for novel threats.

- Agentic AI doesn't just detect or summarize. It investigates, decides, and acts. That's a different category from automation (which runs scripts), generative AI (which produces content), and human-in-the-loop AI (which creates bottlenecks).

- A team of collaborating AI agents is more than an efficiency tool. Agents share context, task each other, and compound capability in ways no single tool can match.

- Alert fatigue is a structural problem that needs a structural fix: every alert investigated continuously, 24/7, without backlog or shift-based coverage gaps.

- Agentic AI doesn't replace analysts. It automates the volume work so analysts spend their time on the problems that need human judgment.

- Early production deployments show dramatically faster response times, reduced manual investigation workloads, and accelerated escalations across organizations of all sizes.
