Introduction: The Asymmetry Problem

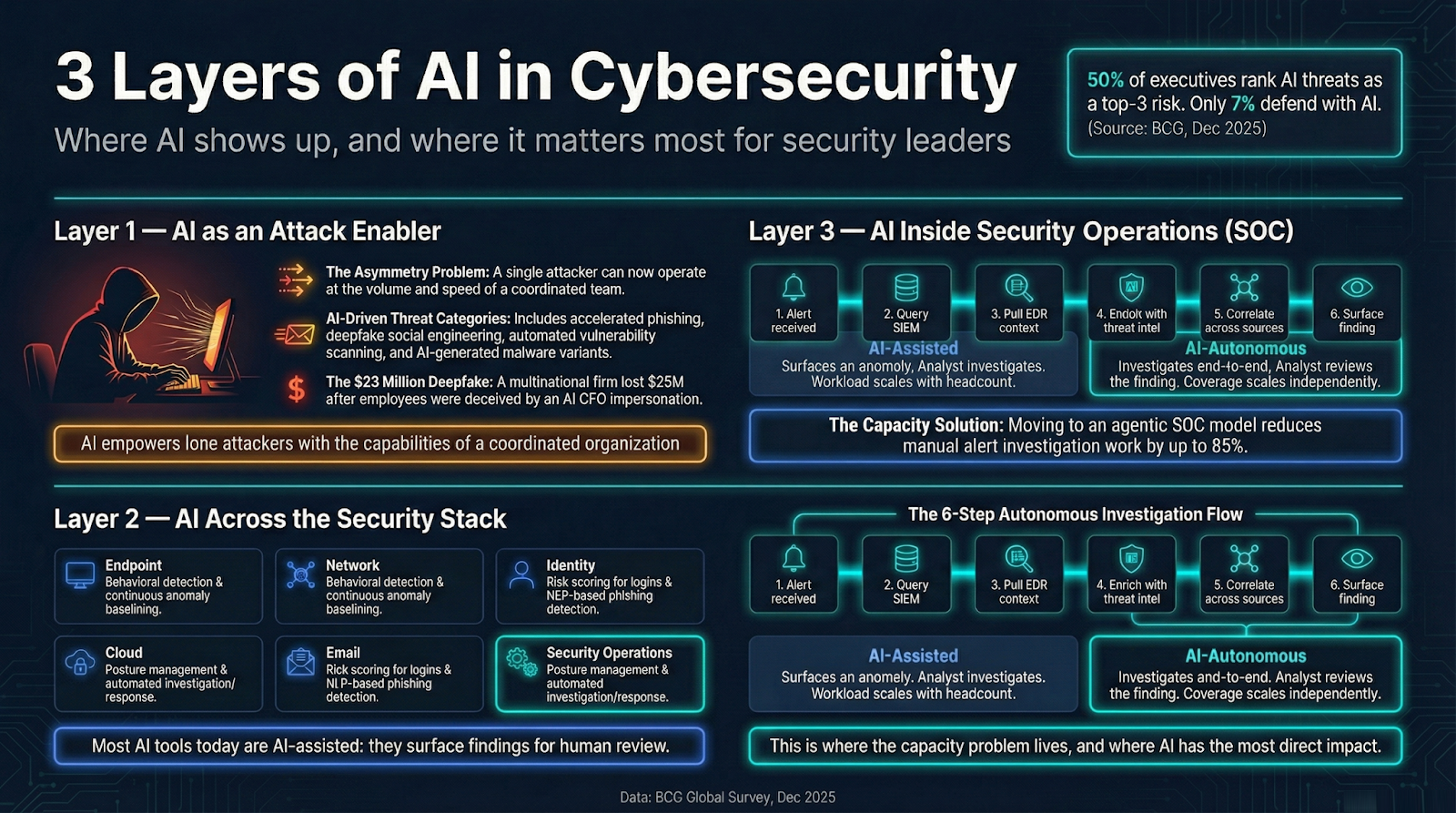

Half of executives now rank AI-enabled threats as a top-three organizational risk. Yet only 7% of organizations are defending with AI tools, according to a December 2025 BCG global survey of 500 senior leaders.

That gap is not a technology availability problem. The tools exist. The problem is understanding: most security leaders haven't had a clear map of what AI in cybersecurity actually means, where it shows up, and where it matters most for their specific decisions.

This primer covers the landscape of AI in cybersecurity across three layers every security leader needs to understand before making AI investment decisions. Layer one is the threat side: how attackers are using AI against you. Layer two is the stack: where AI shows up across your existing security controls. Layer three is operations: where the capacity problem lives and where AI has the most direct impact on what your team can actually do at scale.

How Is AI Being Used to Attack Organizations?

AI is not a theoretical future threat. Attackers are using it operationally today, and the impact is qualitative, not just quantitative. The attacks themselves are getting smarter, faster, and harder to catch with defenses designed for an earlier era.

Four categories define the current threat picture:

- AI-accelerated phishing at scale. What once required manual research to craft a credible spear phishing lure now takes seconds. AI generates personalized variants at volume, learns which lures generate clicks, and iterates faster than any human campaign could.

- Deepfake social engineering. Voice cloning, synthetic video, and AI-generated text have made identity-based fraud viable at scale. Executives are impersonated on live video calls. A multinational engineering firm lost $25 million after employees were deceived by an AI-generated deepfake video impersonating the CFO, per the BCG research.

- Automated vulnerability scanning. AI-enabled scanners probe targets continuously, testing attack surfaces at a speed no human attacker team can match. The attack surface doesn't sleep; neither does the tooling.

- AI-generated malware variants. Attackers use AI to generate new malware variants that evade signature-based detection. The volume and novelty of these variants make static defenses less effective over time.

What connects all four: a single attacker can now operate at the volume of a coordinated team. The math changed.

How Do AI-Enabled Attacks Differ from Traditional Attacks?

Speed of personalization and iteration. That's the short answer.

Traditional phishing required manual effort: research the target, craft the lure, send, wait. AI compresses that cycle to near-zero. Thousands of personalized variants can be generated simultaneously, each tailored to a specific target's role, company, and context. There's no longer a meaningful bottleneck between "deciding to attack" and "sending a credible attack."

The same logic applies to vulnerability scanning. AI-enabled tools probe, test, and adapt based on what they find. An attacker's toolkit can now learn from each probe and adjust its approach in near real-time.

Two implications follow for defenders. First, static defenses designed for known patterns fail against attacks that adapt. Second, attribution becomes harder: AI-generated content is more difficult to fingerprint because it doesn't carry the stylistic consistency of human-authored attacks.

The response required isn't just "better detection of the same attacks." It's detection and response that operates at a comparable speed.

Where Does AI Show Up Across the Security Stack?

AI isn't a single product category. It appears at every layer of the security stack, and most organizations already have some form of AI-assisted tooling in place without necessarily recognizing it as such.

Here's how it maps across the major stack layers:

- Endpoint (behavioral detection). Endpoint detection and response (EDR) tools have moved well beyond signature matching. Modern EDR uses behavioral models to detect process injection, lateral movement, and other attack techniques based on what processes do, not what they're called.

- Network (anomaly detection). Network detection and response (NDR) tools build baseline models of normal traffic and flag deviations. AI enables continuous baselining at a scale that rules-based systems can't sustain.

- Identity (risk scoring). Identity and access management platforms use AI to calculate risk scores based on login behavior, device posture, and contextual signals. Step-up authentication triggers when the score crosses a threshold.

- Cloud (posture management). Cloud security posture management (CSPM) tools use AI to identify misconfigurations and policy violations across cloud environments where the number of resources is too high for manual review.

- Email (phishing detection). Email security platforms use natural language models to score messages for phishing indicators, including contextual signals that traditional keyword filtering misses.

- Security operations (investigation and response). This is where the most significant operational change is happening, and where the rest of this article focuses.

The common thread across all these categories is that AI is doing work that used to require human judgment on every event: classifying, scoring, prioritizing. The difference between categories is what happens next.
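To make the scoring pattern concrete, here is a minimal sketch of the identity example above: signals are combined into a risk score, and step-up authentication triggers when the score crosses a threshold. The signal names, weights, and threshold are assumptions invented for illustration. Production platforms typically use learned models rather than a fixed weight table.

```python
from dataclasses import dataclass

# Hypothetical weights and threshold, chosen only for illustration.
WEIGHTS = {"new_device": 0.4, "unusual_location": 0.35, "off_hours": 0.25}
STEP_UP_THRESHOLD = 0.5


@dataclass
class LoginEvent:
    user: str
    new_device: bool
    unusual_location: bool
    off_hours: bool


def risk_score(event: LoginEvent) -> float:
    """Sum the weights of every signal present on the login (0.0 to 1.0)."""
    return sum(w for name, w in WEIGHTS.items() if getattr(event, name))


def requires_step_up(event: LoginEvent) -> bool:
    """Trigger step-up authentication once the score reaches the threshold."""
    return risk_score(event) >= STEP_UP_THRESHOLD


routine = LoginEvent("alice", new_device=False, unusual_location=False, off_hours=True)
risky = LoginEvent("alice", new_device=True, unusual_location=True, off_hours=False)

print(requires_step_up(routine))  # False: only the off-hours signal fired
print(requires_step_up(risky))    # True: new device plus unusual location
```

The same shape applies at other layers: an email filter or network detector produces a score, and a threshold decides whether anything happens next.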

What Is the Difference Between AI-Assisted and AI-Autonomous Security Tools?

AI-assisted tools recommend and surface findings: a human approves or acts on every output. AI-autonomous tools execute actions and escalate findings without requiring human review on each step. Both are legitimate approaches, but they have different implications for what your team can do at scale.

In practice, this is the spectrum:

- AI-assisted: The AI surfaces an anomaly. An analyst investigates. The AI suggests a query. The analyst runs it. The AI flags a risky login. An analyst decides whether to block the session. Useful, but the workload scales with analyst headcount. More alerts mean more analyst time.

- AI-autonomous: The AI investigates the anomaly end-to-end, correlates evidence across data sources, and surfaces a conclusion. The analyst reviews the finding, not every individual step that produced it. The workload doesn't scale with alert volume the same way.

The operational implication: AI-assisted tools improve analyst throughput. AI-autonomous tools change what coverage is possible at a given headcount. That's a different value proposition, and it matters most in security operations, which is where alert volumes are highest and the coverage gap is widest.

How Is AI Transforming Security Operations?

The security operations center (SOC) is where the asymmetry between attack volume and defender capacity is most acute.

Alert volumes have grown beyond what human triage can process. That's not a critique of security teams. It's arithmetic. A well-staffed SOC might process a few hundred alerts per analyst per day, depending on complexity. Modern environments generate thousands. The alerts that don't get touched represent real risk: missed threats, late detection, extended dwell time.
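The arithmetic can be sketched in a few lines. The figures below are illustrative assumptions, not measurements from any real SOC. The point is only that once daily alert volume exceeds analysts multiplied by per-analyst capacity, the remainder goes untouched.

```python
def uninvestigated_alerts(daily_alerts: int, analysts: int, alerts_per_analyst: int) -> int:
    """Alerts left untouched each day once human triage capacity is used up."""
    capacity = analysts * alerts_per_analyst
    return max(0, daily_alerts - capacity)


# Illustrative numbers only (assumptions, not data from the article).
daily_alerts = 5000
analysts = 8
alerts_per_analyst = 300

gap = uninvestigated_alerts(daily_alerts, analysts, alerts_per_analyst)
coverage = 1 - gap / daily_alerts
print(f"capacity={analysts * alerts_per_analyst}, untouched={gap}, coverage={coverage:.0%}")
# capacity=2400, untouched=2600, coverage=48%
```

Under these assumed numbers, closing the gap with headcount alone would mean more than doubling the team.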

AI changes that math. Under the agentic SOC model, AI agents handle front-line investigation work around the clock, without a queue. Every alert gets investigated, regardless of volume or time of day. Analysts focus on what requires human judgment: directing AI agents, interpreting adversary behavior, advancing the security program.

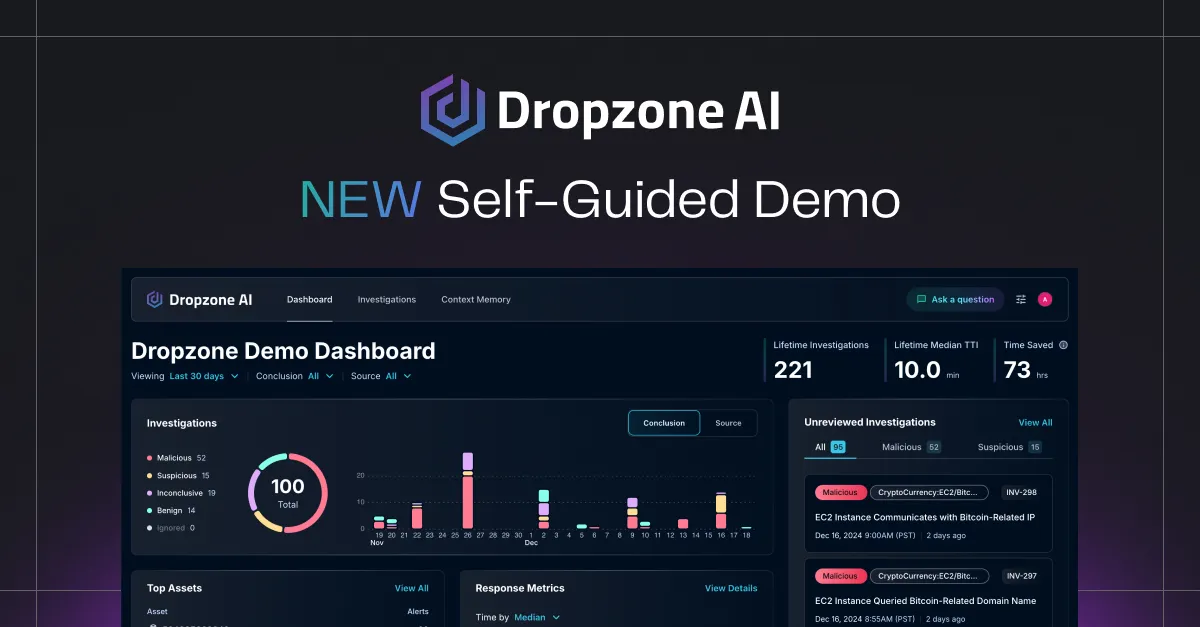

The proof points from production deployments illustrate the gap between theoretical and actual impact. Organizations using Dropzone AI's agents have seen an 85% reduction in manual alert investigation work (Zapier case study) and 5x faster mean time to respond, or MTTR (Indiana Farm Bureau and Pipe case studies). Dropzone AI now has 300+ deployments, holds Gartner recognition as a Cool Vendor for the Modern SOC, and is named as a sample vendor in the 2025 Hype Cycle for Security Operations.

Those are not projections. They are production outcomes from real deployments.

What Does AI Actually Do When an Alert Fires?

An AI agent receives the alert and begins the same investigation sequence a trained analyst would run, but without waiting in a queue, without fatigue, and without the interruption cost of context-switching.

Here is what that looks like step by step:

- Alert received. The AI agent picks it up immediately, regardless of time, workload, or analyst availability.

- Query the SIEM. The agent pulls historical activity associated with the same user, host, or IP address from the security information and event management (SIEM) platform. Context from the last 30, 60, or 90 days surfaces immediately.

- Pull EDR context. The agent retrieves endpoint behavior data: process tree, file activity, parent-child process relationships. This is the "what did the machine actually do" layer.

- Enrich with threat intelligence. Indicators from the alert are matched against known threat data: infrastructure, malware families, and actor tactics, techniques, and procedures (TTPs) mapped to MITRE ATT&CK.

- Correlate across data sources. The agent builds the full evidence picture: does this user's behavior connect to other anomalies in the environment? Is the same infrastructure appearing in multiple alerts?

- Surface a finding. The agent produces a complete investigation: what happened, what evidence was gathered, what the conclusion is, and whether escalation is warranted.

No analyst had to touch it. Every step is logged. If escalation is warranted, the analyst receives a complete investigation, not a raw alert and a blank screen.

This is the operational difference between AI that assists and AI that investigates.
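The sequence above can be sketched as a small pipeline. This is a rough illustration under stated assumptions, not Dropzone AI's implementation. The functions `query_siem`, `pull_edr_context`, and `enrich_with_intel` are hypothetical stubs standing in for real integrations, and correlation is reduced to a single toy rule.

```python
from dataclasses import dataclass, field


@dataclass
class Alert:
    alert_id: str
    user: str
    host: str
    indicators: list[str]


@dataclass
class Finding:
    alert_id: str
    evidence: dict = field(default_factory=dict)
    log: list[str] = field(default_factory=list)
    escalate: bool = False
    conclusion: str = ""


# Hypothetical stubs; a real agent would call SIEM, EDR, and intel APIs here.
def query_siem(alert: Alert) -> list[str]:
    return [f"{alert.user} logged in to {alert.host}"]


def pull_edr_context(alert: Alert) -> dict:
    return {"process_tree": ["explorer.exe", "powershell.exe"]}


KNOWN_BAD = {"203.0.113.7"}  # made-up intel set using a documentation-range IP


def enrich_with_intel(alert: Alert) -> list[str]:
    return [i for i in alert.indicators if i in KNOWN_BAD]


def investigate(alert: Alert) -> Finding:
    """Run each evidence-gathering step, log it, then surface a finding."""
    finding = Finding(alert.alert_id)
    steps = [
        ("siem_history", query_siem),
        ("edr_context", pull_edr_context),
        ("intel_matches", enrich_with_intel),
    ]
    for name, step in steps:
        finding.evidence[name] = step(alert)
        finding.log.append(f"ran {name}")
    # Toy correlation rule (an assumption): escalate on any intel match.
    finding.escalate = bool(finding.evidence["intel_matches"])
    finding.conclusion = "likely malicious" if finding.escalate else "likely benign"
    finding.log.append("surfaced finding")
    return finding


result = investigate(Alert("A-1", "alice", "laptop-7", ["203.0.113.7"]))
print(result.conclusion, result.escalate, result.log)
```

The design choice worth noticing is that the analyst receives the `Finding`, with its evidence and step log, rather than the raw `Alert`.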

How Does AI in the SOC Differ from AI at the Perimeter?

Perimeter AI detects and blocks. SOC AI investigates and concludes. These are different jobs with different performance standards.

Endpoint, network, and email security tools use AI for classification: is this input malicious or benign? The performance metric is accuracy. A good classifier catches the bad traffic and passes the good traffic. Classification is fast, stateless, and doesn't require understanding what happened before or after the event.

SOC AI has a different job. When a SIEM fires an alert, the question isn't "is this bad?" The question is "what happened, who was involved, is this a real threat, and what should we do next?" That requires reasoning across multiple data sources, understanding temporal context, and producing a conclusion with supporting evidence.

This is why general-purpose AI tools don't translate directly into SOC performance. Large language models are good at language tasks. SOC investigation requires security domain knowledge, tool integrations, and the ability to correlate evidence across SIEM, EDR, threat intel, and cloud telemetry. The job is different, and the tooling needs to match the job.

What Should Security Leaders Look for When Evaluating AI Security Tools?

Every security vendor now claims AI. "AI-powered" appears in pitch decks, product sheets, and press releases across the entire market, from legacy SIEM vendors that added a machine learning module to pure-play AI-native platforms. The signal-to-noise ratio is low.

For chief information security officers (CISOs) doing real evaluation, the questions that separate capability from marketing come down to a few specifics: what the AI actually does without a human in the loop, what evidence exists from production deployments, and how much configuration is required before it works.

Before signing anything, get direct answers to these questions:

- What does the AI do without a human reviewing each action? If the vendor describes a workflow where AI flags something and an analyst investigates, that is AI-assisted. Useful, but not AI-autonomous. Know which one you're buying.

- Can you share customer results with real numbers, not projections? MTTR improvement, investigation volume, analyst time saved. Projections and demos are not evidence. Deployed customers with real numbers are.

- What is the onboarding timeline? Weeks of tuning and playbook configuration is a signal that the system depends on pre-programmed rules, not AI reasoning. AI-native platforms that understand your environment from context don't require months of customization before they're useful. Platforms that onboard in hours and adapt through natural language coaching are architecturally different from those that require data normalization and rule libraries before they function.

- Which integrations are native versus requiring custom work? Native integrations with the major SIEM, EDR, and cloud platforms are table stakes. If the integration story is "we have an API, your team can build it," that is configuration overhead you'll carry indefinitely.

- What does the escalation path look like? How the AI hands off to humans tells you more about the architecture than any slide. Does the analyst receive a complete investigation or a raw alert? Is the AI's reasoning visible and auditable?

How Can CISOs Identify AI-Washing in Vendor Claims?

Ask one question: what does your AI do without a human approving each step?

Vendors who can't answer clearly are usually describing AI-assisted tools as AI-autonomous. They may not be doing this deliberately: many vendors genuinely believe that "AI surfaces the alert, human investigates" constitutes AI-driven security. It doesn't, not in the sense that changes coverage at scale.

The follow-up question is equally telling: can you provide production case studies with quantified outcomes? MTTR improvement, investigation volume, analyst time saved. If the answer is a single case study from the last 90 days, with one customer and one metric, that's a pilot, not a product. Real deployments at scale, with consistent outcomes across multiple customers, tell you something about the underlying architecture.

A checklist for the evaluation conversation:

- Ask about autonomous actions specifically. "What does the platform do at 2 a.m. when no analyst is watching?" is a revealing question.

- Ask about onboarding time. Months means playbooks. Days means AI reasoning.

- Ask about native integrations. Which SIEM, EDR, and cloud platforms are built in, not bolted on?

- Ask for the escalation workflow. What does the analyst actually receive when the AI escalates?

- Ask for production references, not prospects. Talking to a customer who's been deployed for six months tells you what the first pitch didn't.

Key Takeaways

The landscape of AI in cybersecurity is not a single category. It spans three distinct layers, and the decisions they require of security leaders are different at each level.

- Layer 1: AI as an attack enabler. Attackers are using AI to personalize threats at scale, automate vulnerability discovery, and generate malware variants that evade static defenses. The attack surface is now adaptive.

- Layer 2: AI across the security stack. AI shows up at every layer: endpoint, network, identity, cloud, email, and operations. Most tools marketed as "AI" are AI-assisted: they surface findings and recommendations for human review.

- Layer 3: AI inside security operations. This is where the capacity problem is most acute and where the distinction between AI-assisted and AI-autonomous tools has the most direct impact on outcomes. AI-autonomous tools change what coverage is possible at a given headcount. AI-assisted tools improve how fast analysts work through the same queue.

- Adopting the agentic SOC model changes the unit economics of detection and response. AI agents investigate alerts end-to-end, freeing analysts to focus on strategy, adversary behavior, and advancing the security program. The result isn't fewer analysts: it's analysts doing higher-value work.

- When evaluating AI security tools, start with one question: what does the AI do without a human in the loop? The answer tells you more than any feature list.

Ready to see what AI-autonomous investigation looks like in production? Request a demo or explore how Dropzone AI's 90+ native integrations connect to your existing stack from day one.
