Introduction

AI SOC analysts demonstrate real value when they operate under genuine alert volume and day-to-day operational pressure, and a few strategies can accelerate that success. The lessons here reflect what Dropzone has observed across more than 300 production deployments, where AI SOC analysts run continuously on live alerts rather than in staged pilots. Rather than focusing on models or features, this article concentrates on the practical factors that determine success: combining the best of SOAR and AI SOC agents, onboarding in deliberate phases, and earning analyst trust through real improvements to how the SOC operates.

Why Use Both SOAR and AI SOC Agents?

Use the Right Tool for the Job

Real environments are often noisy. It’s difficult to tune detections so that they catch real signals without overloading the SOC with false positives.

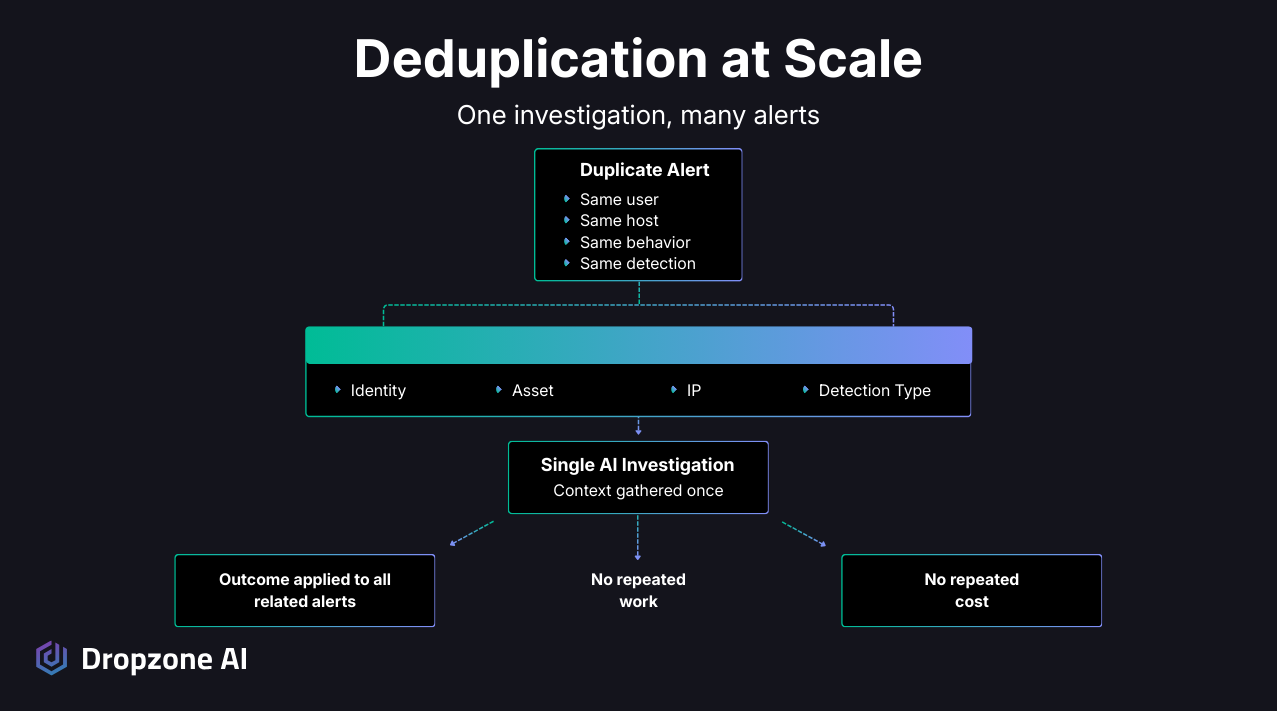

AI SOC analysts can handle high volumes and work multiple alert investigations in parallel, but you should still use alert deduplication and grouping strategies. Dropzone’s AI SOC analyst can also handle deduplication. And if you have SOAR in place with playbooks that auto-close certain types of deterministic alerts, keep using them.

The strategy: Reduce alert volume early to create breathing room and focus the AI on alerts that actually require reasoning.

Suppression, grouping, and deduplication do much of the heavy lifting before AI ever enters the picture. Once repeated alerts tied to the same entities, behaviors, or conditions are consolidated:

- Investigations move faster

- Humans don’t need to spend time weeding out false positives

- Cleaner inputs improve every downstream decision

Why Is SOAR Still Valuable?

Not every alert warrants deep investigation; many detections are deterministic and resolve the same way every time. These are often better handled through existing automation or SOAR, where costs stay flat and outcomes are predictable. Reserving AI SOC analysts for alerts that require context, correlation, and judgment is efficient and puts each tool to the job it suits best.
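The split described above can be sketched as a small routing function. This is a minimal illustration, not Dropzone's implementation: the `Alert` type, the `route` function, and the rule names in `SOAR_PLAYBOOKS` are all hypothetical, and it assumes each alert carries the name of the detection rule that fired.

```python
from dataclasses import dataclass

# Hypothetical rule names: detections that already have a deterministic
# SOAR playbook and resolve the same way every time.
SOAR_PLAYBOOKS = {"known_scanner_ip", "expired_cert_warning"}


@dataclass(frozen=True)
class Alert:
    alert_id: str
    rule: str


def route(alert: Alert) -> str:
    """Send alerts with an existing playbook to SOAR, everything else to AI."""
    return "soar" if alert.rule in SOAR_PLAYBOOKS else "ai"
```

Keeping the playbook list explicit makes it easy to widen or narrow AI coverage one detection at a time.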

Successful deployments tend to expand AI coverage gradually:

- Start with investigation-heavy alerts

- Observe outcomes and validate decision quality

- Widen scope only when value is demonstrated

- Grow coverage based on delivered value, not total alert volume

This pacing approach:

- Keeps spending under control

- Builds confidence in system decisions

- Prevents cost surprises from volume spikes

How Do You Design for Scale and Governance?

Why Is Alert Deduplication Important?

At scale, duplicate alerts are more than an annoyance: they quietly consume analyst time and investigation capacity. When identical alerts tied to the same entities or behaviors are grouped and investigated once, the impact is immediate.

Deduplication can be done either in the SIEM or in the AI SOC analyst system.

The benefits of deduplication at scale become especially noticeable during scans, testing activity, or short bursts of repetitive behavior. Without deduplication, those events can flood a SOC with near-identical work. With it, teams see a single investigation that represents the whole pattern.
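As a rough sketch of the idea, the function below groups alerts that share a detection rule and an entity and arrive close together in time, so that each group could be investigated once. The `RawAlert` type, the `deduplicate` function, and the 15-minute window are illustrative assumptions, not a description of how any particular SIEM or AI SOC product implements grouping.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class RawAlert:
    rule: str
    entity: str
    timestamp: datetime


def deduplicate(alerts, window=timedelta(minutes=15)):
    """Group alerts with the same rule and entity into one investigation.

    An alert joins the most recent group for its (rule, entity) key if it
    arrives within `window` of that group's latest alert; otherwise it
    starts a new group. The window length is an assumed value.
    """
    groups = []
    latest = {}  # (rule, entity) -> most recent group for that key
    for alert in sorted(alerts, key=lambda a: a.timestamp):
        key = (alert.rule, alert.entity)
        group = latest.get(key)
        if group is not None and alert.timestamp - group[-1].timestamp <= window:
            group.append(alert)
        else:
            group = [alert]
            groups.append(group)
            latest[key] = group
    return groups
```

With this grouping, a burst of port-scan alerts against one host collapses into a single group, while the same rule firing hours later or on a different host starts a separate one.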

How Are Human-in-the-Loop and Human-on-the-Loop Different?

Two similar terms describe how organizations build human judgment and direction into their AI SOC deployment.

- Human-in-the-Loop (HITL) means that a human reviews every investigation or response action. This strategy is often used for response actions that might disrupt business, such as quarantining a device or disabling a user. MSSPs may also use this strategy to review every AI-driven investigation that is escalated to a client.

- Human-on-the-Loop (HOTL) means that a human is able to intervene if needed and there are robust oversight mechanisms, but that the AI SOC agents operate autonomously for the most part. This strategy is often used by small and resource-constrained teams that have spent some time coaching or aligning their AI SOC analyst deployment.
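One way to picture the difference between the two modes is as an approval gate in front of each action. The sketch below is an assumption-laden illustration: the `Oversight` enum, the `requires_approval` function, and the action names are hypothetical, and the choice to always require approval for disruptive actions, even in HOTL mode, is a design assumption rather than something every team does.

```python
from enum import Enum


class Oversight(Enum):
    HUMAN_IN_THE_LOOP = "hitl"  # a human reviews before anything happens
    HUMAN_ON_THE_LOOP = "hotl"  # the AI acts; a human can intervene


# Hypothetical action names for responses that might disrupt business.
DISRUPTIVE_ACTIONS = {"quarantine_device", "disable_user"}


def requires_approval(action: str, mode: Oversight) -> bool:
    """Decide whether a human must approve `action` before it runs."""
    if action in DISRUPTIVE_ACTIONS:
        # Assumption: disruptive actions are gated in every mode.
        return True
    return mode is Oversight.HUMAN_IN_THE_LOOP
```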

When organizations take a human-in-the-loop approach, using AI SOC analysts as first-pass investigators rather than as the final authority, there are three benefits:

- Context gathering: AI assembles data from multiple sources

- Reasoned conclusions: AI applies investigation logic at scale

- Human accountability: Final decisions stay with analysts

This balance gives teams confidence and helps to allay any concerns about AI accuracy and reliability.

Why override paths matter:

Clear override paths are critical for adoption. When analysts know they can challenge or adjust AI conclusions at any time:

- Resistance fades quickly

- Analysts see AI as a head start, not a replacement

- Accountability stays where it belongs, with human experts

The system handles the heavy lifting while analysts stay accountable for the decisions that matter most.

On the other hand, human-on-the-loop strategies offer the most advantages for resource-constrained teams that spend effort aligning their AI SOC analyst with their unique environment and policies. This tuning effort results in high accuracy and gives the human teams confidence that they can trust the AI SOC analyst to operate in a fully autonomous manner.

Why Do Onboarding and Adoption Matter More Than Models?

Why Does a Measured Ramp-Up Lead to Better Outcomes?

From a technical standpoint, early success comes from constraining scope rather than maximizing coverage.

Phase 1: Initial Rollout

Target a well-defined alert class with:

- Consistent structure

- Known investigative paths

- Limited variables

This allows teams to validate:

- How context is gathered

- How correlations are formed

- How conclusions are produced

Analysts can examine reasoning chains, data sources, and decision confidence before the system assumes broader responsibility.

Phase 2: Controlled Expansion

As more alert types are added, teams tune investigation logic per environment:

- Run alongside human Tier 1 investigation to target mismatches

- Add environment and user behavior to context memory

- Build exception patterns

- Add mandatory checks for specific detections

The result: A system that scales horizontally across alerts without degrading decision quality.

QA Efficiency During Onboarding:

Several teams reduce quality assurance overhead by comparing AI conclusions with analyst decisions for a period of time. This focuses reviews only on mismatches. Instead of sampling every investigation, effort is spent where conclusions differ, and senior analysts add context memory and custom strategies to improve accuracy.
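The mismatch-focused review described above can be sketched in a few lines. This assumes, hypothetically, that both the AI and the human analysts record a verdict string per alert ID; the function names and the verdict labels are illustrative only.

```python
def find_mismatches(ai_verdicts, analyst_verdicts):
    """Return the alert IDs where AI and analyst conclusions differ.

    Both arguments map alert ID -> verdict string. Only alerts that
    both sides investigated are compared.
    """
    shared = ai_verdicts.keys() & analyst_verdicts.keys()
    return sorted(a for a in shared if ai_verdicts[a] != analyst_verdicts[a])


def agreement_rate(ai_verdicts, analyst_verdicts):
    """Fraction of jointly investigated alerts with matching verdicts."""
    shared = ai_verdicts.keys() & analyst_verdicts.keys()
    if not shared:
        return None  # nothing to compare yet
    mismatches = find_mismatches(ai_verdicts, analyst_verdicts)
    return (len(shared) - len(mismatches)) / len(shared)
```

Senior analysts would then review only the IDs returned by `find_mismatches`, and a rising agreement rate over time is one possible signal that scope can be widened.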

How Does Analyst Experience Shape Success?

Technically, the biggest shift analysts notice is where computation and correlation happen.

Tasks moving from human to automated workflows:

- Cross-tool searches

- IOC enrichment

- Timeline construction

- Reading through files, process trees, emails, etc.

- Entity linking

What this means for analysts:

Instead of reconstructing basic context, analysts review structured findings that already reflect:

- Multiple data sources

- Inferred relationships

- Correlation logic

New analyst focus areas:

- Validating AI-driven escalations

- Adding context memory and custom strategies to improve accuracy

- Communicating outcomes to stakeholders

Why this enables scale:

In practice, this allows the same analyst team to support more volume or more environments without increasing headcount or burning people out.

As Chris DeBrunner, VP of Security Operations at CBTS, described it:

"We've seen a huge reduction in repetitive work. Dropzone has offloaded about 30–50% of our alert volume. That's time we can now spend threat hunting, onboarding customers, or improving coverage."

Key Takeaways

- Noise reduction complements AI investigation: Suppression, grouping, and deduplication cut alert volume before AI enters the picture, so each tool handles the job it suits best.

- Selective AI coverage adds to existing automation: Use AI to shield human analysts from investigation-heavy alerts while routing deterministic alerts through SOAR automation when those playbooks exist.

- Human-in-the-loop and human-on-the-loop drive adoption: Position AI as first-pass investigator with explicit analyst override paths to build trust and overcome resistance.

- Phased onboarding determines success: Start with constrained alert classes, validate decision quality, then expand based on demonstrated value.

Conclusion

Across large-scale, long-running deployments, AI SOC analysts deliver their strongest results when they're paired with:

- Deliberate noise reduction before AI deployment

- Focus on investigation-heavy alerts

- Clear governance boundaries with human-in-the-loop and human-on-the-loop strategies

When implemented with care, AI doesn't replace SOC teams but gives them space to operate at a higher level, focusing on judgment and communication rather than repetitive tasks.

Ready to see this model in action? Try our self-guided demo of Dropzone AI, a live environment that you can explore to see how an AI SOC analyst can fit into your own operations.
