Threat hunting has always been one of the most effective practices in cybersecurity. It is also one of the most expensive to maintain. A single manual hunt takes 10 to 20 hours. Building and testing hypotheses across multiple data sources requires sustained analyst focus that most security teams cannot spare. According to the SANS 2025 Threat Hunting Survey, 61% of organizations cite staffing shortages as the top barrier to running sophisticated threat hunting programs. The consequence: most organizations hunt infrequently, or not at all.

AI threat hunting is reshaping that equation in 2026. It uses artificial intelligence to automate the search and correlation work that consumes the majority of every hunting cycle, while analysts retain control of hypothesis-driven threat hunting and strategic decision-making. AI is not replacing the analyst's judgment. It is removing the bottleneck. The transformation is happening across four dimensions: speed, detection capability, threat intelligence operationalization, and accessibility.

From Manual Hunts to Automated Threat Hunting

The traditional threat hunting workflow is sequential and slow. An analyst forms a hypothesis based on threat intelligence or environmental risk, then queries data sources one at a time: SIEM (Security Information and Event Management) logs, EDR (Endpoint Detection and Response) telemetry, cloud audit trails, identity provider records. Each query requires manual correlation with the last. Documenting findings and building a coherent investigation timeline adds more hours. A thorough hunt against a single hypothesis routinely takes a full day or more.

How does automated threat hunting work?

AI-augmented hunting compresses this cycle by running federated searches across all connected data sources simultaneously. The analyst still forms the hypothesis and defines what to look for. The AI handles the search, pattern matching, and initial correlation across every tool in the stack at machine speed. What took 10 to 20 hours of manual query-and-correlate work now takes approximately one hour.
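To make the federated-search idea concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not a description of any product: the three in-memory "sources", the `Hit` record, and every function name are hypothetical stand-ins for real SIEM, EDR, and identity-provider APIs. The analyst supplies the search term; the code fans the query out to all sources concurrently and then correlates hits by host.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class Hit:
    source: str
    host: str
    event: str


# Hypothetical in-memory stand-ins for SIEM, EDR, and identity-provider data.
SOURCES = {
    "siem": [("ws-07", "schtasks /create /tn updater"), ("db-01", "sshd accepted login")],
    "edr": [("ws-07", "cmd.exe spawned schtasks.exe"), ("ws-12", "chrome.exe started")],
    "idp": [("ws-07", "mfa challenge passed"), ("ws-12", "password reset")],
}


async def search_source(name: str, needle: str) -> list[Hit]:
    """Search one source; the sleep is a placeholder for a real API round trip."""
    await asyncio.sleep(0)
    return [Hit(name, host, event) for host, event in SOURCES[name] if needle in event]


async def federated_search(needle: str) -> list[Hit]:
    """Query every source concurrently instead of one at a time."""
    per_source = await asyncio.gather(*(search_source(n, needle) for n in SOURCES))
    return [hit for hits in per_source for hit in hits]


def correlate_by_host(hits: list[Hit]) -> dict[str, list[str]]:
    """Keep only hosts where the same term shows up in more than one source."""
    by_host: dict[str, set[str]] = {}
    for hit in hits:
        by_host.setdefault(hit.host, set()).add(hit.source)
    return {host: sorted(srcs) for host, srcs in by_host.items() if len(srcs) > 1}


hits = asyncio.run(federated_search("schtasks"))
print(correlate_by_host(hits))
```

The hypothesis (here, the search term) still comes from the analyst. Only the fan-out and the first correlation pass are automated.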

This is not a theoretical improvement. According to the IBM Cost of a Data Breach 2025 report, organizations that deploy AI and automation across their security operations reduce the breach identification and containment lifecycle by 80 days on average. Speed in detection compounds: faster identification means smaller blast radius, less data exfiltration, and simpler remediation.

The division of labor is clear. AI handles the search. Analysts keep the strategy.

Machine Learning Threat Detection Beyond Rules and Signatures

Rule-based detection systems catch known threats with known signatures. That model breaks down against adversaries who use legitimate system tools to operate. Living-off-the-land (LOTL) techniques, where attackers use PowerShell, WMI, scheduled tasks, and remote desktop rather than custom malware, do not trigger traditional signature-based detection. These techniques are not edge cases. In the SANS 2025 Threat Hunting Survey, 76% of respondents reported encountering living-off-the-land techniques attributed to nation-state actors in the past year.

What can machine learning detect that signatures miss?

ML models trained on behavioral baselines detect patterns that signature-based systems cannot:

- Unusual process execution chains that indicate hands-on-keyboard activity

- Abnormal authentication sequences across users, systems, and time windows

- Lateral movement patterns that deviate from established baselines

- Data staging behaviors that precede exfiltration
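As a rough illustration of the first pattern in the list above, the sketch below builds a frequency baseline of parent-to-child process pairs and flags pairs the baseline has never seen. This is a deliberately simple counting approach standing in for a trained model, and the event fields (`parent`, `child`), function names, and sample data are all assumptions made for the example.

```python
from collections import Counter


def build_baseline(events):
    """Count how often each (parent, child) process pair appears in known-good history."""
    return Counter((e["parent"], e["child"]) for e in events)


def rare_chains(baseline, new_events, max_seen=0):
    """Return new events whose process pair was seen at most `max_seen` times before."""
    return [e for e in new_events if baseline[(e["parent"], e["child"])] <= max_seen]


# Hypothetical history used as the behavioral baseline.
history = [
    {"parent": "explorer.exe", "child": "chrome.exe"},
    {"parent": "explorer.exe", "child": "chrome.exe"},
    {"parent": "explorer.exe", "child": "chrome.exe"},
    {"parent": "services.exe", "child": "svchost.exe"},
    {"parent": "services.exe", "child": "svchost.exe"},
]

# Hypothetical new telemetry to score against the baseline.
new_events = [
    {"parent": "explorer.exe", "child": "chrome.exe"},
    {"parent": "winword.exe", "child": "powershell.exe"},
]

baseline = build_baseline(history)
flagged = rare_chains(baseline, new_events)
print(flagged)
```

No signature is involved: the Word-to-PowerShell chain is flagged only because it deviates from what this environment normally does.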

MITRE ATT&CK provides the behavioral taxonomy that makes AI-augmented hunting systematic rather than ad hoc. ATT&CK v18 catalogs 691 Detection Strategies and 1,739 Analytics, all organized around behavioral detection at the TTP (Tactics, Techniques, and Procedures) level (MITRE ATT&CK v18, October 2025). AI systems trained on ATT&CK technique profiles can map anomalous behavior to known adversary TTPs automatically, turning the framework from a static reference manual into an active detection engine. When an AI system flags an unusual scheduled task creation pattern, it can simultaneously map that behavior to MITRE ATT&CK T1053 (Scheduled Task/Job) and surface related techniques the adversary is likely to use next.
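The mapping step can be sketched as a simple lookup. The technique IDs below are real ATT&CK identifiers for the living-off-the-land tools mentioned earlier, but the behavior labels, the `ATTACK_MAP` table, and the `tag_findings` helper are hypothetical names chosen for illustration. A production system would presumably derive the mapping from richer signals than a single label.

```python
# Behavior labels (hypothetical) mapped to MITRE ATT&CK technique IDs and names.
ATTACK_MAP = {
    "scheduled_task_created": ("T1053", "Scheduled Task/Job"),
    "powershell_execution": ("T1059.001", "Command and Scripting Interpreter: PowerShell"),
    "wmi_execution": ("T1047", "Windows Management Instrumentation"),
    "rdp_session": ("T1021.001", "Remote Services: Remote Desktop Protocol"),
}


def tag_findings(findings):
    """Attach an ATT&CK technique to each finding; unmapped behaviors get None."""
    tagged = []
    for finding in findings:
        technique_id, technique_name = ATTACK_MAP.get(finding["behavior"], (None, None))
        tagged.append(
            {**finding, "technique_id": technique_id, "technique_name": technique_name}
        )
    return tagged


# Hypothetical anomaly-detector output.
findings = [
    {"host": "ws-07", "behavior": "scheduled_task_created"},
    {"host": "ws-12", "behavior": "unusual_dns_volume"},
]

for row in tag_findings(findings):
    print(row["host"], row["technique_id"], row["technique_name"])
```

Leaving unmapped behaviors as `None` rather than guessing keeps the gap visible, so an analyst can decide whether a new mapping is warranted.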

AI does not replace signatures and rules. It catches what signatures miss.

Operationalizing Threat Intelligence at Machine Speed

Better detection means nothing if the threat intelligence feeding it arrives too late. Published threat intelligence has a shelf life problem. When a new advisory drops, the traditional workflow requires an analyst to read the report, extract relevant indicators and behavioral patterns, manually build hunt queries, and run them across data sources. That process takes days to weeks. Meanwhile, attackers are already in motion.

The speed gap is structural. According to the Verizon 2025 Data Breach Investigations Report, attackers achieve mass exploitation of CISA Known Exploited Vulnerabilities within a median of 5 days of disclosure, while organizations take a median of 32 days to remediate those same vulnerabilities. If it takes your team a week to translate a threat advisory into an active hunt, the median mass-exploitation window has already passed by the time you start searching.

How does AI turn threat intelligence into active hunts?

AI-augmented threat intelligence operationalization changes this timeline from days to minutes. New threat intelligence is ingested, parsed into behavioral indicators, and translated into hunt queries automatically. AI runs those hunts across the full security stack without waiting for an analyst to build and execute each query manually. The same process applies to curated hunt packs for emerging threats and vulnerabilities: instead of sitting in a queue, they execute continuously.
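A stripped-down version of that ingest, parse, and translate pipeline might look like the following. Everything here is an assumption made for illustration: the advisory text is invented, the regular expressions are a crude substitute for AI-driven parsing, and the query field names (`dest_ip`, `file_sha256`, `attack_technique`) belong to no particular SIEM. The ATT&CK technique ID stands in for the behavioral indicators described above, alongside simpler atomic indicators.

```python
import re

# Invented sample advisory; 203.0.113.0/24 is a reserved documentation range.
SAMPLE_HASH = "ab" * 32
ADVISORY = (
    "Actors connected from 203.0.113.45 and registered scheduled tasks (T1053). "
    f"Payload SHA-256: {SAMPLE_HASH}."
)

PATTERNS = {
    "ipv4": re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b"),
    "sha256": re.compile(r"\b[a-fA-F0-9]{64}\b"),
    "technique": re.compile(r"\bT\d{4}(?:\.\d{3})?\b"),
}

# Hypothetical field names; a real deployment would use its own schema.
QUERY_FIELDS = {
    "ipv4": "dest_ip",
    "sha256": "file_sha256",
    "technique": "attack_technique",
}


def extract_indicators(text):
    """Pull indicators out of free text, de-duplicated in order of appearance."""
    return {
        kind: list(dict.fromkeys(pattern.findall(text)))
        for kind, pattern in PATTERNS.items()
    }


def build_queries(indicators):
    """Turn each indicator into one query string in a generic field = "value" form."""
    return [
        f'{QUERY_FIELDS[kind]} = "{value}"'
        for kind, values in indicators.items()
        for value in values
    ]


queries = build_queries(extract_indicators(ADVISORY))
for query in queries:
    print(query)
```

Once advisories arrive as query lists like this, they can be handed straight to whatever runs the hunts, with no manual query-building step in between.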

When attackers move in days, threat intelligence that sits unactioned for weeks is intelligence wasted.

Making AI Threat Hunting Accessible to Every SOC

The staffing constraint is not temporary. Cybersecurity workforce shortages are structural, and the organizations that need proactive threat hunting the most, those with the highest risk exposure, are often the same ones that cannot dedicate analysts exclusively to hunting. The staffing barrier cited by 61% of organizations in the SANS survey is not a problem that hiring alone will solve.

AI-augmented hunting changes the accessibility equation in three ways:

- Lowers the skill barrier. Analysts who lack dedicated threat hunting experience can direct AI systems using natural language instructions and pre-built hunt templates rather than writing complex queries from scratch.

- Makes hunting continuous. Manual programs operate on cycles: quarterly hunts, monthly exercises, or ad hoc investigations triggered by incidents. AI-augmented systems run hunts continuously, testing hypotheses around the clock across all connected data sources.

- Scales without headcount. The same AI system that runs one hunt can run ten in parallel. Adding coverage does not require adding people. It requires adding hypotheses.

AI augmentation is not about replacing threat hunters. It is about making threat hunting accessible to teams at their current staffing levels, so the organizations that need hunting the most are no longer the ones doing it the least.

Key Takeaways

- AI compresses manual threat hunts from 10 to 20 hours to approximately one hour by automating federated searches across all connected data sources.

- Machine learning detects behavioral anomalies, including living-off-the-land techniques, lateral movement, and data staging, that rule-based detection misses. 76% of SANS 2025 respondents reported encountering LOTL techniques from nation-state actors.

- MITRE ATT&CK v18 catalogs 691 Detection Strategies and 1,739 Analytics built around TTP-level behavioral detection. AI systems trained on ATT&CK technique profiles turn this framework into an active detection engine.

- AI operationalizes threat intelligence in minutes instead of days, closing the gap between published advisories and active defense.

- 61% of organizations cite staffing shortages as the top barrier to hunting. AI augmentation makes hunting accessible without adding headcount.

- The analyst's role shifts from manual search to strategic direction: forming hypotheses, coaching AI systems, and acting on findings.

Threat hunting in 2026 looks different from threat hunting three years ago. AI has not replaced the analyst. It has removed the bottleneck that prevented most teams from hunting at all. The speed gap between attackers and defenders is narrowing because AI handles the repetitive search work that consumed entire analyst days. The accessibility gap is closing because hunting no longer requires a dedicated team of specialists. And the intelligence gap is shrinking because threat advisories translate into active hunts in minutes, not weeks. The organizations investing in AI-augmented hunting now are the ones that will have continuous, scalable hunting programs running when the next wave of threats arrives.

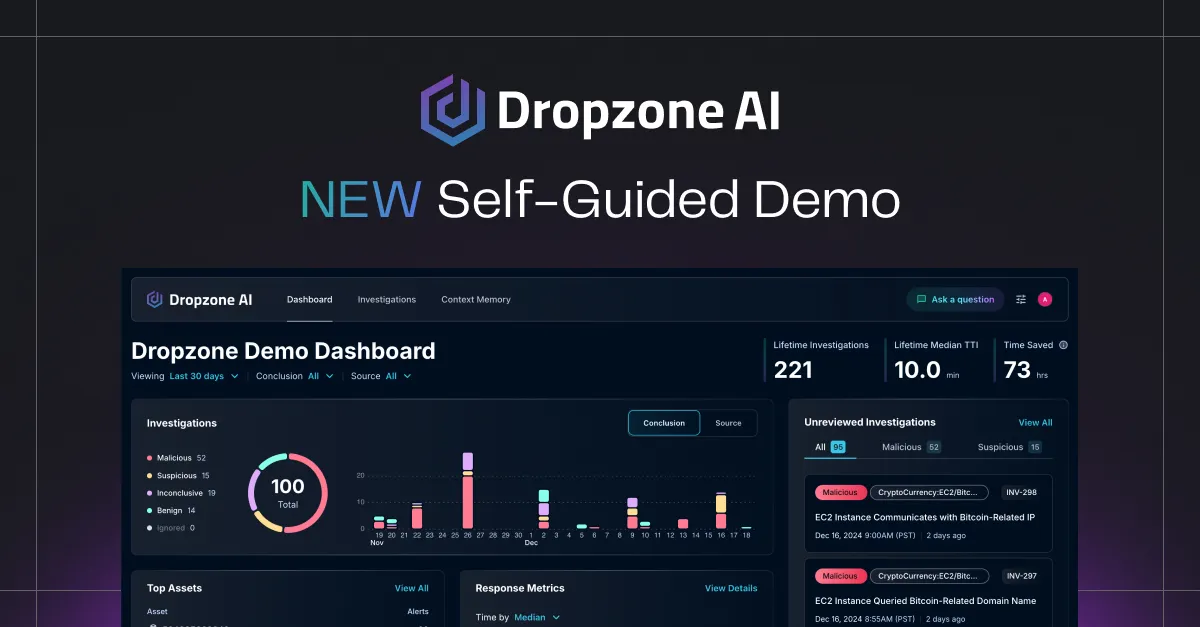

Dropzone AI's AI Threat Hunter, launching in Q2 2026, is designed to automate the search and analysis phases of the hunting cycle so analysts can focus on generating hypotheses and acting on findings.
