Introduction

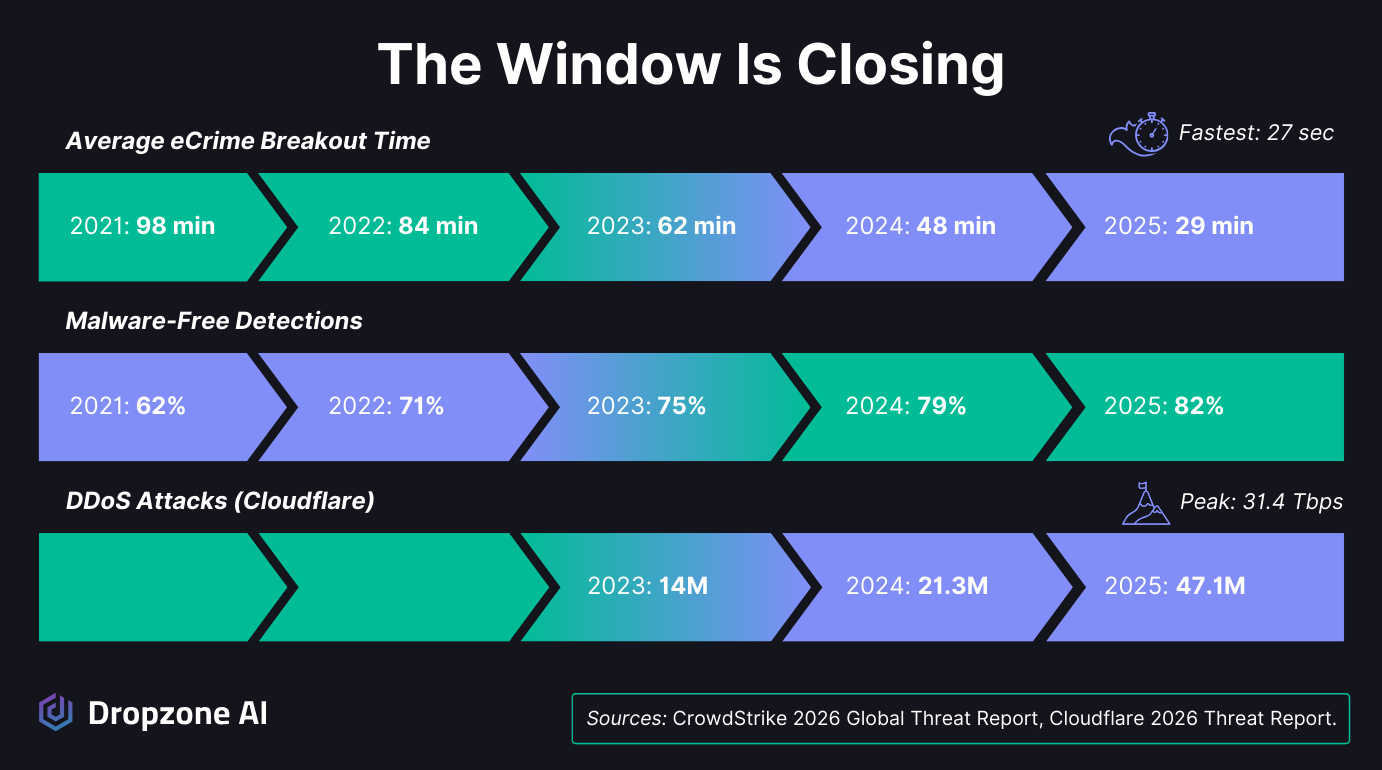

Every major threat report from early 2026 is saying the same thing: AI has industrialized cybercrime. Over the past 10 years, an entire ecosystem of cybercriminals developed around ransomware, from initial access brokers to affiliate systems. But attackers still relied on skill and largely manual workflows. AI changes this. Unsophisticated actors are compromising hundreds of devices across dozens of countries with AI-generated toolkits. LLM agents are producing working zero-day exploits for roughly $50 in compute. Average eCrime breakout times have dropped to 29 minutes, with the fastest at 27 seconds.

And this is only what we can see from attackers using public AI services. Adversaries who host their own models leave no telemetry, so every number in every report is a floor, not a ceiling. If your team is still relying on manual triage and reactive investigation, the math no longer works. Here's what the latest research tells us about how attackers are scaling up, why reactive security can't keep pace, and what it actually takes to fight back.

Why Doesn't "Sophisticated" Matter Anymore?

Sophistication no longer predicts damage. The question that matters now is how much disruption an attacker achieved relative to the effort they spent, and AI has collapsed that ratio in the attacker's favor.


How has "clever" stopped being the measure of a threat?

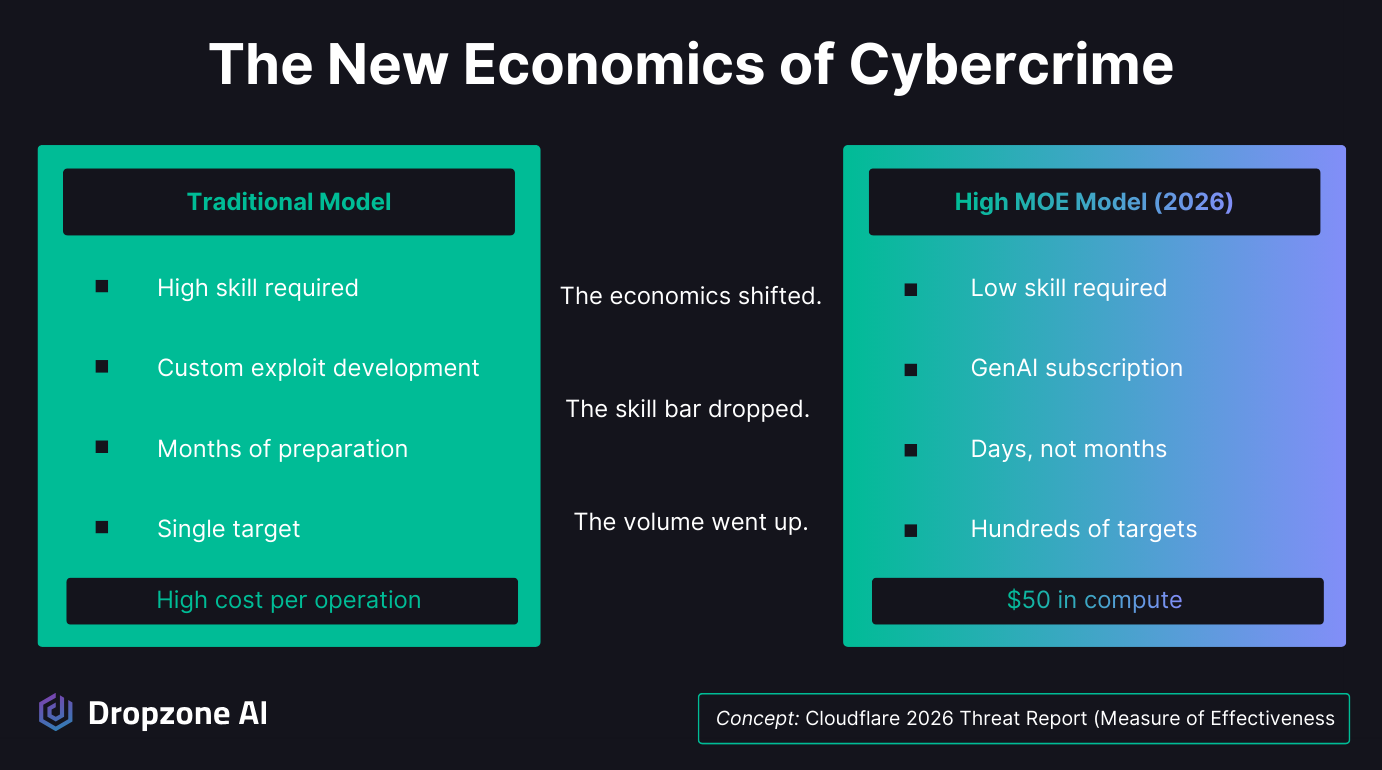

For years, the security industry measured danger by sophistication: the more elegant the exploit and the more novel the zero-day, the scarier we considered the threat. That thinking is outdated. Cloudflare's 2026 Threat Report puts a name to what many of us have been feeling: the Measure of Effectiveness, or MOE.

Instead of asking how technically impressive an attack is, MOE asks a simpler question. How much disruption did the attacker achieve relative to how much effort they put in?
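Read informally, MOE is a ratio. The expression below is our own shorthand for that question, not a formula Cloudflare publishes, and it assumes "disruption" and "effort" are left as unitless, qualitative quantities rather than measured values:

```latex
\mathrm{MOE} = \frac{\text{disruption achieved}}{\text{effort expended}}
```

Under this reading, AI shifts the ratio in the attacker's favor mainly by shrinking the denominator: the same disruption for far less effort.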

That shift in framing changes everything. An attacker doesn't need a custom exploit chain that took months to build when a cheap GenAI subscription can automate credential harvesting across thousands of targets in just days.

The cost of finding and weaponizing software vulnerabilities has been commoditized. What used to take a team of skilled operators now takes a credit card and a prompt. When you look at it through that lens, volume and speed matter much more than technical elegance.

This is what every major threat report from early 2026 is confirming, just from different angles:

- CrowdStrike tracked AI usage across the entire kill chain, with resource development up 109% and execution up 134% year over year.

- Amazon caught a single, likely unsophisticated actor compromising over 600 FortiGate devices across 55+ countries using entirely AI-generated tools.

- Sean Heelan's research showed that LLM agents can produce over 40 working exploits for a zero-day vulnerability, with the hardest scenario costing roughly $50 in compute.

Different methodologies, different datasets, same takeaway: the economics of cybercrime have shifted, and understanding that shift is the first step toward adapting your defenses.

Why is every AI-attack stat a floor, not a ceiling?

The published numbers only capture adversaries using commercial AI services like OpenAI’s ChatGPT and Anthropic’s Claude. Attackers who self-host open-source models leave no telemetry, so the real scope is larger than any report can show.

There's an important nuance worth calling out. Every stat mentioned above comes from attackers who use publicly available commercial AI services. CrowdStrike can track AI-augmented tactics because the actors are using tools with observable footprints. Amazon caught the FortiGate campaign because the actor relied on commercial GenAI platforms. Cloudflare's data comes from processing roughly 20% of the world's internet traffic, which provides one of the largest visibility windows into global threats.

What this doesn't capture is attackers who host their own models. Anyone running open-weight LLMs on private infrastructure doesn't leave the same telemetry trail. No API logs, no usage patterns, no observable data for vendors to report on.

The ability to self-host is widely accessible and becoming cheaper every month, so it's reasonable to assume that some portion of AI-augmented activity occurs outside the window these reports cover.

That doesn't mean the numbers are useless; they're directionally accurate and backed by strong data. But it's worth treating them as a baseline rather than the full picture.

How Are Attackers at Every Skill Level Using AI to Scale Up?

AI has closed the skill gap. Unsophisticated actors now run campaigns that previously required specialist teams, and the middle tier has been pulled up to capabilities that used to belong to nation-states.

Why don't attackers need to be skilled hackers anymore?

Amazon's threat intelligence team documented a case that really drives home the MOE concept. A financially motivated, Russian-speaking actor used commercial GenAI services to compromise over 600 FortiGate devices across 55+ countries in about five weeks. They didn't exploit any vulnerabilities.

They found exposed management ports, tested weak credentials, and let AI do the heavy lifting:

- Scanning and reconnaissance

- Credential extraction and testing

- Config parsing to map target environments

- VPN automation for persistence

The tooling for every step was AI-generated. When they hit a hardened environment, they didn't bother trying to break through. They just moved on. Why waste effort on hard targets when there are hundreds of easy ones?

Cloudflare documented the same pattern in the SaaS world. Their investigation into the GRUB1 threat actor identified an unsophisticated individual who used automated tools to scan code repositories for credentials and then used LLMs to navigate Salesforce environments they'd never seen before.

The actor used AI to locate the most valuable database tables in production instances within moments of gaining access. One compromised integration cascaded into breaches across hundreds of tenants.

Your security is only as strong as the most over-privileged integration in your stack, and attackers now have AI copilots that can figure out how to exploit those connections on the fly.

CrowdStrike puts these cases into the bigger picture. AI usage is showing up across the entire kill chain:

- Resource development up 109% year over year

- Execution up 134% year over year

- Defense evasion up 88% year over year

The groups getting the biggest boost aren't the elite nation-state teams. They're the middle tier: operators who previously lacked the skill for complex campaigns. AI closed that gap. Social engineering is scaling, too.

Fake CAPTCHA lures surged 563%. Malware, convincing phishing emails, and privilege-escalation techniques that once required real expertise are now just a few prompt iterations away.

How is AI commoditizing exploit development?

Exploit research has always been the most expensive, most specialized part of the offensive lifecycle. Recent research shows LLM agents can now produce working zero-day exploits for roughly $50, which collapses the cost structure of the hardest work in offense.

The examples above are about AI amplifying things attackers already knew how to do, such as scanning, credential stuffing, and social engineering. AI made them faster and cheaper.

But security researcher Sean Heelan's work points to something bigger: AI is starting to handle tasks that previously required the rarest skills in cybersecurity.

Heelan showed that LLM agents can generate working exploits for zero-day vulnerabilities, bypassing modern mitigations including:

- ASLR (Address Space Layout Randomization)

- Non-executable memory

- Control-flow integrity

- Hardware shadow stacks

In total, the agents produced over 40 distinct working exploits across six scenarios.

The hardest one, a seccomp sandbox with full RELRO, CFI, and hardware shadow stack, took about three hours and cost roughly $50. His argument is that the limiting factor on exploit development will soon be token throughput, not headcount.

That matters because exploit research sits at the top of the offensive cost structure. If AI commoditizes it, the ripple effects will be felt everywhere.

Cloudflare reached the same conclusion from the other side. Their product security team used an AI tool called OpenCode to audit their own product and uncovered a critical exploit chain that led to remote code execution. The cost comparison was striking: traditional fuzzing requires large compute farms and dedicated researchers.

AI collapsed that to a fraction of the time and expense. They framed it as "offense by the system" versus "security by the system." The tools are the same on both sides. CrowdStrike's 42% year-over-year increase in zero-day exploitation suggests attackers are already well ahead. The question is whether defenders are keeping pace.

Why Can't Reactive Security Keep Up?

Speed and stealth have combined to make manual investigation structurally inadequate. Attackers move inside networks in minutes, and they look like legitimate users while they do it.

How fast are attackers actually moving?

Faster than humans can respond. The average eCrime breakout window has compressed to minutes, and data exfiltration can begin almost immediately after initial access.

CrowdStrike measured the average eCrime breakout time at 29 minutes this year, with the fastest at 27 seconds; in one case, data exfiltration began within 4 minutes of initial access. That's the entire window to detect, understand, and act, which for most SOC teams running manual workflows isn't realistic.

Adversaries are also deliberately targeting the surfaces where visibility is weakest and response is slowest:

- Identity systems

- SaaS applications

- Edge devices

- Unmanaged hosts

CrowdStrike also tracked shifts in who is attacking and where:

- China-nexus activity up 38% overall

- Logistics attacks up 85%

- Telecom attacks up 30%

- Edge devices involved in 40% of intrusions

- Cloud-conscious intrusions up 37%, with a 266% increase from state-nexus actors

The attack surface is expanding faster than most organizations can staff for.

Why do modern attacks look like normal business activity?

Because attackers have shifted from exploiting software to exploiting identity. Most intrusions now run through valid credentials and approved integrations, so they don't look like attacks until damage is done.

The speed gap would be manageable if intrusions were easy to spot, but they increasingly aren't. CrowdStrike found that 82% of detections were malware-free in 2025, up from 51% in 2020. Attackers are operating through valid credentials, trusted identity flows, and approved SaaS integrations. They look like employees doing their jobs.

Cloudflare's data shows how this works at scale: 94% of login attempts came from bots, and 63% of human logins involved already-compromised credentials. Infostealers like LummaC2 harvest live session tokens, bypassing MFA by stealing the authenticated session after the user has already logged in.

Cloudflare called it the shift from "attacking the box" to "attacking the session." The Verizon 2025 DBIR confirmed that 54% of ransomware attacks traced back to infostealer-enabled credential theft.

Supply chain compromises take it a step further. CrowdStrike documented PRESSURE CHOLLIMA executing the largest single financial theft ever, $1.46 billion in cryptocurrency, through trojanized software in a compromised supply chain. Malicious npm packages were downloaded millions of times before they were detected.

These attacks don't trigger your EDR or light up your SIEM. They look like trusted software doing what it's supposed to do, which means catching them requires understanding behavior and context at a pace that manual investigation can't sustain across the alert volumes most teams deal with daily.

How Does Dropzone Help You Fight Back?

Match the adversary's AI investment with AI-powered investigation. With a Dropzone AI SOC Analyst, every alert gets a full senior-analyst-level investigation in minutes, so your team is never deciding which alerts they have time for.

AI-made attacks are cheap to run at scale, that volume moves at breakout speeds measured in minutes, and the intrusions look like normal activity because they use valid credentials rather than malware. A SOC built around analysts manually working a queue wasn't designed for that combination.

Dropzone's AI SOC Analyst reasons through every alert as a senior analyst would, pulling context from across your stack and reaching a conclusion in minutes. Every alert gets a full investigation, not just the ones your team has time to get to. Case in point: Dropzone AI recently investigated medium-severity alerts from Microsoft Defender that wouldn’t typically be prioritized in an analyst queue, but which turned out to be the first sign of compromise in the recent Axios supply chain attack.

When 82% of detections are malware-free and look like legitimate activity, what catches them is understanding context and recognizing when something normal isn't. Dropzone does that at machine scale, 24/7, directed by your team's strategy. Your analysts move from frontline triage to the work that actually needs them.

Conclusion

The threat research from early 2026 all points in the same direction. Cybercrime has industrialized, the economics favor the attacker, and the attacks themselves look like normal business activity. But the measure of effectiveness (MOE) equation works for defenders too. AI that investigates every alert thoroughly and operates around the clock without being bottlenecked by headcount is how security teams close the gap. Curious what that looks like in practice? Explore real investigations in the self-guided demo, a live environment you can walk through on your own.