You ran a solid hunt last quarter. Found a misconfigured service account with excessive privileges. Wrote it up, handed it to the detection engineering team, moved on. Good outcome.

But here's the thing: that was three months ago. The program hasn't grown since. Same cadence, same analyst, same handful of hypotheses recycled from last year's threat report. The hunts that do happen are good. There just aren't enough of them, and the ones that come back clean get filed and forgotten.

That pattern isn't a skill problem. It's a structural one. The seven mistakes below show up in threat hunting programs of every size, and fixing them doesn't require more headcount. It requires different decisions about how hunts get structured and executed.

What Are the Most Common Threat Hunting Mistakes?

1. Hunting only from IOCs instead of hypotheses

Searching for known-bad indicators (specific hashes, IP addresses, domains) is valuable, but it isn't threat hunting. It's detection. You're looking for things you already know about.

Proactive threat hunting starts from a behavioral hypothesis: "Is there evidence of credential dumping via LSASS access in this environment over the last 30 days?" That question targets a technique, not an artifact. It catches attackers who change their tooling but not their behavior.

The fix is straightforward. Start every hunt from a hypothesis tied to a MITRE ATT&CK technique, not from a list of IOCs. IOC sweeps still have value, but they're a separate activity from hunting.

2. Not documenting hypotheses or hunt results

A hunt without documentation can't be repeated, measured, or improved. Clean results get lost, so the same ground gets re-covered six months later without anyone realizing it.

The fix: use a structured hypothesis format for every hunt. Technique being tested, data sources queried, expected evidence, timeframe, and result (positive finding or coverage confirmation). When every hunt produces a documented output, the program builds institutional knowledge instead of relying on individual memory.
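As a rough illustration, the fields listed above could be captured in a small record type. This is a minimal sketch, not a prescribed schema: the names `HuntRecord` and `HuntResult`, the field names, the dates, and the expected-evidence wording are all assumptions made for the example. The technique ID is the ATT&CK entry for LSASS memory credential dumping.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date
from enum import Enum


class HuntResult(str, Enum):
    # The two outcomes named in the text: a finding, or confirmed coverage.
    POSITIVE_FINDING = "positive_finding"
    COVERAGE_CONFIRMED = "coverage_confirmed"


@dataclass
class HuntRecord:
    """One documented hunt (hypothetical schema for illustration)."""
    technique_id: str        # MITRE ATT&CK technique being tested
    hypothesis: str          # the behavioral question the hunt asks
    data_sources: list[str]  # where the hunt looked
    expected_evidence: str   # what a positive finding would look like
    window_start: date       # timeframe covered by the hunt
    window_end: date
    result: HuntResult

    def to_json(self) -> str:
        # Convert dates and the enum to plain strings so the record can be
        # stored in any ticketing system, wiki, or data store.
        payload = asdict(self)
        payload["window_start"] = self.window_start.isoformat()
        payload["window_end"] = self.window_end.isoformat()
        payload["result"] = self.result.value
        return json.dumps(payload, indent=2)


record = HuntRecord(
    technique_id="T1003.001",
    hypothesis="Is there evidence of credential dumping via LSASS access "
               "in this environment over the last 30 days?",
    data_sources=["edr", "siem"],
    expected_evidence="Unexpected processes opening handles to lsass.exe",
    window_start=date(2025, 1, 1),
    window_end=date(2025, 1, 30),
    result=HuntResult.COVERAGE_CONFIRMED,
)
print(record.to_json())
```

Because a clean hunt is stored with the same fields as a positive one, the question "have we tested this technique before?" can be answered by looking the record up.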

3. Hunting too infrequently to build coverage

Most teams hunt quarterly at best. Some hunt once or twice a year. The reason is usually time. The SANS 2025 Detection and Response Survey found that 73% of security teams cite excessive false positives as their top detection challenge, and when the alert queue consumes the day, proactive hunting gets deferred.

The problem with infrequent hunting is that coverage gaps persist unvalidated between hunts. A lot changes in 90 days: new infrastructure, new attack techniques, new blind spots. Quarterly hunts can't keep up.

The fix is reducing the cost per hunt so frequency becomes sustainable. When AI agents handle hunt execution, what used to take up to 40 hours of analyst time compresses to roughly one hour. Quarterly cadence becomes weekly without adding headcount.
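The cadence claim can be checked with simple arithmetic. The sketch below uses the figures quoted above as inputs (40 hours is the stated upper bound, and one hour is the stated approximation), so treat the outputs as illustrative rather than measured. The function name is hypothetical, and the calculation covers execution time only, not the analyst time spent developing hypotheses or acting on findings.

```python
HOURS_PER_MANUAL_HUNT = 40  # upper bound quoted in the text
HOURS_PER_AGENT_HUNT = 1    # "roughly one hour" from the text


def annual_hunt_hours(hunts_per_year: int, hours_per_hunt: float) -> float:
    """Total execution hours per year for a given cadence."""
    return hunts_per_year * hours_per_hunt


quarterly_manual = annual_hunt_hours(4, HOURS_PER_MANUAL_HUNT)
weekly_agent = annual_hunt_hours(52, HOURS_PER_AGENT_HUNT)

print(f"Quarterly, manual execution: {quarterly_manual} hours/year")
print(f"Weekly, agent execution:     {weekly_agent} hours/year")
```

Under these assumptions, 52 hunts a year cost fewer execution hours than 4 manual ones, which is why the higher cadence does not require more headcount.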

4. Ignoring clean hunt results

A hunt that finds no evidence of the tested technique still tells you something valuable: your detection coverage for that technique, in that timeframe, across those data sources, held up. That's posture confirmation, and it's one of the most actionable outputs a hunting program produces.

Teams that dismiss clean results as "nothing found" lose that signal. They can't tell the difference between "we've validated coverage for this technique" and "we've never tested it."

The fix: treat every hunt result, positive or negative, as detection validation data. A clean result closes a gap in your coverage map. A positive finding opens an investigation. Both advance the program.
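One way to picture the coverage map is as a lookup from technique to status, where "never tested" is the default and every hunt result overwrites it. The following is a minimal sketch under that assumption. The `Coverage` states, the `build_coverage_map` function, and the example technique list are hypothetical names chosen for illustration.

```python
from enum import Enum


class Coverage(Enum):
    NEVER_TESTED = "never_tested"  # no hunt has looked at this technique
    VALIDATED = "validated"        # a hunt came back clean
    FINDING = "finding"            # a hunt found evidence; investigate


def build_coverage_map(techniques_in_scope, hunt_results):
    """Build a technique -> Coverage map.

    hunt_results is an iterable of (technique_id, found_evidence) tuples
    in chronological order; the most recent result for a technique wins.
    """
    coverage = {t: Coverage.NEVER_TESTED for t in techniques_in_scope}
    for technique_id, found_evidence in hunt_results:
        coverage[technique_id] = (
            Coverage.FINDING if found_evidence else Coverage.VALIDATED
        )
    return coverage


scope = ["T1003.001", "T1059.001", "T1078"]
results = [("T1003.001", False), ("T1078", True)]
coverage = build_coverage_map(scope, results)

for technique, status in coverage.items():
    print(technique, status.value)
```

The clean result for `T1003.001` is what separates it from `T1059.001`, which has never been tested. Discarding clean results would make the two indistinguishable.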

5. Relying on a single data source

Hunting only in the SIEM means you're missing what lives in EDR telemetry, identity provider logs, cloud workload data, and threat intelligence feeds. Attackers don't constrain themselves to one layer of your stack. Hunts shouldn't either.

The practical barrier is access: querying across four platforms takes four different query languages, four different interfaces, and four times the effort. Most analysts default to the source they know best.

The fix: federated query execution across all available data sources. This is one of the areas where AI agents change the math. Agents that support 90+ integrations query across the full stack simultaneously without the analyst switching tools or writing platform-specific queries.
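To make "federated query execution" concrete, here is a minimal sketch of the fan-out pattern: one hunt question dispatched to several data sources in parallel, with results collected per source. It does not represent any vendor's implementation. The connector functions are hypothetical stubs standing in for real SIEM, EDR, and identity provider clients, and the `federated_hunt` name and signature are assumptions.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# Hypothetical connector signature: (technique_id, lookback_days) -> events.
Connector = Callable[[str, int], list[dict]]


def federated_hunt(technique_id: str, lookback_days: int,
                   connectors: dict[str, Connector]):
    """Run the same hunt against every connector concurrently.

    Returns (results, errors). A source that fails is reported in errors
    rather than being silently counted as a clean result.
    """
    results, errors = {}, {}
    with ThreadPoolExecutor(max_workers=len(connectors) or 1) as pool:
        futures = {
            name: pool.submit(fn, technique_id, lookback_days)
            for name, fn in connectors.items()
        }
        for name, future in futures.items():
            try:
                results[name] = future.result()
            except Exception as exc:
                errors[name] = str(exc)
    return results, errors


# Stub connectors for illustration only.
def siem_stub(technique_id, lookback_days):
    return [{"source": "siem", "technique": technique_id}]


def edr_stub(technique_id, lookback_days):
    return []


def idp_stub(technique_id, lookback_days):
    raise TimeoutError("identity provider did not respond")


results, errors = federated_hunt(
    "T1003.001", 30,
    {"siem": siem_stub, "edr": edr_stub, "idp": idp_stub},
)
print(results)
print(errors)
```

Keeping failures separate from empty results matters for coverage claims: an unreachable source means the technique was not tested there, not that the hunt came back clean.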

6. Treating threat hunting as an analyst side project

When hunting competes with the alert queue for the same analyst's time, the queue always wins. Alerts are urgent. Hunts are important. Urgency beats importance every time.

Programs that depend on analysts finding spare hours between triage shifts produce inconsistent results. Some weeks there's time for a hunt. Most weeks there isn't.

The fix: separate execution from strategy. Under the agentic SOC model, AI agents handle alert investigation and hunt execution independently. The AI SOC Analyst handles the alert queue. The AI Threat Hunter, coming Summer 2026, handles hypothesis-driven hunts. Analysts own hypothesis development and act on findings. The two workstreams stop competing for the same person's time.

7. Never measuring hunt outcomes

Without metrics, you can't demonstrate value, justify investment, or identify where the program is improving. "We ran some hunts" isn't a report your CISO can use.

The metrics that matter for a hunting program:

- Hypotheses tested per quarter. Volume of coverage validation.

- Coverage gaps identified. Where your detection has blind spots.

- Detections created from hunt findings. Hunts that improve the detection layer.

- Mean time from hypothesis to finding. How quickly you can validate or refute a hypothesis.

The fix: build measurement into the hunt workflow from the start, not as a retrospective. When AI agents produce structured findings (technique assessed, data sources queried, evidence status, coverage gaps), the raw data for these metrics builds automatically.
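If each hunt is recorded in a structured form, the four metrics above reduce to simple aggregation. The sketch below assumes a hypothetical `HuntOutcome` record with illustrative field names and made-up example timestamps. It is meant to show the shape of the calculation, not a reporting standard.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean


@dataclass
class HuntOutcome:
    """Per-hunt fields needed for program metrics (hypothetical schema)."""
    technique_id: str
    hypothesis_opened: datetime  # when the hypothesis was written down
    result_recorded: datetime    # when the hunt validated or refuted it
    gap_identified: bool         # did the hunt expose a detection blind spot?
    detections_created: int      # new detections written from this hunt


def program_metrics(outcomes: list[HuntOutcome]) -> dict:
    """Aggregate one reporting period's hunts into the four metrics."""
    hours = [
        (o.result_recorded - o.hypothesis_opened).total_seconds() / 3600
        for o in outcomes
    ]
    return {
        "hypotheses_tested": len(outcomes),
        "coverage_gaps_identified": sum(o.gap_identified for o in outcomes),
        "detections_created": sum(o.detections_created for o in outcomes),
        # None when no hunts were run, so an empty period is not reported as 0.
        "mean_hours_hypothesis_to_finding": mean(hours) if hours else None,
    }


outcomes = [
    HuntOutcome("T1003.001", datetime(2025, 1, 6, 9), datetime(2025, 1, 6, 11),
                gap_identified=False, detections_created=0),
    HuntOutcome("T1078", datetime(2025, 1, 13, 9), datetime(2025, 1, 13, 13),
                gap_identified=True, detections_created=2),
]
metrics = program_metrics(outcomes)
print(metrics)
```

Running the same function each quarter gives a trend line for the program without anyone having to reconstruct hunt history after the fact.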

How Do These Mistakes Affect Your Security Posture?

These seven mistakes compound. A program that hunts infrequently, from IOCs, with no documentation and no measurement, isn't really running a threat hunting program. It's running ad-hoc searches when time allows.

The result: coverage gaps persist undetected. Detection engineering doesn't improve because hunt findings aren't feeding back into the detection layer. The program can't demonstrate ROI because there's nothing to measure. And when leadership asks what the hunting program has accomplished this year, the answer is vague.

What Does a Mature Threat Hunting Process Look Like?

It's hypothesis-driven, documented, measured, and frequent. The execution layer and the strategy layer are separated: agents or dedicated resources handle execution, while analysts own hypothesis development, threat modeling, and acting on findings.

Coverage validation is the primary output, not just threat detection. Every hunt produces a documented result that either confirms coverage or surfaces a gap. Over time, the program builds a map of what's tested and what isn't, which gives you something no amount of tool-buying provides: confidence in your coverage.

How Can AI Agents Help Avoid These Threat Hunting Mistakes?

The seven mistakes split into two groups. Mistakes 3, 5, 6, and 7 are operational. They're about how the program runs day-to-day, and AI agents change the economics directly. Mistakes 1, 2, and 4 are structural. They're about how the program is designed, which is strategic work that depends on judgment and an understanding of the broader organization. Agents can streamline operations, but they can't fix a broken program.

Where AI agents change the operational layer:

- Frequency (mistake 3). Dropzone's AI Threat Hunter, coming Summer 2026, compresses up to 40 hours of hunt execution to roughly an hour. The cost barrier to running more hunts drops, and weekly cadence becomes practical.

- Coverage (mistake 5). AI agents query across 90+ integrations simultaneously. No single-source limitation. No switching between platforms.

- Dedicated resources (mistake 6). Agents run hunts without competing with the alert queue. The AI SOC Analyst (available now) handles alerts. The AI Threat Hunter (Summer 2026) handles hunts. Different agents, different workstreams.

- Measurement (mistake 7). Structured findings from agents include technique assessed, data sources queried, coverage gaps, and evidence status. The data for program metrics builds as a byproduct of every hunt, and the same structured output supplies the documentation that mistake 2 calls for.

Where the work stays structural:

Mistakes 1, 2, and 4 are program-design choices. Hunting from hypotheses (rather than IOCs), documenting results consistently, and treating clean results as detection-validation data are decisions about how the program is set up. Agents can produce structured outputs that make documentation effortless and surface coverage findings that make clean results actionable, but they can't decide to run the program that way. That's leadership and strategy work.

Key Takeaways

- Most hunting programs stall from structural mistakes, not skill gaps. The seven mistakes above show up at every program size and maturity level.

- IOC-only hunting, poor documentation, and infrequent cadence are the most common starting points. Fixing these three changes the trajectory of the program.

- Clean hunt results are as valuable as positive findings. Treating them as detection validation data builds your coverage map and gives leadership something concrete to report.

- AI agents handle the operational layer; structural work stays with humans. Hypotheses, documentation discipline, and recognizing the value of clean results are program-design choices. Agents make the operations sustainable. Leadership decides how the program runs.

- A mature hunting program is hypothesis-driven, documented, measured, and frequent. Separate execution from strategy, and the rest follows.

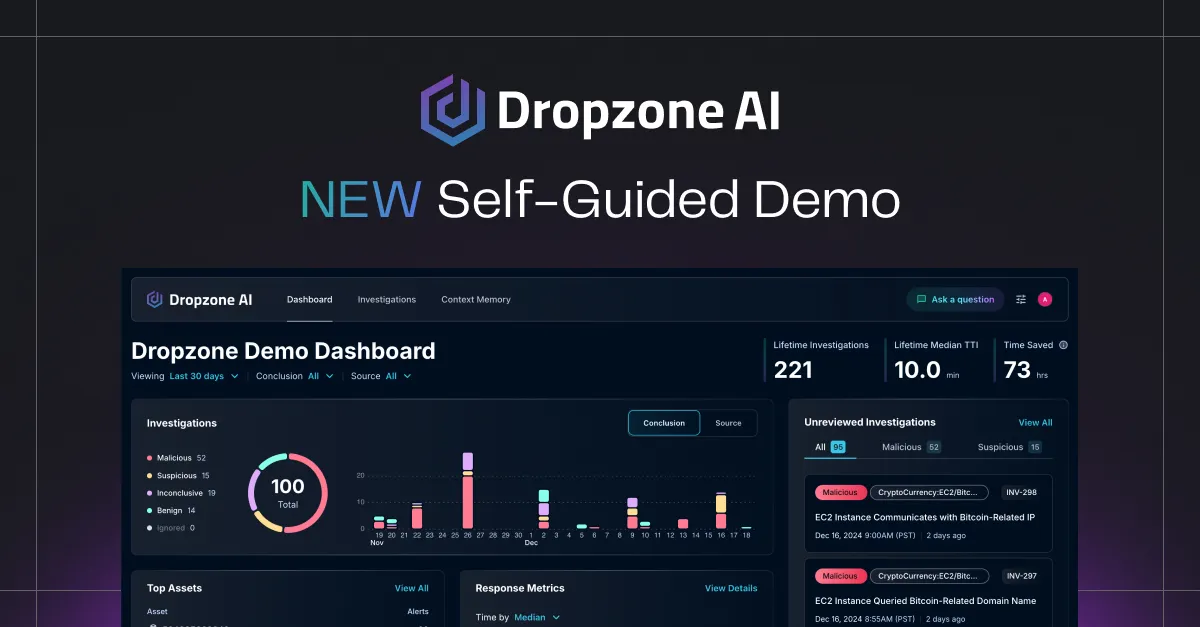

Ready to see how AI agents change the economics of threat hunting? Request a demo or explore how Dropzone AI's 90+ integrations connect to your existing tool stack from day one.