Key Takeaways

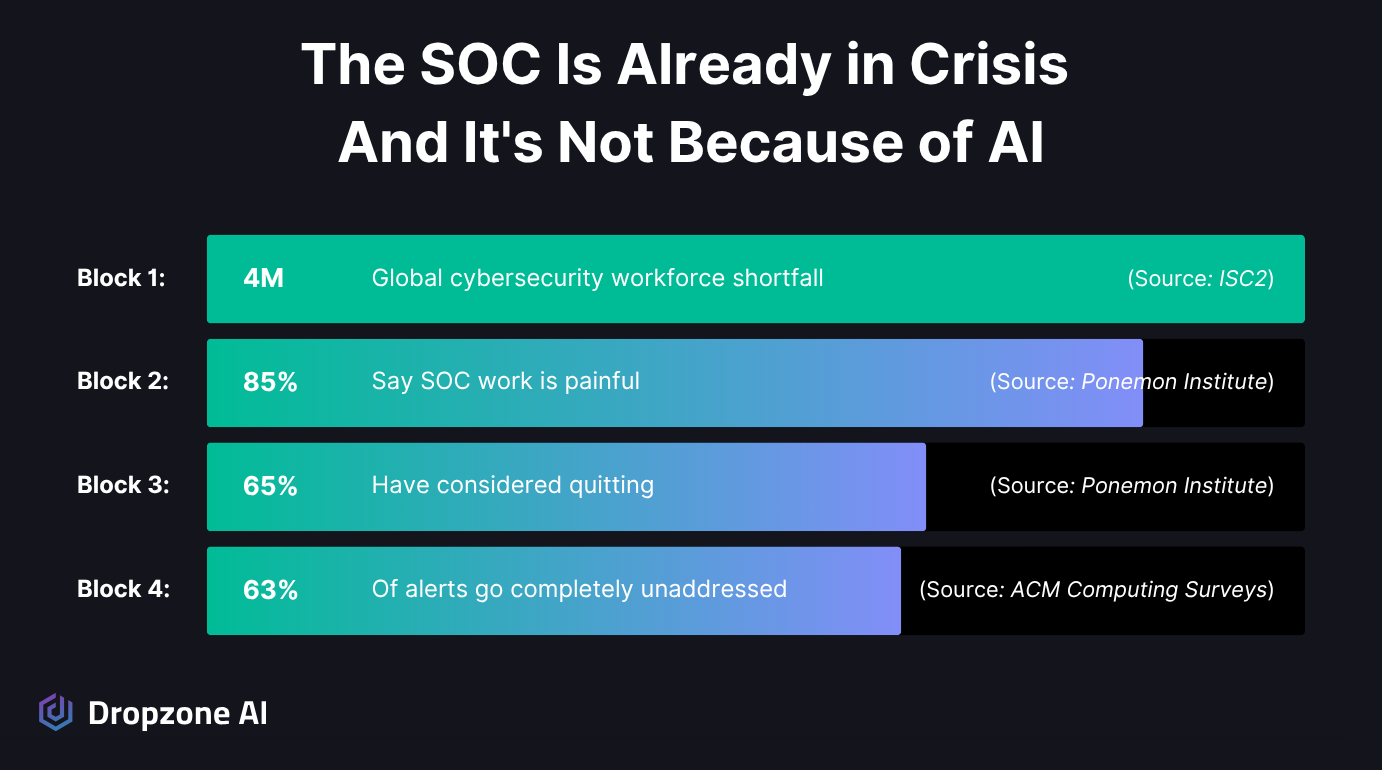

- SOC teams are already in crisis from alert fatigue: 70% of analysts with five years or less of experience leave within three years.

- In a CSA benchmark study of 148 analysts, 94% of those who used an AI SOC agent viewed AI more positively afterward, with zero detractors, and the AI-assisted group showed significant gains in speed, accuracy, and fatigue resistance.

- The Agentic SOC model keeps humans in the strategy seat while AI handles execution, elevating analysts rather than replacing them.

Introduction

When your employer rolls out a tool called "AI SOC Analyst," the room gets quiet. Is this the thing that replaces me? Is it another half-baked automation that'll dump more false positives on my desk? Both are fair questions.

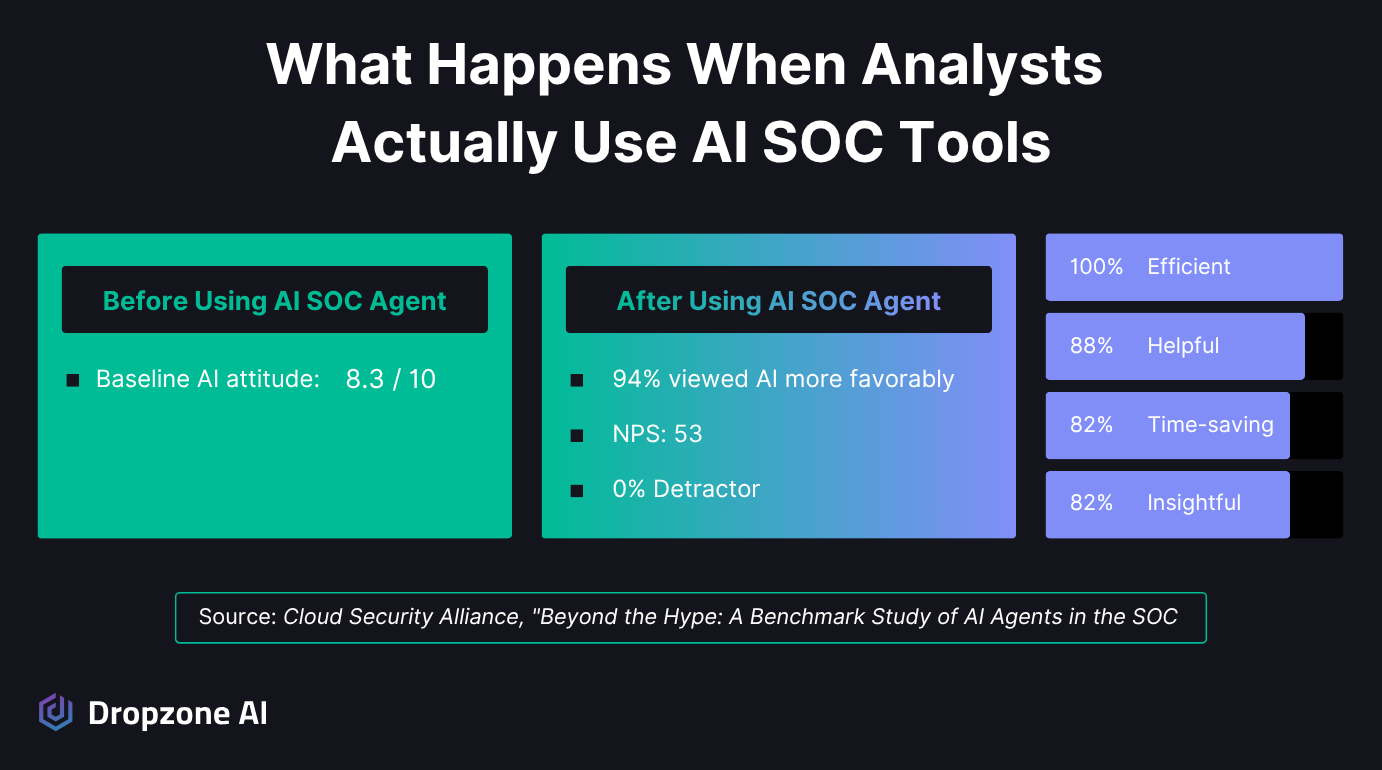

A Cloud Security Alliance study of 148 analysts found that 94% of those who actually used an AI SOC agent viewed AI in cybersecurity more positively afterward, with zero detractors. The article ahead unpacks that study, the SOC conditions that make analysts open to AI in the first place, and the human-in-strategy model that makes these deployments work.

Why Was the SOC Already in Crisis Before AI Arrived?

What's Driving SOC Burnout?

You've seen the turnover. Research published in ACM Computing Surveys found that 70% of SOC analysts with five years or less of experience leave within three years, driven by alert fatigue and repetitive investigative work. Average SOC analyst tenure sits at just over two years, and replacing an analyst takes nearly a year.

Your analysts are drowning in noise. Per the same ACM Computing Surveys research, organizations receive an average of 2,992 security alerts daily, with 63% going completely unaddressed.

That's nearly two-thirds of your alert surface going dark. The alerts that do get looked at, and the pipelines feeding them, bring problems of their own:

- 46% are false positives

- 73% of security teams name false positives as their number-one detection challenge

- 42% of SOC teams admit to dumping all incoming data into their SIEM with no plan for handling it (per the SANS 2025 SOC Survey)
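To put those percentages in daily terms, here is a rough back-of-the-envelope sketch. It assumes the 46% false-positive rate applies to the alerts that actually get reviewed, which the cited figures do not state explicitly, and the function and variable names are illustrative only.

```python
# Figures quoted in the text above.
DAILY_ALERTS = 2_992
UNADDRESSED_RATE = 0.63
# Assumption: the 46% false-positive rate applies to reviewed alerts only.
FALSE_POSITIVE_RATE = 0.46


def daily_alert_breakdown(total, unaddressed_rate, false_positive_rate):
    """Split a daily alert volume into rough buckets (rounded to whole alerts)."""
    unaddressed = round(total * unaddressed_rate)
    reviewed = total - unaddressed
    false_positives = round(reviewed * false_positive_rate)
    return {
        "unaddressed": unaddressed,
        "reviewed": reviewed,
        "false_positives": false_positives,
        "remaining": reviewed - false_positives,
    }


breakdown = daily_alert_breakdown(DAILY_ALERTS, UNADDRESSED_RATE, FALSE_POSITIVE_RATE)
for bucket, count in breakdown.items():
    print(f"{bucket:>16}: {count}")
```

Under those assumptions, roughly 1,885 alerts a day go untouched, and about 509 of the 1,107 that are reviewed turn out to be false positives.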

ECS, a top-5 MSSP in North America, ranked #4 on MSSP Alert Top 250 for 2025, is already running 30,000 alerts per month through Dropzone AI, expanding what their existing team can handle without adding headcount. Read the ECS case study.

When nearly half of what your team investigates turns out to be nothing, anything that genuinely filters the noise and reduces the grind deserves evaluation on those merits.

What Happens When SOC Analysts Actually Use AI SOC Agents?

Inside the CSA Benchmark Study: 148 Analysts, Real Investigation Data

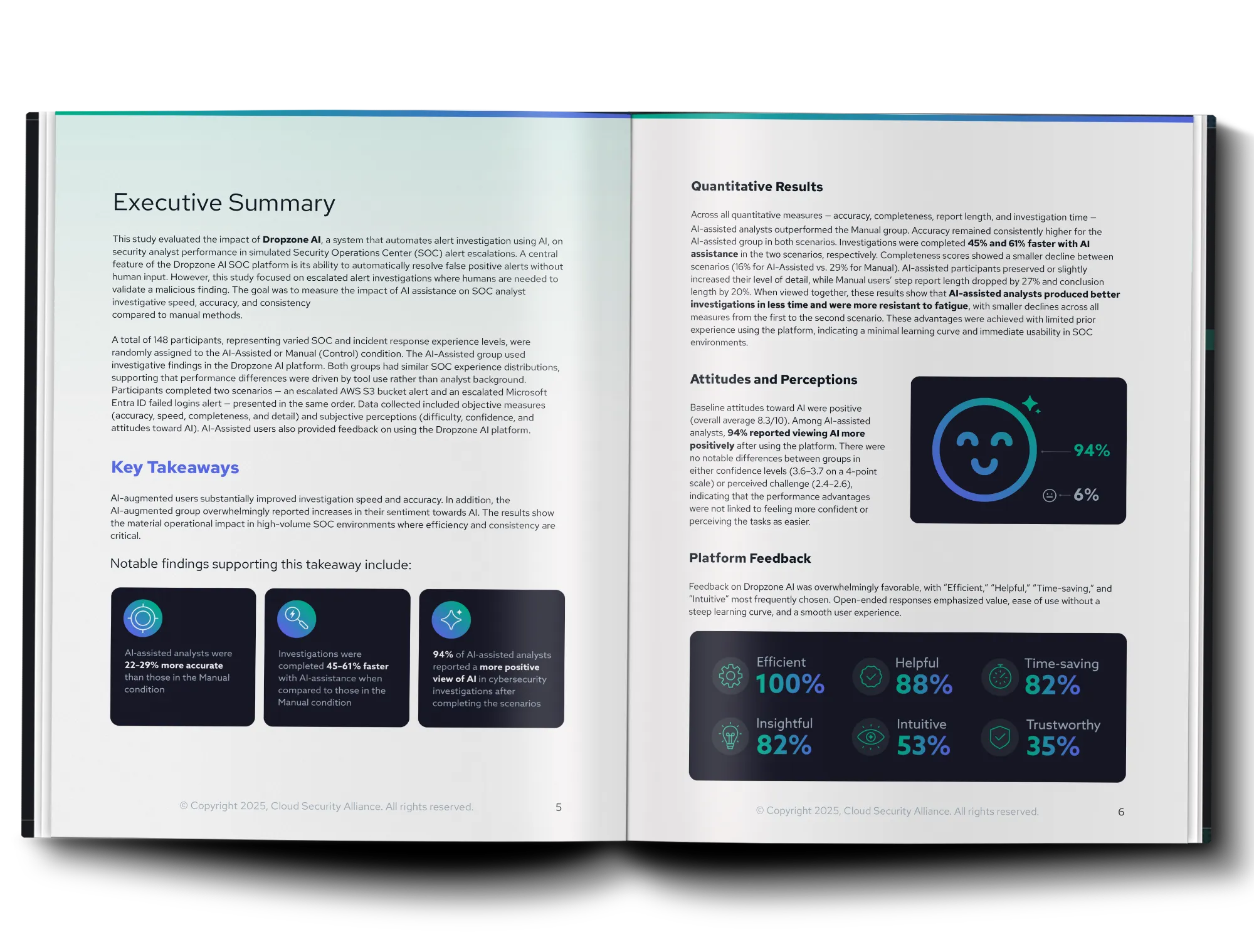

The Cloud Security Alliance ran a controlled benchmark study (commissioned by Dropzone AI) with 148 security professionals randomly assigned to AI-assisted (using Dropzone AI) or manual investigation groups.

Both groups tackled real investigation scenarios involving AWS and Microsoft Entra ID alerts, with the manual group using Amazon GuardDuty and Microsoft Sentinel.

The results before vs. after:

- Pre-study attitude toward AI: AI-assisted group rated 8.3/10

- Post-study: 94% viewed AI even more positively

- Net Promoter Score: 53 (53% Promoters, 47% Passives, zero Detractors)
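For readers unfamiliar with the metric, Net Promoter Score is the percentage of promoters minus the percentage of detractors, with passives counting toward the total only. The minimal sketch below reproduces the reported score from the published shares; the function name is illustrative, and the percentages are used as stand-in counts because the study's raw response counts are not given here.

```python
def net_promoter_score(promoters, passives, detractors):
    """Return NPS: percent promoters minus percent detractors, rounded."""
    total = promoters + passives + detractors
    if total == 0:
        raise ValueError("at least one response is required")
    return round(100 * (promoters - detractors) / total)


# Shares reported in the study: 53% promoters, 47% passives, 0% detractors.
score = net_promoter_score(promoters=53, passives=47, detractors=0)
print(score)
```

With zero detractors, the score simply equals the promoter share, which is why the NPS and the promoter percentage are both 53.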

When asked to describe the experience, participants chose:

- "Efficient": 100%

- "Helpful": 88%

- "Time-saving": 82%

- "Insightful": 82%

- "Confusing": only 12%

- "Overwhelming": only 6%

Remember, this was a controlled study where analysts used the tool for the first time and then reported how they felt.

These findings echo what deployed customers see in production. Mysten Labs cut false positives by 90-95% after deploying Dropzone, freeing their team to focus on the strategic work the CSA study flagged as absent from most analysts' days. Read the Mysten Labs case study.

Did AI-Assisted Analysts Just Work Faster, or Did They Work Better?

Both. Per the CSA benchmark study, analysts worked faster and more accurately with AI assistance.

Fatigue resistance was the more striking finding. Analysts in the manual group showed a 29% decline in completeness between the two scenarios; the AI-assisted group showed only 16%. Manual report length dropped 27% as analysts got tired; the AI-assisted analysts' report length actually increased slightly.

The takeaway: Your team's quality normally degrades over the course of a shift. AI-augmented work stays consistent.

Why Does AI Augmentation Work When Humans Stay in Strategy?

What Does the Agentic SOC Model Actually Do With Humans and Agents?

Your peers are already embracing AI while insisting on human oversight. The ISC2 2025 Cybersecurity Workforce Study found that 69% of cybersecurity professionals are engaged in AI adoption, whether integrating, testing, or evaluating it, and 73% said AI will create more specialized cybersecurity skills rather than eliminate them.

Separate ISC2 AI Pulse research found teams are taking a deliberately cautious approach to giving AI independent authority, with human oversight flagged as a central requirement. That combination of enthusiasm and caution is what a mature AI deployment looks like.

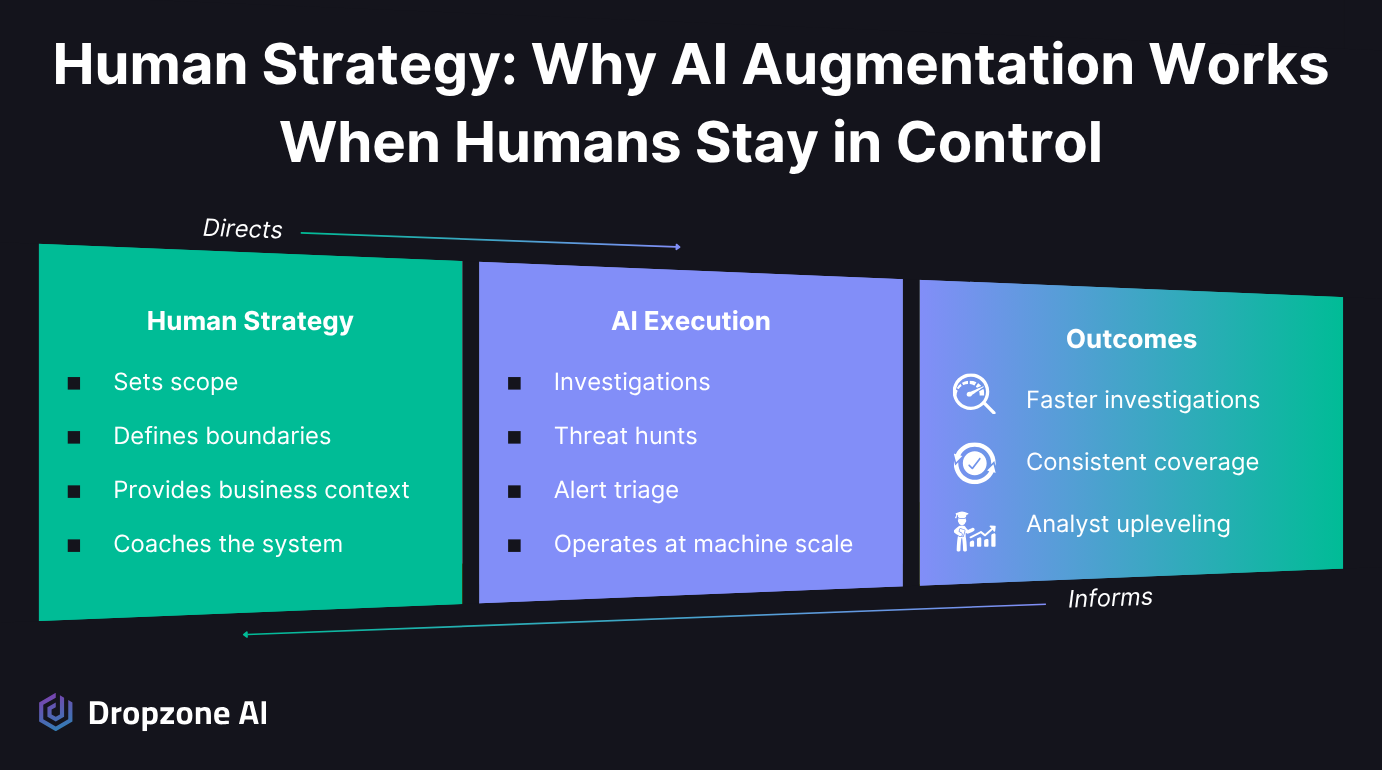

Under the agentic SOC model, humans set the strategy, defining the scope of work, authorization boundaries, and business context, while AI agents conduct investigations within those boundaries. Humans stay in the loop with full review and override capabilities, without blocking the critical path on manual approval for every action.

With Dropzone AI, your analysts coach the system using natural language instructions, custom strategies, and business context. They direct the AI the same way a senior analyst would direct a new hire: giving it investigation parameters, showing it your environment, and setting boundaries for what it can and can't do on its own.

We at Dropzone call this governed autonomy: your agents execute your strategy autonomously, like a trusted teammate that follows your direction.
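One way to picture the split between human-set boundaries and agent execution is a small policy check. The sketch below is purely illustrative and is not Dropzone AI's API or implementation: the `Policy` fields, the action names, and the `decide` function are all hypothetical. It only shows the general pattern of humans defining scope and authorization up front, with the agent acting freely inside those limits and escalating outside them.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTO_EXECUTE = "auto_execute"      # inside the boundary: agent proceeds
    NEEDS_APPROVAL = "needs_approval"  # allowed, but a human signs off first
    DENIED = "denied"                  # out of scope or not authorized


@dataclass(frozen=True)
class Policy:
    """Hypothetical human-defined strategy: scope plus authorization boundaries."""
    in_scope_sources: frozenset
    autonomous_actions: frozenset
    approval_actions: frozenset


def decide(policy, source, action):
    """Classify a proposed agent action against the human-set policy."""
    if source not in policy.in_scope_sources:
        return Decision.DENIED
    if action in policy.autonomous_actions:
        return Decision.AUTO_EXECUTE
    if action in policy.approval_actions:
        return Decision.NEEDS_APPROVAL
    return Decision.DENIED  # anything not explicitly listed is refused


# Example policy; source and action names are made up for illustration.
policy = Policy(
    in_scope_sources=frozenset({"aws", "entra_id"}),
    autonomous_actions=frozenset({"query_logs", "enrich_ip"}),
    approval_actions=frozenset({"disable_account"}),
)

print(decide(policy, "aws", "query_logs"))            # investigation step
print(decide(policy, "entra_id", "disable_account"))  # response action
print(decide(policy, "okta", "query_logs"))           # source not in scope
```

The default-deny fallthrough is a deliberate design choice in this sketch: investigation steps stay off the approval path, while anything the humans did not explicitly authorize is blocked.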

How Do SOC Roles Shift From Reactive Triage to Strategic Oversight?

The work your team does shifts; the demand for the people doing it doesn't. The WEF Future of Jobs Report 2025 lists network and cybersecurity skills as the second-fastest-growing skill category worldwide through 2030, and 85% of surveyed employers plan to prioritize upskilling their workforce over replacement hiring.

In practice, the shift looks like upleveling across the board:

- Tier 1 analysts take on Tier 2 responsibilities

- Senior analysts recover time for hunt direction, detection engineering, and threat modeling

According to the same ACM research, 75% of analysts currently lack time for strategic work such as threat hunting, and AI augmentation gives that time back. For a longer view of where this leads, Dropzone's Peek Into 2030 walks through a near-future SOC day: engineers coaching AI agents, tuning context memory, and leading threat modeling instead of grinding alerts.

The question for your team isn't whether roles will evolve. They will. The question is whether your analysts spend their time on repetitive alert triage or on the strategic security work they actually signed up for.

Conclusion

SOC analysts embrace AI tools when they actually use them, because those tools attack the burnout and alert fatigue the industry hasn't solved in years. The deployments that work keep humans in the strategy seat, directing AI that handles execution within defined boundaries.

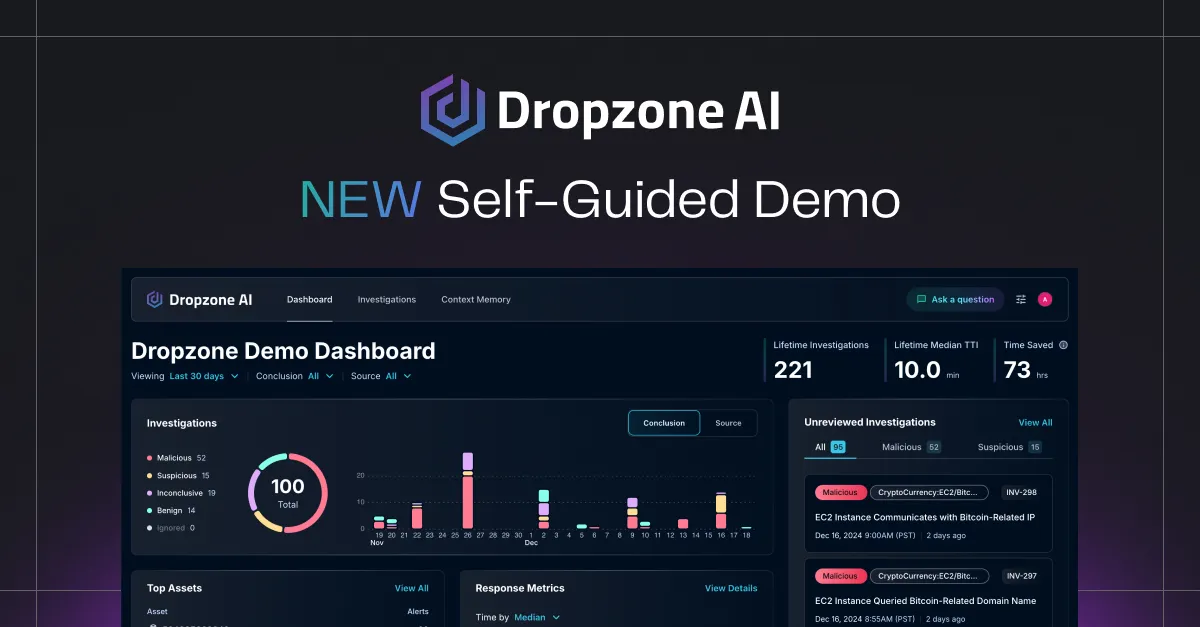

Want to see an AI SOC agent investigate a real alert? Jump into the Dropzone self-guided demo and walk through a live investigation on your own.