You've seen the AI threat hunting demos. Type a hypothesis into a chat interface, and the tool suggests a security information and event management (SIEM) query. You review it, run it, read the summary, interpret what it means. Repeat for the next data source.

Two hours shaved off a 15-hour hunt isn't nothing. But you're still in the loop at every step. The AI makes you faster. It doesn't change what's actually constraining the program.

The 2025 SANS SOC Survey points the same way: 48% of teams described their hunting as partially automated using vendor-provided tools, and the survey found that what's labeled "threat hunting" often amounts to retroactive analysis rather than true hypothesis-driven investigation.

There's a different model where AI agents execute the hunt end-to-end and you get a finding at the other end. The distinction between a copilot that speeds up your workflow and an agentic system that runs the hunt autonomously determines the ceiling of your program: how many hunts you can run, how much analyst time each one consumes, and what becomes possible when the cost-per-hunt drops significantly.

What Are the Three Models of AI in Threat Hunting?

The industry is converging on three tiers for how AI participates in threat hunting.

Tier 1: AI-assisted (the copilot model). AI collaborates at each step:

- Recommends queries based on the hypothesis the analyst provides

- Flags anomalies in data the analyst pulls from each source

- Summarizes results once they come in, saving the analyst write-up time

The analyst reviews and approves each move before work advances. Human-paced, human-directed, human-throughput-constrained.

Tier 2: AI-augmented. AI doesn't just suggest next steps; it enhances the analyst's judgment with capabilities that weren't previously available:

- Behavioral pattern detection across datasets too large for manual review

- Anomaly scoring that surfaces what the analyst should look at first

- Cross-source enrichment that adds context the analyst would otherwise hunt for manually

The analyst still drives the hunt, but their decision-making is sharper because the AI is surfacing things no human could process alone.
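To make "anomaly scoring" concrete, here is a minimal sketch that ranks hosts by how far their event counts sit from the fleet average. The z-score is a stand-in chosen for illustration, not a description of how any particular product scores anomalies, and the host names and counts are invented.

```python
from statistics import mean, pstdev


def rank_by_anomaly(counts: dict[str, int]) -> list[tuple[str, float]]:
    """Return (host, z-score) pairs, most anomalous first."""
    mu = mean(counts.values())
    sigma = pstdev(counts.values())
    if sigma == 0:
        # Every host looks identical, so nothing stands out.
        return [(host, 0.0) for host in counts]
    scored = [(host, (count - mu) / sigma) for host, count in counts.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical remote-process-creation counts per host over the hunt window.
ranked = rank_by_anomaly({"ws-01": 2, "ws-02": 3, "srv-09": 40})
```

The analyst still decides what the top-ranked host means. The score only settles where to look first.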

Tier 3: Agentic (agent-executed). This is the model shift. The analyst defines the hypothesis. AI agents execute every step between hypothesis and finding:

- Query data sources across the full tool stack simultaneously

- Pull and enrich telemetry with threat intelligence context

- Correlate across sources to identify behavioral patterns and coverage gaps

The analyst receives a finding and decides what to do next. Their active work is at the beginning and the end. The execution layer in the middle runs at agent speed.

Under the hood, agentic hunting follows a five-stage flow once the analyst submits a hypothesis:

- Hypothesis intake. The agent parses the hypothesis and identifies the attack technique, timeframe, and scope.

- Data source targeting. The agent maps which sources contain relevant evidence: process telemetry, authentication logs, network flows, threat intelligence feeds.

- Federated query execution. Queries run across all targeted sources simultaneously. No sequential approval cycle.

- Cross-source correlation. The agent evaluates results across sources, flags behavioral patterns consistent with the technique, and identifies coverage gaps.

- Decision-ready output. The analyst receives the result: technique assessed, sources queried, timeframe, evidence status, and gaps noted.
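The five stages above can be sketched in a few dozen lines. This is an illustration under stated assumptions, not how any vendor implements it: `Hypothesis`, `SOURCES_BY_TECHNIQUE`, `query_source`, and `run_hunt` are hypothetical names, and the connector is a stub that returns no hits. What the sketch shows is the shape of the flow: sources are targeted from the technique, queried concurrently rather than one approval at a time, and anything unreachable is reported as a coverage gap.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class Hypothesis:
    technique_id: str   # e.g. a MITRE ATT&CK technique ID
    lookback_days: int
    scope: str


# Hypothetical mapping from technique to the source types worth querying.
SOURCES_BY_TECHNIQUE = {
    "T1047": ["siem", "edr", "identity", "cloud_workload"],
}


async def query_source(source: str, hyp: Hypothesis) -> dict:
    """Stub connector: a real one would call the platform's API."""
    await asyncio.sleep(0)  # placeholder for network I/O
    return {"source": source, "hits": []}


async def run_hunt(hyp: Hypothesis, available: set[str]) -> dict:
    # Stage 2: decide which sources should hold evidence for the technique.
    targeted = SOURCES_BY_TECHNIQUE.get(hyp.technique_id, [])
    reachable = [s for s in targeted if s in available]
    gaps = [s for s in targeted if s not in available]
    # Stage 3: query every reachable source concurrently.
    results = await asyncio.gather(*(query_source(s, hyp) for s in reachable))
    # Stage 4: pool the evidence (real correlation logic would go here).
    hits = [hit for result in results for hit in result["hits"]]
    # Stage 5: decision-ready output.
    return {
        "technique": hyp.technique_id,
        "timeframe_days": hyp.lookback_days,
        "sources_queried": reachable,
        "evidence_found": bool(hits),
        "coverage_gaps": gaps,
    }
```

Calling `asyncio.run(run_hunt(Hypothesis("T1047", 30, "all Windows hosts"), {"siem", "edr", "identity"}))` returns a result that lists the three queried sources and flags `cloud_workload` as a gap.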

Any agents that execute this flow need access to the tools already in the analyst's stack, which is why vendor-agnostic integration depth matters. Agents that only query a single platform will miss what lives in the others.

The capacity leap is from Tier 1 to Tier 3. In the copilot model, hunt volume is still gated by analyst time. In the agentic model, agents run execution independently. Analysts aren't removed from the process; they're repositioned within it.

What does the copilot model look like in practice?

A senior threat hunter works a hypothesis: lateral movement via Windows Management Instrumentation (WMI) over the last 30 days. She types the hypothesis in. The tool suggests three SIEM queries. She reviews them, adjusts one, runs all three. The AI summarizes results. She correlates what she sees with what she knows about the environment and decides whether to pivot.

Two to three hours saved off a full-day hunt. She still made every decision about what to query and what the results mean.

What does the agentic model look like in practice?

Same hypothesis. This time, she types it in natural language. AI agents parse the hypothesis, identify the relevant technique (WMI execution, MITRE ATT&CK T1047), target relevant data sources, and execute queries across the SIEM platform, endpoint detection and response (EDR) telemetry, and identity provider logs simultaneously. No manual query-writing. No source-by-source approvals.

What comes back is a structured finding: "No indicators consistent with WMI-based lateral movement identified. Coverage gaps noted: cloud workload logs not queried (not available via current integration). SIEM and EDR coverage: complete."
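A finding like this is easier to act on, and to compare across hunts, when it is structured data first and a sentence second. The sketch below is one way to model it. The `Finding` class and its field names are assumptions made for illustration, not a documented schema.

```python
from dataclasses import dataclass, field


@dataclass
class Finding:
    technique: str
    indicators_found: bool
    sources_complete: list[str]
    # Maps each source that was not queried to the reason it was skipped.
    gaps: dict[str, str] = field(default_factory=dict)

    def summary(self) -> str:
        verdict = "Indicators" if self.indicators_found else "No indicators"
        parts = [f"{verdict} consistent with {self.technique} identified."]
        if self.gaps:
            gap_text = "; ".join(
                f"{source} not queried ({reason})"
                for source, reason in self.gaps.items()
            )
            parts.append(f"Coverage gaps noted: {gap_text}.")
        if self.sources_complete:
            covered = " and ".join(self.sources_complete)
            parts.append(f"{covered} coverage: complete.")
        return " ".join(parts)


finding = Finding(
    technique="WMI-based lateral movement",
    indicators_found=False,
    sources_complete=["SIEM", "EDR"],
    gaps={"cloud workload logs": "not available via current integration"},
)
```

Here `finding.summary()` reproduces the sentence quoted above, while the `gaps` field stays available for tracking which gaps have been closed before the next hunt.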

She reads the finding and decides whether the coverage gap is worth flagging. Then she moves to the next hypothesis. Her active time was at the beginning and the end. Agents queried across the existing tool stack, leveraging 90+ integrations, without new data infrastructure or log normalization.

How AI Threat Hunting Compression Changes What's Possible for Your SOC

If a hypothesis-driven hunt costs up to 40 hours of analyst time, rationing hunts isn't a strategy failure. It's a rational response to a real constraint. You have two experienced hunters. Each hunt takes days. Running more than a handful per quarter isn't realistic while keeping up with the alert queue.

The speed gains are already measurable even at the assisted tier. In a 2025 Cloud Security Alliance benchmark study, AI-assisted analysts completed SOC investigations 45% to 61% faster than analysts using manual methods and maintained higher accuracy across consecutive cases. Agentic execution pushes the compression further because analysts aren't in the loop at every step.

Dropzone's AI Threat Hunter, coming in Summer 2026, is designed to compress up to 40 hours of manual hunting to roughly one hour. If that compression holds in practice, the constraint changes. The bottleneck shifts from "do we have analyst time available to run this hunt?" to "do we have a hypothesis worth testing?"

A team that previously ran 10 to 15 hunts per quarter could then run that many per week. The count matters less than what it enables:

- Posture confirmation. Each clean hunt confirms that your SIEM coverage for that technique class is complete, or that your EDR telemetry reaches the endpoints you thought it did.

- Detection validation. Negative results build confidence. You're not guessing your controls work; you've tested them against a specific threat model.

- Faster gap closure. The finding tells you exactly what data was missing and why. Gaps get closed before the next hunt runs.

- Low-signal hypotheses get tested. When analyst time is the bottleneck, teams prioritize hunts they know are high-yield. When agent execution drops the cost to an hour, the calculus for testing a lower-confidence hypothesis changes.
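The throughput shift is simple arithmetic, and it helps to run the numbers for your own team. The sketch below assumes two hunters who can each give 20 hours a week to hunting over a 13-week quarter. Those inputs are illustrative, not figures from any survey, and the 40-hour and one-hour costs are the upper-bound and design-target numbers quoted earlier.

```python
def hunts_per_quarter(
    analysts: int,
    hunt_hours_per_week: float,
    hours_per_hunt: float,
    weeks: int = 13,
) -> int:
    """Whole hunts that fit in the quarter's analyst-hour budget."""
    budget = analysts * hunt_hours_per_week * weeks
    return int(budget // hours_per_hunt)


# Assumed team: 2 hunters, 20 hunting hours each per week.
manual = hunts_per_quarter(2, 20, hours_per_hunt=40)   # 520 h / 40 h = 13
agentic = hunts_per_quarter(2, 20, hours_per_hunt=1)   # 520 h / 1 h = 520
```

Under these assumptions the ceiling moves from 13 hunts a quarter to 520, or 40 a week. In practice the realized number would be lower, because the limit becomes how many hypotheses worth testing the team can generate rather than how many hours it has.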

Detection maturity builds through repetition, and repetition becomes feasible when execution scales. That's the argument for frequency as a lever, and it's the argument to make to your manager when the question is whether this kind of tooling justifies the investment.

What Happens to the Threat Hunter's Role in an Agentic SOC?

The skill that makes a threat hunter valuable isn't writing SIEM queries quickly or correlating logs across four tabs. Those are execution skills. What actually determines program quality is the mental model of attacker behavior: what technique an adversary would use in this environment, what evidence it would leave behind, and what it means when the evidence is absent.

AI agents execute the test. They don't originate the hypothesis or the attacker-behavioral intuition behind it. The strategic layer stays human by necessity. What shifts is where analysts spend their time:

- Before: 80% on queries and correlation, 20% on hypothesis development and threat modeling

- After: Agents handle execution. Analysts focus on hypothesis quality, threat model development, and acting on what agents surface

In the broader agentic SOC model, this is the organizing principle: AI agents execute detection and response work; analysts direct strategy. Threat hunting is one of the clearest examples of where that shift is practical rather than theoretical.

Where to Go from Here

The question isn't whether AI will change threat hunting. It already has. The question is whether your program is still operating under copilot-era constraints while the tooling has moved to agentic execution.

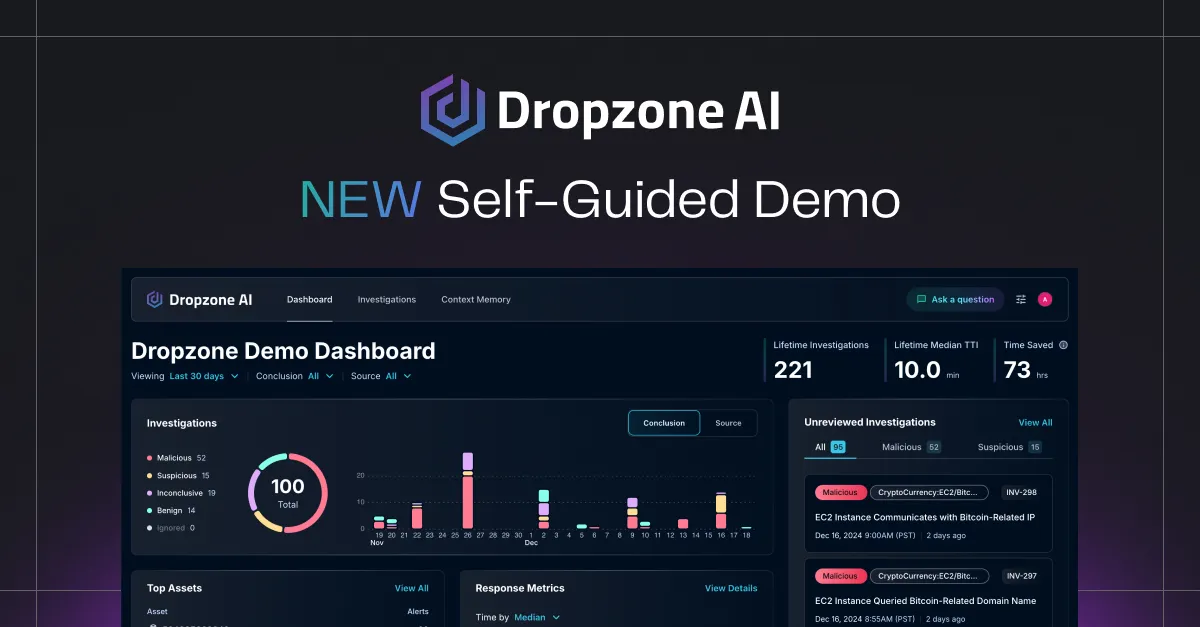

If you want to see how federated, hypothesis-driven hunts work across your existing tool stack, schedule a demo or try the self-guided demo to explore it on your own.
