The SOC Manager's Dilemma

You ran three hunts last quarter. All three came back clean. No confirmed indicators. No escalations. Nothing to show the CISO except a timesheet.

Then the question arrives: "We're spending analyst hours on this program. What is it producing?"

It's a fair question with an unfair frame. The assumption underneath it is that a hunt only produces value when it finds a threat. That assumption is wrong, and it's quietly undermining threat hunting programs that are working exactly as intended.

The problem isn't your program. The problem is your reporting.

Every hunt, regardless of outcome, produces at least two of three distinct value outputs. A hunt that finds a confirmed threat produces all three. The mistake most programs make is reporting only one: confirmed findings. The other two outputs, security posture validation and detection calibration, are being generated, then discarded. They never make it into a leadership conversation.

The fix isn't running better hunts. It's recognizing what your hunts are already telling you and translating that into language your organization can act on.

That starts with understanding what a clean hunt actually is.

Why a Clean Hunt Isn't a Failed Hunt

A threat hunt has one job: answer a question about your environment. The question takes the form of a hypothesis: "Are there indicators of lateral movement consistent with [adversary technique X] in our environment?" The hunt then tests that hypothesis against your telemetry.

When the answer comes back negative, that's a valid answer. It's not a null result. "No indicators consistent with this threat model were found" is a complete sentence with a real meaning.

The framing problem happens when teams report that result as "we found nothing" instead of "we tested this hypothesis and our controls held." One version sounds like failure. The other is a security statement.

The shift changes the entire value conversation. Instead of "did you catch anyone?", the question becomes "what did you learn about your security posture?" Those are fundamentally different conversations, and only one of them accurately reflects the work that was done.

What does a "clean hunt" actually prove?

A clean result proves specific things. Not everything, but specific, reportable things:

- Your detection controls worked as designed against the tested threat model. If the hypothesis was testing for a known lateral movement technique and no indicators appeared, your controls for that technique are functioning.

- Your telemetry coverage was sufficient for the hypothesis tested. If you could execute the hunt, that means the data sources you needed were available, ingested, and queryable. That's a coverage confirmation, not a given.

- Your environment shows no indicators consistent with the adversary behavior you were hunting. As of this hunt, and within the telemetry you searched, there's no evidence this threat class is present.

Each of these is a standalone, reportable security posture data point. None of them require a confirmed threat to have value. All of them belong in your program reporting.

Three Value Outputs That Define Threat Hunting ROI

Here's the framework. Every hunt produces outputs that fall into one or more of these three categories.

Output 1: Confirmed threat findings (the expected output)

This is the output everyone recognizes and the only one most programs formally report. Hunt found something. Analyst investigates. Case is opened or closed. This is important. But it's one metric, not the whole scorecard. Treating confirmed findings as the only measure of program value means a program that runs rigorous, frequent hunts in a clean environment will always look like it's underperforming. That's not a program failure. It's a measurement failure.

Output 2: Security posture validation

When a hunt tests a hypothesis and finds no attacker activity consistent with that hypothesis, you've produced a posture validation result. That result is reportable.

The deliverable looks like this: "We hunted for [threat class X] using [data sources Y and Z]. No indicators found. Controls validated against this technique."

For leadership, that translates to risk reduction language: "We've confirmed the attack surface for this lateral movement technique is covered by our current detection stack." That's a concrete security statement. It belongs in a quarterly report, a board deck, or an audit response.

Programs that run structured hunts and capture posture validation statements are building a documented record of what they've tested, when, and what the controls confirmed. That record is independent value from the hunting program, separate from any confirmed finding.

Output 3: Detection calibration and coverage mapping

This is the output that gets left on the floor most often, and it's arguably the most operationally useful one.

Every hunt surfaces operational data about your detection environment:

- Telemetry gaps. Data sources that were unavailable or not ingested for the hypothesis tested.

- Query failures. Queries that couldn't complete because a data source wasn't integrated.

- Missing log fields. Specific fields or event types absent from sources that should have contained them.

- Noisy correlation rules. Rules that produced too much noise to be useful for the technique being hunted.

Those aren't obstacles to the hunt. They're findings from the hunt.

The deliverable from Output 3 is a detection coverage map: a list of gaps identified during the hunt, prioritized by threat model relevance, with recommended actions. "We attempted to query [data source] for this technique and found it isn't currently integrated. Integrating it would cover [adversary technique] more completely."

For leadership: "We identified three detection coverage gaps during this hunt. Here's the remediation plan." That's a program improvement roadmap derived directly from hunting activity. It drives investment decisions even when no threats are found.
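A detection coverage map can be as simple as a sorted list. The sketch below is one minimal way to hold it in Python; the `CoverageGap` fields, the 1-5 relevance score, and the sample gaps are all illustrative assumptions, not a prescribed schema.

```python
from dataclasses import dataclass


@dataclass
class CoverageGap:
    description: str          # what the hunt could not see or query
    kind: str                 # "telemetry", "query", "field", or "noise"
    relevance: int            # assumed 1-5 score of threat model relevance
    recommended_action: str   # what would close the gap


def build_coverage_map(gaps):
    """Order gaps from most to least relevant to the threat model."""
    return sorted(gaps, key=lambda g: g.relevance, reverse=True)


# Invented sample data for illustration only.
gaps = [
    CoverageGap("DNS logs not ingested", "telemetry", 3,
                "Onboard DNS logs to the SIEM"),
    CoverageGap("Command line missing from endpoint events", "field", 5,
                "Enable command-line logging"),
    CoverageGap("Lateral movement rule too noisy to use", "noise", 4,
                "Tune the rule thresholds"),
]

coverage_map = build_coverage_map(gaps)
for rank, gap in enumerate(coverage_map, start=1):
    print(f"{rank}. [{gap.kind}] {gap.description} -> {gap.recommended_action}")
```

Sorting by relevance keeps the leadership summary short: the top few rows are the remediation plan.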

Output 3 is what separates a mature threat hunting program from one that's going through the motions. The program that captures and acts on coverage gaps is improving continuously. The program that only reports confirmed findings only knows it's working when something bad happens.

How to Report Hunt Value to Leadership (Even When You Find Nothing)

The three outputs only translate to program investment if you communicate them in business-relevant language. A posture validation statement buried in an internal Confluence page isn't a leadership artifact. It needs to be formatted for the conversation you're having.

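A simple reporting table works. For each hunt, capture the hypothesis tested, the data sources used, the result, the posture validation statement, any coverage gaps identified, and the next action.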

This table makes three things visible: what you tested, what you confirmed, and where the program needs to improve. The Chief Information Security Officer (CISO) or VP seeing this table isn't looking at a list of null results. They're looking at a documented security posture review and a prioritized improvement backlog.
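One row of such a report could be captured as a small record. This is a sketch under stated assumptions: the `HuntReport` class, its field names, and the sample values are hypothetical, and the statement wording follows the posture validation deliverable described earlier.

```python
from dataclasses import dataclass, field


@dataclass
class HuntReport:
    hypothesis: str                 # threat class or technique tested
    data_sources: list              # tools queried during the hunt
    threat_confirmed: bool          # Output 1
    coverage_gaps: list = field(default_factory=list)  # Output 3
    next_action: str = ""

    def posture_statement(self):
        """Render the leadership-facing statement (Output 2)."""
        sources = " and ".join(self.data_sources)
        if self.threat_confirmed:
            outcome = "Indicators found. Investigation opened."
        else:
            outcome = ("No indicators found. "
                       "Controls validated against this technique.")
        return f"We hunted for {self.hypothesis} using {sources}. {outcome}"


# Invented sample values for illustration only.
report = HuntReport(
    hypothesis="lateral movement via remote service creation",
    data_sources=["SIEM", "EDR"],
    threat_confirmed=False,
    coverage_gaps=["DNS logs not ingested"],
    next_action="Onboard DNS logs",
)
print(report.posture_statement())
```

Keeping the statement as a method means a clean hunt and a confirmed finding are reported from the same record, so neither outcome is discarded.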

This reporting gap is pervasive. The 2025 SANS SOC Survey found that 69% of SOCs still rely on manual or mostly manual processes to report metrics. When the reporting itself is manual, the three-output framework rarely makes it into a leadership conversation.

The common mistake is reporting hunt activity (how many hypotheses were tested, how many analyst hours were spent) rather than hunt outcomes (what was validated, what gaps were found, what the next action is). Activity metrics show that the program ran. Outcome metrics show what it produced.

What metrics should a threat hunting program track?

The right metrics capture all three output types. A strong hunting program tracks:

- Hypotheses tested per quarter. The activity baseline that shows the program is running.

- Threat classes validated. The posture validation output. How many distinct threat classes has your environment been tested against this quarter? This number should grow.

- Detection gaps identified and closed. The calibration output. How many coverage holes did hunts surface? How many have been remediated? The close rate matters as much as the count.

- Time-to-close on identified gaps. A quality metric for the program. Gaps found but never closed aren't improvements; they're deferred risk.

- Threats confirmed. The traditional metric. One data point among several, not the only one.

Programs that measure only confirmed threats will always look like they're underperforming in a low-threat environment. Programs that measure all three outputs show continuous, compounding value.
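The metrics above can be computed from two simple lists, one of hunts and one of gaps. The following is a rough sketch, assuming hypothetical record shapes and invented sample data; a real program would pull these from its case or ticketing system.

```python
from datetime import date

# Invented quarter of records for illustration only.
hunts = [
    {"threat_class": "lateral movement", "confirmed": False},
    {"threat_class": "credential dumping", "confirmed": False},
    {"threat_class": "lateral movement", "confirmed": True},
]
gaps = [
    {"opened": date(2025, 1, 10), "closed": date(2025, 1, 24)},
    {"opened": date(2025, 2, 3), "closed": None},  # still open
    {"opened": date(2025, 2, 17), "closed": date(2025, 3, 3)},
]


def program_metrics(hunts, gaps):
    """Summarize activity, posture validation, calibration, and findings."""
    closed = [g for g in gaps if g["closed"] is not None]
    days_to_close = [(g["closed"] - g["opened"]).days for g in closed]
    return {
        "hypotheses_tested": len(hunts),
        # distinct threat classes the environment was tested against
        "threat_classes_validated": len({h["threat_class"] for h in hunts}),
        "gaps_identified": len(gaps),
        "gaps_closed": len(closed),
        "gap_close_rate": len(closed) / len(gaps) if gaps else 0.0,
        "avg_days_to_close": (sum(days_to_close) / len(days_to_close)
                              if days_to_close else None),
        "threats_confirmed": sum(h["confirmed"] for h in hunts),
    }


metrics = program_metrics(hunts, gaps)
for name, value in metrics.items():
    print(f"{name}: {value}")
```

Note that confirmed threats end up as one key among seven, which matches the weighting the framework argues for.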

For a deeper look at the specific KPIs and measurement frameworks that support these categories, see the complete guide to threat hunting program metrics.

How AI Changes the ROI Math for Threat Hunting

The three-output framework changes what a clean hunt is worth. But there's a second variable that changes the program economics just as significantly: how long a hunt takes.

Manual threat hunting is expensive. A thorough, federated hunt, executed by a human analyst across your Security Information and Event Management (SIEM) system, Endpoint Detection and Response (EDR) platform, and other connected tools, takes 10-20 hours of work. Not calendar time. Analyst time.

At that cost, most programs ration hunts. Monthly, if the team is disciplined. Quarterly, if capacity is tight. The program's posture validation and calibration output accumulates slowly because frequency is a constraint, not a choice.

When AI agents execute the federated search phase of a hunt, that 10-20 hours of work compresses to approximately one hour. The analyst defines the hypothesis and reviews findings. The agents execute the search across the tool stack simultaneously, correlate results, and surface indicators ranked by confidence. The analyst's judgment drives the strategy. AI handles the execution. A 2025 Cloud Security Alliance benchmark study of 148 analysts found that AI-augmented investigators completed SOC cases 45 to 61% faster than manual methods, with significantly less quality degradation as cases accumulated.

At one hour per hunt instead of 10-20, frequency becomes a lever. Programs that previously ran 12-15 hunts per year can run continuous, targeted hunts against the threat hypotheses that matter most right now. Each hunt adds a posture validation statement to the record and a coverage gap to the remediation backlog. That value accumulates not quarterly, but continuously.
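The arithmetic behind that shift can be worked through with the figures quoted above. This sketch assumes the analyst-hour budget stays constant and that roughly one hour covers the whole hunt; any extra time for hypothesis definition or review would lower the totals.

```python
# Figures taken from the text above.
MANUAL_HOURS_PER_HUNT = (10, 20)  # low and high estimate
HUNTS_PER_YEAR = (12, 15)         # previous cadence, low and high
AI_HOURS_PER_HUNT = 1             # "approximately one hour"

# Analyst hours the manual program already spends per year.
low_budget = HUNTS_PER_YEAR[0] * MANUAL_HOURS_PER_HUNT[0]
high_budget = HUNTS_PER_YEAR[1] * MANUAL_HOURS_PER_HUNT[1]

# Hunts the same hours would fund at about one hour each.
low_hunts = low_budget // AI_HOURS_PER_HUNT
high_hunts = high_budget // AI_HOURS_PER_HUNT

print(f"Manual program: {low_budget}-{high_budget} analyst hours per year")
print(f"Same hours at ~1 hour per hunt: {low_hunts}-{high_hunts} hunts per year")
```

Under those assumptions, a budget that funded 12-15 hunts per year funds somewhere between 120 and 300.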

The ROI math isn't just "more hunts." It's this: when each hunt produces documented posture validation and actionable calibration data, and you can run that hunt at machine scale rather than at analyst capacity, the program builds a security posture record that would have taken years to accumulate manually. For a broader look at how AI changes what proactive hunting looks like in the SOC, see this guide on proactive threat hunting.

What does AI-augmented threat hunting look like in production?

The operating model is straightforward: the analyst defines the hypothesis. AI agents execute the hunt.

Specifically, the agents query across your full tool stack simultaneously, pulling from your SIEM, EDR, and other connected data sources in parallel. They correlate results across tools, identify indicators consistent with the tested hypothesis, and return findings ranked by confidence for analyst review. The analyst reviews what was found, makes investigation and response decisions, and defines the next hypothesis.
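The fan-out, correlate, and rank steps can be illustrated with a toy sketch. Everything here is an assumption for illustration: the connector functions are stubs returning invented data, the fixed confidence boost is a stand-in for real correlation logic, and none of it represents any vendor's actual implementation.

```python
from concurrent.futures import ThreadPoolExecutor


# Stub connectors standing in for real SIEM and EDR queries (hypothetical).
def query_siem(hypothesis):
    return [{"source": "SIEM", "host": "ws-14",
             "indicator": "remote service created", "confidence": 0.4}]


def query_edr(hypothesis):
    return [
        {"source": "EDR", "host": "ws-14",
         "indicator": "unsigned binary launched", "confidence": 0.7},
        {"source": "EDR", "host": "db-02",
         "indicator": "admin share access", "confidence": 0.2},
    ]


CONNECTORS = [query_siem, query_edr]


def run_hunt(hypothesis):
    # Query every connected tool in parallel.
    with ThreadPoolExecutor(max_workers=len(CONNECTORS)) as pool:
        batches = list(pool.map(lambda query: query(hypothesis), CONNECTORS))
    findings = [f for batch in batches for f in batch]

    # Naive correlation: a host flagged by more than one tool gets a boost.
    sources_by_host = {}
    for f in findings:
        sources_by_host.setdefault(f["host"], set()).add(f["source"])
    for f in findings:
        if len(sources_by_host[f["host"]]) > 1:
            f["confidence"] = round(min(1.0, f["confidence"] + 0.2), 2)

    # Highest-confidence findings first, ready for analyst review.
    return sorted(findings, key=lambda f: f["confidence"], reverse=True)


results = run_hunt("lateral movement via remote service creation")
for f in results:
    print(f["confidence"], f["source"], f["host"], f["indicator"])
```

The hypothesis string is the only input, which mirrors the division of labor: the analyst supplies the question, and the search runs without them.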

The analyst is directing strategy. AI is executing search. That division of labor is the point. The analyst's expertise, intuition, and judgment are what make the hypothesis worth testing. Removing the analyst from hypothesis formation would remove the value of the hunt. Removing the analyst from the repetitive, time-consuming federated search phase is what changes the economics.

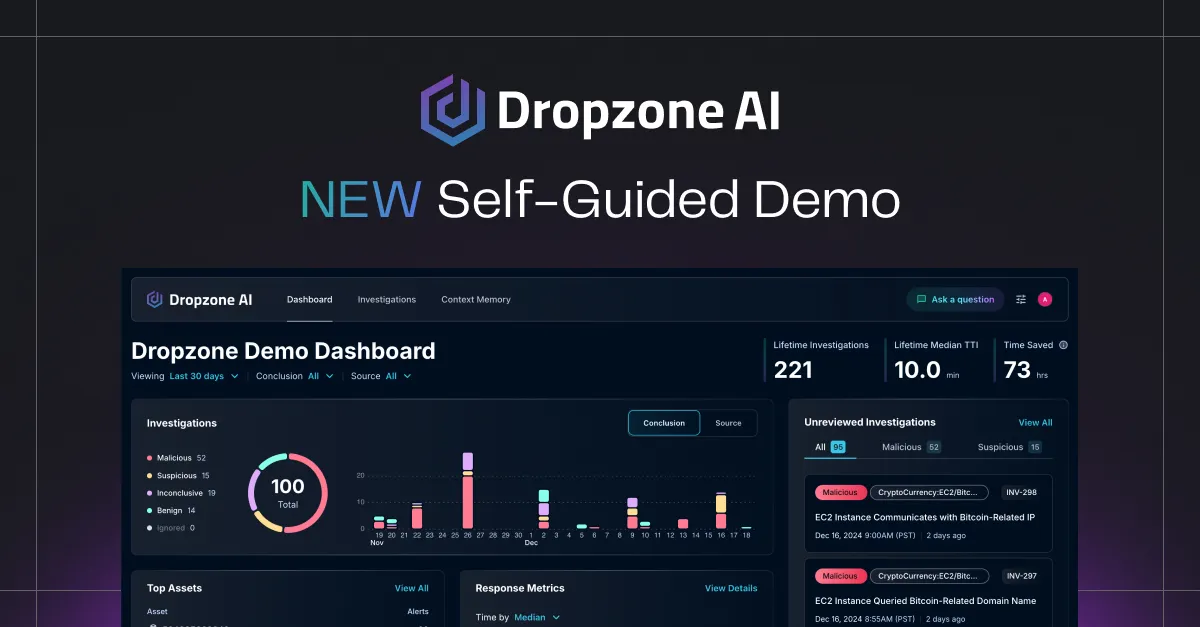

Dropzone AI's AI Threat Hunter, set to launch in Q2 2026, is built around this model. AI agents execute federated, hypothesis-driven hunts across the connected tool stack. The analyst receives surfaced findings ranked by confidence. All of the search work. None of the waiting.

Dropzone AI is a Gartner Cool Vendor for the Modern SOC, deployed in 300+ production environments. The AI Threat Hunter coming in Q2 2026 extends that same agent architecture to proactive hunting.

Key Takeaways

- A clean hunt isn't a null result. It's one of three reportable output types that every hunt produces.

- The three outputs are: confirmed threat findings, security posture validation, and detection calibration and coverage mapping.

- Programs that report only confirmed threats will always look like they're underperforming in a low-threat environment. That's a measurement problem, not a program failure.

- A posture validation statement, properly formatted, is a concrete security deliverable. It belongs in quarterly reports, board decks, and audit responses.

- Detection calibration output from clean hunts builds a prioritized improvement roadmap. Coverage gaps found and closed are program improvements, not failed hunts.

- The reporting framework that matters: translate all three outputs into business language before the leadership conversation.

- When AI agents execute the federated search phase, the 10-20 hours of manual hunt work compresses to approximately one hour. Frequency shifts from a constraint to a choice.

- At machine-scale frequency, posture validation and calibration data accumulate continuously. The ROI case for a proactive hunting program changes entirely.
