Threat hunting metrics are how security teams prove that hunting works and secure the resources to keep doing it. But most organizations struggle with measurement. The SANS 2025 Threat Hunting Survey found that organizations face persistent challenges measuring threat hunting effectiveness and demonstrating its business value to leadership. When 61% of teams already cite staffing shortages as their top barrier to hunting, the stakes for measurement are high: prove value, or watch already sparse funding get cut further or eliminated entirely.

The problem is not a lack of data. Security teams can track dozens of metrics. The problem is knowing which ones actually reflect whether hunting is making the organization safer. This guide covers which threat hunting metrics drive security outcomes, which ones create noise, and how to match your measurement approach to your program's maturity level.

Why Most Threat Hunting Metrics Fail

The most common measurement mistake in threat hunting is counting activity instead of impact. Number of hunts completed, hours spent hunting, hypotheses generated: these metrics describe effort, not outcomes. The Splunk PEAK Threat Hunting Framework makes this distinction explicit: "hunts completed" is a poor metric because it says nothing about whether those hunts improved the organization's security posture.

Three specific measurement traps undermine most hunting programs:

- The "count everything" trap. Tracking volume (hunts per month, queries executed, reports filed) creates a false sense of productivity. A team running 20 shallow hunts is not necessarily more effective than a team running 5 deep ones.

- The vanity metrics trap. Metrics that look impressive in a report but do not connect to measurable improvements in security posture. "We investigated 500 hypotheses this quarter" means nothing without context on what those investigations found or fixed.

- The "nothing found" problem. A hunt that confirms expected behavior across critical systems is not a failure. It is a validated coverage baseline. Measuring hunts purely by threats detected penalizes the defensive validation work that makes hunting valuable in the first place.

The underlying issue is structural. According to SACR's 2025 AI SOC Market Landscape report, 40% of security alerts are never investigated, and the SANS 2025 Detection & Response Survey found that 46% of all alerts prove to be false positives. Hunting metrics must account for signal quality, not just signal volume.

Threat Hunting Metrics That Drive Security Outcomes

Effective threat hunting metrics fall into three categories: detection, coverage, and operations. Each measures a different dimension of whether hunting is making the organization safer.

How Do You Measure Threat Hunting Effectiveness?

Detection-based metrics measure whether hunts improve the organization's ability to find threats:

- New detections created or improved. The single most meaningful hunting metric. Every hunt should produce either a new detection rule, a refinement to an existing rule, or a confirmed gap. The Splunk PEAK Framework calls this the primary measure of hunting impact.

- ATT&CK detection coverage delta. The change in percentage of MITRE ATT&CK (Adversarial Tactics, Techniques, and Common Knowledge) techniques your detection rules cover, measured before and after hunting campaigns. This answers "did hunting improve our detection capability?" Enterprise SIEMs (Security Information and Event Management) have detection coverage for just 21% of ATT&CK techniques despite having enough telemetry to detect 90% or more, according to CardinalOps' 2025 annual report. Worse, 13% of existing SIEM rules are broken and will never trigger. Hunting fills the gap that static detection cannot.

- Findings yield rate. The ratio of hunts that produce actionable findings to total hunts conducted. Actionable findings include: new detection rules created, confirmed threats identified, validated coverage gaps, and confirmed baseline coverage (hunts that validate expected behavior across a defined scope). This separates productive hunting from busy hunting.
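
As a rough illustration, the two ratio-style metrics above can be computed from a simple hunt log. This is a minimal sketch, not a framework-defined method: the `Hunt` record, the outcome labels, and the sample technique IDs are hypothetical stand-ins for whatever your hunt tracker and detection inventory export.

```python
from dataclasses import dataclass

# Outcome labels mirror the "actionable findings" list above.
# The identifiers themselves are illustrative assumptions.
ACTIONABLE_OUTCOMES = {
    "new_detection",
    "confirmed_threat",
    "validated_gap",
    "confirmed_baseline",
}


@dataclass
class Hunt:
    name: str
    outcome: str  # one of ACTIONABLE_OUTCOMES, or e.g. "inconclusive"


def findings_yield_rate(hunts: list[Hunt]) -> float:
    """Share of hunts that ended in an actionable finding (0.0 to 1.0)."""
    if not hunts:
        return 0.0
    actionable = sum(1 for h in hunts if h.outcome in ACTIONABLE_OUTCOMES)
    return actionable / len(hunts)


def coverage_delta(before: set[str], after: set[str], in_scope: set[str]) -> float:
    """Change in detection coverage, in percentage points, over in-scope techniques."""
    if not in_scope:
        return 0.0
    pct_before = len(before & in_scope) / len(in_scope) * 100
    pct_after = len(after & in_scope) / len(in_scope) * 100
    return pct_after - pct_before


# Hypothetical quarter: four hunts, three with actionable outcomes.
hunts = [
    Hunt("lateral movement via RDP", "new_detection"),
    Hunt("credential dumping", "confirmed_baseline"),
    Hunt("phishing follow-on activity", "validated_gap"),
    Hunt("script interpreter abuse", "inconclusive"),
]

# Technique IDs covered by detection rules before and after the campaign.
in_scope = {"T1059", "T1003", "T1021", "T1566"}
before = {"T1059"}
after = {"T1059", "T1003"}

print(findings_yield_rate(hunts))               # 0.75
print(coverage_delta(before, after, in_scope))  # 25.0
```

Reporting the delta in percentage points rather than as a relative change keeps it comparable across quarters even when the starting coverage is small.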

Coverage-based metrics measure the breadth and depth of what your hunting program examines:

- ATT&CK technique hunt coverage. A snapshot metric: the percentage of relevant ATT&CK techniques your hunting program has actively tested and validated this period, mapped by tactic and priority. This answers "what is our current hunting footprint?"

- Data source coverage rate. The percentage of available security data sources actively queried during hunts, including SIEM, endpoint detection and response (EDR), cloud, and identity systems. Blind spots in data access are blind spots in detection.

- Hypothesis coverage. The ratio of techniques and threat scenarios actually tested to those in scope. Tracks whether the hunting program is addressing the full threat model or concentrating on familiar ground.
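
The coverage metrics above are all ratios of "what was examined" to "what is in scope," so they can be sketched with set arithmetic. The example below is an assumption-laden illustration: the technique-to-tactic mapping, the data source names, and both function names are hypothetical, and a real program would load the mapping from its own prioritized ATT&CK scope.

```python
from collections import defaultdict


def hunt_coverage_by_tactic(relevant: dict[str, str], hunted: set[str]) -> dict[str, float]:
    """Percent of relevant techniques hunted this period, grouped by tactic.

    `relevant` maps technique ID -> tactic name.
    """
    totals: dict[str, int] = defaultdict(int)
    covered: dict[str, int] = defaultdict(int)
    for technique, tactic in relevant.items():
        totals[tactic] += 1
        if technique in hunted:
            covered[tactic] += 1
    return {tactic: covered[tactic] / totals[tactic] * 100 for tactic in totals}


def data_source_coverage(available: set[str], queried: set[str]) -> float:
    """Percent of available data sources that hunts actually queried."""
    if not available:
        return 0.0
    return len(available & queried) / len(available) * 100


# Hypothetical scope: four techniques across two tactics.
relevant = {
    "T1059": "execution",
    "T1204": "execution",
    "T1003": "credential-access",
    "T1110": "credential-access",
}
hunted = {"T1059", "T1003", "T1110"}

by_tactic = hunt_coverage_by_tactic(relevant, hunted)
source_pct = data_source_coverage(
    available={"siem", "edr", "cloud", "identity"},
    queried={"siem", "edr"},
)

print(by_tactic)   # {'execution': 50.0, 'credential-access': 100.0}
print(source_pct)  # 50.0
```

Grouping by tactic makes it easier to see whether coverage is concentrated on familiar ground, which a single overall percentage would hide.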

Operational metrics measure efficiency and execution:

- Mean Time to Detect (MTTD) improvement attributable to hunting. How much faster are threats being identified as a direct result of hunt-derived detections?

- Hunt cadence. Hunts executed per week or month, tracked as a trend line. Cadence that drops signals resource constraints before they become critical.

- Hypothesis-to-conclusion time. The elapsed time from forming a hypothesis to reaching a validated conclusion. Separates investigation efficiency from investigation thoroughness.
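
Hunt cadence and hypothesis-to-conclusion time both fall out of two timestamps per hunt. The following is a minimal sketch assuming each hunt is logged as an `(opened, concluded)` pair; that record shape and the function names are illustrative, not taken from any specific tool. The median is used here as one reasonable choice, since a single long-running hunt can skew a mean.

```python
from collections import Counter
from datetime import datetime
from statistics import median

# Hypothetical hunt log: (hypothesis opened, validated conclusion reached).
records = [
    (datetime(2025, 1, 6, 9), datetime(2025, 1, 6, 17)),
    (datetime(2025, 1, 13, 9), datetime(2025, 1, 14, 9)),
    (datetime(2025, 2, 3, 9), datetime(2025, 2, 3, 21)),
]


def median_hours_to_conclusion(records: list[tuple[datetime, datetime]]) -> float:
    """Median elapsed hours from hypothesis to conclusion.

    Requires at least one record; statistics.median raises on empty input.
    """
    hours = [(done - opened).total_seconds() / 3600 for opened, done in records]
    return median(hours)


def monthly_cadence(records: list[tuple[datetime, datetime]]) -> dict[str, int]:
    """Hunts concluded per calendar month, keyed as 'YYYY-MM'."""
    return dict(Counter(done.strftime("%Y-%m") for _, done in records))


print(median_hours_to_conclusion(records))  # 12.0
print(monthly_cadence(records))             # {'2025-01': 2, '2025-02': 1}
```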

What Is Mean Time to Detect and Why Does It Matter?

Mean Time to Detect (MTTD) measures the interval between a threat entering the environment and the security team identifying it. Mean Time to Respond (MTTR) measures the interval between detection and containment. Both are foundational SOC (Security Operations Center) metrics, but they apply differently to threat hunting than to alert-driven detection.

The benchmarks are clear. The IBM 2025 Cost of a Data Breach Report found the average breach lifecycle is 241 days (181 days to identify a breach and 60 days to contain it), with organizations deploying AI and automation extensively cutting that lifecycle by 80 days. Leading organizations achieve MTTR under 20 minutes and maintain false positive rates below 10%, according to Expel's SOC performance benchmarks. And 56% of organizations now prioritize MTTD and MTTR as their core effectiveness indicators, per Securonix's 2025 automation report.

For threat hunting specifically, MTTD is the metric that captures whether hunting is finding threats faster than passive detection alone. Track the delta: what is your MTTD for threats discovered through hunting versus threats discovered through automated alerting? That gap quantifies the hunting program's detection value.
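
One way to track that delta is to tag each confirmed detection with how it was discovered and compare the averages. The sketch below assumes a hypothetical `Detection` record with a `source` label of `"hunt"` or `"alert"`; note that `first_activity` is usually an estimate reconstructed during investigation, so the resulting numbers are only as good as that estimate.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean
from typing import Optional


@dataclass
class Detection:
    source: str               # "hunt" or "alert" (illustrative labels)
    first_activity: datetime  # estimated start of adversary activity
    detected: datetime        # when the team identified the threat


def mttd_hours(detections: list[Detection], source: str) -> Optional[float]:
    """Mean time to detect, in hours, for one discovery source."""
    durations = [
        (d.detected - d.first_activity).total_seconds() / 3600
        for d in detections
        if d.source == source
    ]
    return mean(durations) if durations else None


# Hypothetical incidents.
detections = [
    Detection("hunt", datetime(2025, 3, 1), datetime(2025, 3, 3)),     # 48 h
    Detection("alert", datetime(2025, 3, 1), datetime(2025, 3, 4)),    # 72 h
    Detection("alert", datetime(2025, 3, 10), datetime(2025, 3, 15)),  # 120 h
]

hunt_mttd = mttd_hours(detections, "hunt")
alert_mttd = mttd_hours(detections, "alert")
mttd_delta = alert_mttd - hunt_mttd

print(hunt_mttd, alert_mttd, mttd_delta)  # 48.0 96.0 48.0
```

A positive delta means hunt-discovered threats were found sooner than alert-discovered ones; with only a handful of incidents per period, treat it as a trend indicator rather than a precise benchmark.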

Threat Hunting KPIs for Every Maturity Level

What Are the Best KPIs for a Threat Hunting Program?

The right metrics depend on where the program is. Measuring advanced-stage KPIs (Key Performance Indicators) in an early-stage program creates noise, not insight. Gartner's SOC maturity model (Minimal, Reactive, Proactive, Predictive) recommends evolving KPIs at each stage, starting with throughput and speed metrics before advancing to AI performance, confidence scoring, and analyst satisfaction metrics.

The progression follows a pattern. Early-stage programs measure whether hunting is happening at all. Mid-stage programs measure whether hunting is improving detection. Advanced programs measure how efficiently hunting scales and whether AI-augmented operations are outperforming manual baselines.

How AI Changes What You Measure

AI-augmented threat hunting does not just speed up existing metrics. It creates new ones. When AI handles the search and correlation phases of a hunt, the measurement framework shifts from tracking human effort to tracking human-plus-machine outcomes.

New metrics that emerge with AI-augmented hunting:

- Compression ratio. Manual hunting baseline time compared to AI-assisted time for the same scope. If a hypothesis that takes an analyst 10-20 hours to investigate manually takes approximately one hour with AI assistance, that is a 10-20x compression ratio, a direct measure of capacity gain.

- Automated hunt cadence. The number of hunts executed continuously without human initiation. Manual programs operate on cycles (weekly, monthly, quarterly). AI-augmented systems run hunts 24/7.

- Coverage expansion rate. How quickly the AI extends ATT&CK technique hunt coverage by automating searches across all connected data sources simultaneously.

- Investigation depth consistency. Whether investigations maintain the same rigor regardless of time of day, alert volume, or analyst availability. AI delivers the same depth at 2 AM that it delivers at 2 PM.
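
The compression ratio in the first bullet is a simple quotient of manual time over AI-assisted time for the same scope. A minimal sketch, using the 10-20 hour and one hour figures from the example above (the function name is illustrative):

```python
def compression_ratio(manual_hours: float, assisted_hours: float) -> float:
    """Manual baseline time divided by AI-assisted time for the same hunt scope."""
    if assisted_hours <= 0:
        raise ValueError("assisted_hours must be positive")
    return manual_hours / assisted_hours


# Range from the example above: 10-20 manual hours versus roughly 1 assisted hour.
low = compression_ratio(10, 1)
high = compression_ratio(20, 1)

print(low, high)  # 10.0 20.0
```

The ratio is only meaningful when both measurements cover the same hypothesis and data sources, so it helps to record the scope alongside each timing.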

The data supports the shift. 60% of organizations using AI in their SOC have cut investigation time by at least 25%, according to the 2025 Pulse of the AI SOC report from Cybersecurity Insiders. Some organizations report even larger gains: an 85% reduction in manual alert investigation time and 5x faster MTTR are documented in production environments.

These AI-specific KPIs align with the framework in Chapter 5 of The AI SOC Team Playbook, which introduces four metrics designed for the Agentic SOC: Analyst Time Reclaimed (ATR), Proactive Hunts per Day, Time to Insight (TTI), and Attack Surface Coverage Percentage. Each measures a dimension that traditional metrics miss: how much analyst capacity was created, how much proactive coverage was achieved, how quickly the SOC reaches clarity, and how thoroughly the environment is examined.

For a deeper look at how AI is reshaping the practice of threat hunting, the companion blog covers speed, detection capability, intelligence operationalization, and accessibility.

Proving Threat Hunting ROI to Leadership

How Do You Prove Threat Hunting ROI to Leadership?

The metrics above measure whether hunting is working. Leadership cares about business outcomes. Translating between the two is where most hunting programs fail to secure sustained funding. The SANS 2025 survey found that without rigorous metrics and reporting, it is challenging to demonstrate business value, and securing funding for proactive security requires measurable outcomes.

Three frames that connect hunting metrics to board-level concerns:

- Risk reduction. Every percentage point of ATT&CK detection coverage gained through hunting is a measurable reduction in the share of adversary techniques that would go undetected. Frame it as: "Our hunting program reduced undetected exposure from 79% of adversary techniques to X%."

- Cost avoidance. The average data breach costs $4.44 million (IBM 2025). Organizations deploying AI and automation spend $1.9 million less per breach than those that do not. Frame hunting ROI as the cost of breaches prevented or contained faster.

- Capacity creation. Translate MTTD and MTTR improvements into analyst hours recovered. If AI-augmented hunting compresses 10-20 hours of manual work per hunt to one hour, that is analyst capacity redirected from repetitive search work to strategic security decisions.
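
The capacity-creation frame reduces to straightforward arithmetic once a hunt volume is chosen. In the sketch below, the per-hunt hours come from the example in the bullet above, while `HUNTS_PER_QUARTER` is purely an assumed figure for illustration; substitute your own cadence.

```python
def hours_recovered(hunts: int, manual_hours_per_hunt: float,
                    assisted_hours_per_hunt: float) -> float:
    """Analyst hours freed when each hunt takes assisted time instead of manual time."""
    return hunts * (manual_hours_per_hunt - assisted_hours_per_hunt)


HUNTS_PER_QUARTER = 12  # assumption for illustration, not a benchmark

low_estimate = hours_recovered(HUNTS_PER_QUARTER, 10, 1)
high_estimate = hours_recovered(HUNTS_PER_QUARTER, 20, 1)

print(low_estimate, high_estimate)  # 108 228
```

Presenting the result as a range rather than a single number reflects the uncertainty in the manual baseline and tends to be easier to defend in front of leadership.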

A three-metric summary for executive reporting: what percentage of the attack surface is actively hunted (coverage), how fast threats are found that tools miss (speed), and what each hunt costs relative to what it finds (efficiency). These three metrics tell the story without requiring the audience to understand security operations.

Key Takeaways

- Measure outcomes (new detections created, ATT&CK detection coverage delta, MTTD improved), not effort (hunts completed, hours spent).

- Enterprise SIEMs cover only 21% of ATT&CK techniques despite having telemetry for 90%+. Threat hunting fills the detection gap.

- The average breach lifecycle is 241 days, with AI and automation cutting that by 80 days. MTTD improvement attributable to hunting is how hunting programs contribute to that reduction.

- Match metrics to maturity. Ad hoc programs track activity. Operational programs track findings yield. AI-augmented programs track compression ratio, automated cadence, and Analyst Time Reclaimed.

- Translate hunting metrics into business language (risk reduction, cost avoidance, capacity creation) to secure sustained investment.

Threat hunting measurement is evolving alongside the practice itself. The shift from manual, periodic hunting to AI-augmented, continuous operations does not just change how fast hunts execute. It changes what is worth measuring. The metrics that mattered when hunting was a quarterly exercise by a dedicated team are not the metrics that matter when AI agents execute hunts around the clock. Organizations that update their measurement frameworks to match their operational maturity and translate those metrics into language leadership understands are the ones that build hunting programs that last.

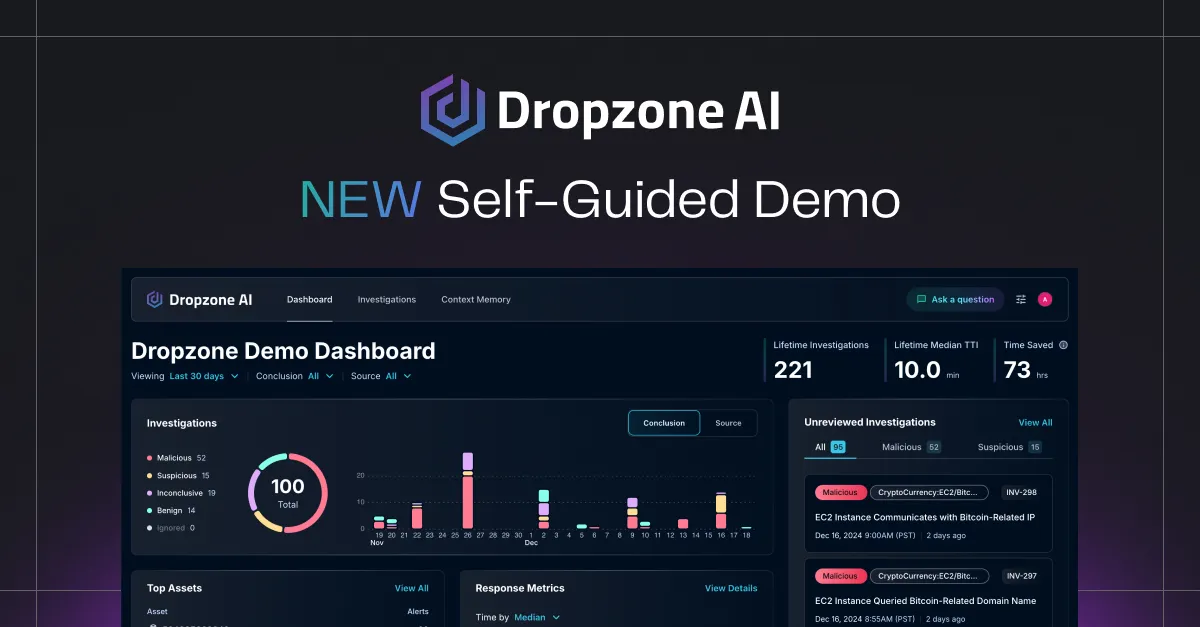

Dropzone AI's AI Threat Hunter, launching in Q2 2026, is designed to automate the search and analysis phases of proactive threat hunting so analysts can focus on forming hypotheses, directing investigations, and acting on findings.